Now that it is getting cooler here in the Buckeye State, my lab gear has been hard at work keeping my office nice and toasty warm. While spewing out BTUs, it has also helped me test some new products, one of which is AutoCache by Proximal Data.

What is AutoCache?

“Proximal Data’s AutoCache software can dramatically increase virtual machine (VM) density 2x-3x by eliminating I/O bottlenecks with adaptive I/O caching. When coupled with flash PCIe cards or solid state drives (SSDs) from our partners, Proximal Data’s fast virtual cache significantly improves the efficiency and performance of virtualized servers without disrupting IT operations or processes.” –ProximalData.com

So in geek terms, this means that AutoCache is a lot like giving your servers Red Bull. It uses a flash-based storage device in the server to cache as much read I/O as possible so that the SAN isn’t tasked with reading as much data. Because the SAN is doing less work reading data, it is freed up to do more writes.

AutoCache is not alone in its niche; other products include hardware solutions such as EMC’s VFCache and Fusion-io’s ioTurbine. What is different about AutoCache is that Proximal Data is not a hardware company. They only make the software, and then point you to a list of suggested hardware partners to finish out the bill of materials (BOM).

AutoCache in my Home Lab

For testing purposes, I outfitted one of my HP DL360 G5 servers with an Intel 330 solid state drive from MicroCenter for around $100 USD. I put the drive in a simple RAID 0 on the P400 RAID controller and off we went. Before I could install the AutoCache software, however, I had to roll my server back to ESXi 5.0 Update 1. (I’m told that vSphere 5.1 support is on the way.) After doing so, installation was a breeze: I deployed their manager virtual appliance and then ran a few CLI commands on my ESXi host. Everything after that could be done from the web interface (which, I might add, is simple and easy to use).

The back end storage in my home lab is an EMC VNXe3100 with 6x600GB 15k SAS drives and 6x2TB 7200rpm NL-SAS drives. The SAS drives are configured as a 4+1 RAID 5 (plus 1 hot spare), and the NL-SAS drives as a 4+2 RAID 6. The servers connect to storage over multiple 1Gbps iSCSI connections using VMware’s Round Robin Path Selection Policy.

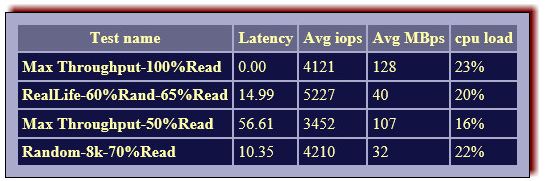

Before I started doing anything with AutoCache, I wanted to get an idea of just how fast the Intel 330 120GB SSD was, so I ran IOMeter from a Windows virtual machine that was stored on the drive. The results follow:

Installation

After downloading the installation package, I deployed the AutoCache management appliance to my vSphere cluster. Finalizing the virtual appliance setup was just like any other VA (enter network info, set a password, etc.), so I won’t go into details here. I also enabled SSH on the ESXi host that has the SSD in it. Remote CLI and Update Manager are also options for installing the AutoCache VIB, but honestly, for one host, it was just easier to enable SSH.

To get the VIB file installed I ran three simple commands:

    esxcli network firewall ruleset set -e=true -r=httpClient
    esxcli software vib install --no-sig-check --depot=http://<vAppIP>/depot/50/
    reboot

After my host rebooted, the next step was to register AutoCache with vCenter (which I believe is optional, but it’s pretty awesome how integrated their solution gets; more on that later).

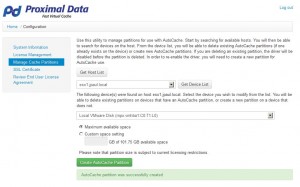

After that I had to install my key and then create the AutoCache partition.

After creating your cache partition you are done! Yep, that is all it took. There are no agents to install in your guest OSes and no other reconfiguration steps to take.

How AutoCache works

Let me start by saying that the guys from Proximal Data are awesome! My first point of contact was Kyle Murley; Kyle answered emails and tweets at all sorts of hours so I want to thank him for being responsive and helping me get this review started quickly. He also got me in touch with Rory, the CEO and Founder of Proximal Data, so that we could talk more about how AutoCache works and so he could verify what I was seeing in my lab.

Here is what I learned.

- Cache, in general, is a statistics game. For the cache to work we need hot data and cold data; if all data were accessed equally, cache would be useless.

- Whether read caching will fit your workload is not trivial to predict. Every data access pattern is different, and some patterns are simply hard on caching solutions. If your workload is primarily writes, or 100% random with very few repeated reads, a read cache will show little to no benefit.

- AutoCache does not cache information the first time it sees it. Instead, it waits until it has seen the data several times. This is done so that your SSD or flash device lasts longer, and so that data that is read once a day does not take up room in cache.

- AutoCache aims to cache the data that would normally cause a “hot spot” on your array, and then make sure that the data is always read from cache and not from disk, thereby alleviating the hot spot.

- Like other host-based cache solutions, if a VM vMotions to a different host, that host will start from scratch. That particular host will start with a cache size of zero and start looking for blocks to cache.

- AutoCache sits below things like Content Based Read Caching (CBRC) in the VMware disk stack, therefore things that are optimized by CBRC (think VDI) are only slightly improved with AutoCache.

- My normal IOMeter tests were not good workloads for testing a caching solution. The run times were only 5 minutes, and by the time AutoCache saw the data a few times the test was over. Therefore we used a special IOMeter workload ICF file that I will use for testing all other caching solutions I might review in the future.
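The “wait until the data has been seen several times” idea from the list above can be sketched in a few lines of Python. This is only a toy model and not Proximal Data’s actual algorithm: the class name, the threshold of 3 reads, the LRU eviction, and the 90/10 hot/cold split are all assumptions picked for illustration, and writes are not modeled at all.

```python
import random
from collections import OrderedDict


class AdmitAfterNReadsCache:
    """Toy read cache: a block is admitted only after `threshold` reads (assumed policy)."""

    def __init__(self, capacity_blocks, threshold=3):
        self.capacity = capacity_blocks
        self.threshold = threshold
        self.read_counts = {}        # block -> reads seen while not yet cached
        self.cached = OrderedDict()  # cached blocks, least recently used first

    def read(self, block):
        """Return True on a cache hit, False when the read has to go to the array."""
        if block in self.cached:
            self.cached.move_to_end(block)  # mark as most recently used
            return True
        count = self.read_counts.get(block, 0) + 1
        if count >= self.threshold:
            # Seen often enough: admit it, evicting the LRU block if the cache is full.
            self.read_counts.pop(block, None)
            self.cached[block] = None
            if len(self.cached) > self.capacity:
                self.cached.popitem(last=False)
        else:
            self.read_counts[block] = count
        return False  # this particular read was still served by the array


def hot_cold_reads(n, total_blocks=10_000, hot_blocks=1_000, hot_fraction=0.9, seed=1):
    """Yield n block numbers; `hot_fraction` of the reads land on the first `hot_blocks`."""
    rng = random.Random(seed)
    for _ in range(n):
        if rng.random() < hot_fraction:
            yield rng.randrange(hot_blocks)
        else:
            yield rng.randrange(hot_blocks, total_blocks)


def hit_rate_by_window(cache, reads, window):
    """Return the cache hit rate for each consecutive window of `window` reads."""
    rates, hits = [], 0
    for i, block in enumerate(reads, 1):
        hits += cache.read(block)
        if i % window == 0:
            rates.append(hits / window)
            hits = 0
    return rates


if __name__ == "__main__":
    cache = AdmitAfterNReadsCache(capacity_blocks=2_000, threshold=3)
    rates = hit_rate_by_window(cache, hot_cold_reads(50_000), window=10_000)
    for i, rate in enumerate(rates, 1):
        print(f"window {i}: {rate:.0%} of reads served from cache")
```

Running it shows the same shape as a real warm-up: the first window has a lower hit rate because every block has to be read a few times before it is admitted, and later windows settle near the share of reads that target the hot region. Creating a fresh `AdmitAfterNReadsCache` is a rough stand-in for what happens after a vMotion, where the new host starts with an empty cache.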

So what are the numbers ?!?!

Let’s start with some baseline numbers. This first picture is of the VNXe statistics page, and on the far right side of the graphs we can see what load was put on the array during my baseline test. The datastore holding the VMDK used for testing was on SPA, and SPA was seeing about 20MB/s of read traffic and about 5MB/s of write traffic during the entire test.

The baseline results showed about 1500 IOps on average for what the VNXe could handle on its own.

Now let’s turn AutoCache on and see what we get…

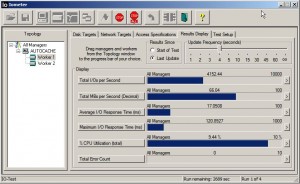

As you can see in these next couple of screenshots, AutoCache has very deep integration with VMware. In fact, I would say that its level of integration compares to that of the Tintri storage array: you are able to isolate exactly which VMs and which datastores things are happening to at a very granular level.

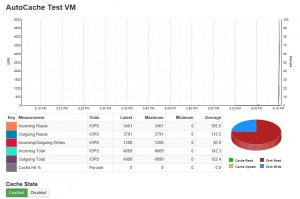

Here are the starting graphs from AutoCache right as the test was starting. As you can see, there are no cache hits because AutoCache has not started caching the data it has seen. (Remember, it doesn’t start caching until it sees the data a couple of times.) Because of that, the red part of the pie graph is very large; this indicates that the reads are coming from the array.

At this point in IOmeter I was seeing about the same IOps as I saw during the test without AutoCache. Let’s fast forward about 3 minutes.

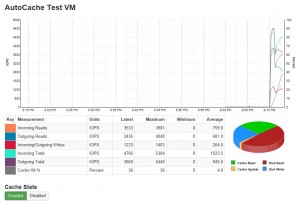

As you can see, the Cache Hit % is going up and we are starting to see the green area of the pie get larger. This indicates that AutoCache is seeing reads for the same blocks of data, and that instead of going to the array it is using the cached copy from flash. On the IOmeter side I was starting to see IOps reach into the 2500 range. Fast forward again to the 15 minute mark.

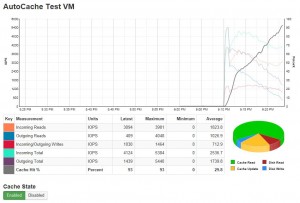

OK, as you can see the Cache Hit % keeps climbing. This far into the test we have cached all of the data that is being read from our “hot spots,” and even some data from the cold areas that has been read several times. Below are the IOmeter stats from the 15 minute mark as well: we are seeing over 4000 IOps! Clearly, the VNXe isn’t doing that alone.

I let this test run for about 60 minutes, and I’m sure you can guess the rest of the story: by the end of it AutoCache was seeing a 100% cache hit percentage and IOps were in the 4500 range. If you remember the baseline of the SSD, we were able to get about 4000 IOps out of it, so if you assume that all reads were coming from there, the VNXe array was freed up and only needed to handle the writes. Combine the two and it’s not hard to believe that 4500 IOps is possible.
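To put those figures side by side, here is a small back-of-the-envelope calculation. It only uses the approximate IOmeter numbers quoted in this post (about 1500 IOps baseline, then roughly 2500, 4000, and 4500 IOps as the cache warmed up); the variable and function names are just for illustration.

```python
# Approximate IOmeter figures quoted in this post, in IOps.
BASELINE_IOPS = 1500  # VNXe on its own, AutoCache disabled
OBSERVED_IOPS = {
    "3 min": 2500,
    "15 min": 4000,
    "60 min": 4500,
}


def speedup(observed_iops, baseline_iops=BASELINE_IOPS):
    """Return how many times faster a run was compared to the baseline."""
    return observed_iops / baseline_iops


for label, iops in OBSERVED_IOPS.items():
    print(f"{label:>6}: {iops} IOps = {speedup(iops):.2f}x baseline")
```

By this rough math the test went from about 1.67x the baseline a few minutes in to about 3x once the cache was fully warm.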

But let’s take a look at it from the array’s perspective. Remember that before we had a nice flat read and write line during the entire test. This time the read line starts out at about the same level as before, but as time goes on and the cache hit % goes up, the read line falls lower and lower. If you click the picture and zoom in, you can also see that the write MB/s is different: as time progresses the rate climbs, and at 21:30 on the graphs it actually exceeds the 6MB/s line, which was not accomplished when AutoCache was disabled. (The previous results with AutoCache disabled are on the left side of this graph; there is about a 5 minute break between the AutoCache disabled test and the AutoCache enabled test, around the 21:05-21:10 mark.)

Conclusions and Thoughts

After playing around with AutoCache for a week or so and seeing the improvement it can make for this little VNXe, it’s going to be hard to turn it off. I know that every time my lab slows down I’m going to be thinking “boy, it would be nice to have that” LOL. Nevertheless, the host I’m using needs to be upgraded back to 5.1 and put back into the mix. So my recommendation is this: if you need to give your cluster a boost but don’t have the option to add FAST Cache or a similar solution that can act as read and write cache, then AutoCache is something I would definitely check out.

AutoCache is also very affordable. It is priced per host (not per CPU) and it has a tiered model based on the amount of cache storage you want to use. For example, I was using an Intel 330 SSD, which is 120GB in capacity; if I were to outfit both of my hosts with SSDs (two in a RAID 1 for production use) and purchase AutoCache, I would only set myself back about $2500. Obviously, that is way more than I am going to shell out for my lab, but for a production environment that is getting a little slow it is peanuts. I think this would be perfect for SMB environments that are back ended by an HP P2000 or EMC VNXe (or comparable models from other vendors) that do not have the ability to add flash drives into the array as cache.

*As always, this was not a paid review; it was just something I saw at VMworld and wanted to follow up on.

Very interesting alternative to those who cannot afford the VNX and the Fast Cache option!

Neat post. IOmeter is not necessarily the best choice for testing caching software because the workloads that it generates are not realistic. Depending on the settings, it may write and read all 0’s to the same locations on disk. That may explain the 100% cache hit rate that you experienced.

Did you happen to see whether AutoCache was able to support array-based snapshot rollback?

What HBA are you using to get access to your iSCSI storage? From what I can see, the software iSCSI initiator doesn’t work with the caching for me.

Regards

I use Broadcom 5709 network cards, which have a hardware iSCSI initiator on them.

I did not try array-based snapshot rollbacks. As for IOmeter, I worked with the guys at AutoCache to help develop an IOmeter workload that created both hot and cold areas on the disk, to get something as close to realistic as possible. In their own testing they do not use IOmeter; instead they use another tool that is able to play back real workloads.

Justin, do you mind sharing your ICF? Thanks!

Just out of curiosity, how did you manage to get your trial license ? Going through the standard ways, I’ve gotten in touch with people very motivated to meet to try and sell the product, but have yet to see anything looking like a license.

Being a blogger definitely helps. I’ll dig up some contact info and forward it to the email that you used on your comment.

Download a Free Evaluation of Award Winning AutoCache software:

http://www.proximaldata.com/product/try_us_program.php

Hi Justin, are you able to share details of your ICF file configuration ? Thanks