Veeam is a disk-to-disk backup solution, which makes restore times much faster than tape backups. One of the drawbacks, as with any disk-to-disk backup software, is the massive amount of disk space required to keep full backup images for long periods of time. Many people I talk to don’t realize that if they keep a month of tapes and their full backup is 500GB (for example), then when they move to disk-to-disk backups they will need over 2TB of disk space. And of course, as your retention period grows, so do your disk space requirements.
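To make the arithmetic concrete, here is a minimal sketch of the sizing estimate. The function name and the incremental figures are hypothetical, added only for illustration; the 500GB full and the one-month retention come from the example above.

```python
def disk_needed_gb(full_gb, fulls_retained, incr_gb=0, incrs_retained=0):
    """Rough raw capacity needed on disk, before any dedupe or compression."""
    return full_gb * fulls_retained + incr_gb * incrs_retained


# Four weekly fulls of 500 GB already add up to about 2 TB...
fulls_only = disk_needed_gb(500, 4)

# ...and any incrementals kept alongside them push the total past 2 TB.
# The 25 GB x 24 figures are made up for illustration.
with_incrementals = disk_needed_gb(500, 4, incr_gb=25, incrs_retained=24)

print(fulls_only, with_incrementals)
```

Plug in your own full size and retention count to see how quickly the number grows.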

So what is EMC Data Domain? Data Domain is a product that combines hardware and software: it comes as a hardware appliance of 2U or more with its deduplication software running on it. It is Linux based, but provides CIFS, NFS, and VTL interfaces for getting your backup data onto the appliance. At its most basic, a Data Domain is just a Linux box with hard drives in it that stores your data… but it's the compression and deduplication software on it that makes it awesome.

So how does it work with Veeam?

Version 6 of Veeam Backup and Replication introduced the idea of Backup Repositories. A Backup Repository is nothing more than a destination for your Veeam backups. A repository can be a CIFS share, a Windows local drive, or a Linux server; when using a Data Domain we will use the CIFS option. More on how to set up a repository in a minute, but first let's look at how to set up the Data Domain side of things.

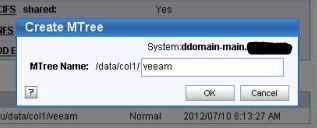

Step 1. Create an MTree

Think of an MTree like a directory: data is stored in it, and it can be shared via the CIFS protocol. To create an MTree, log in to the DD appliance and go to “Data Management -> MTree”. There, click “Create”; the only thing we need to do is fill in the name of the MTree we want to make. Make a note of your MTree path for when we create the CIFS share; in this case it is “/data/col1/veeam”.

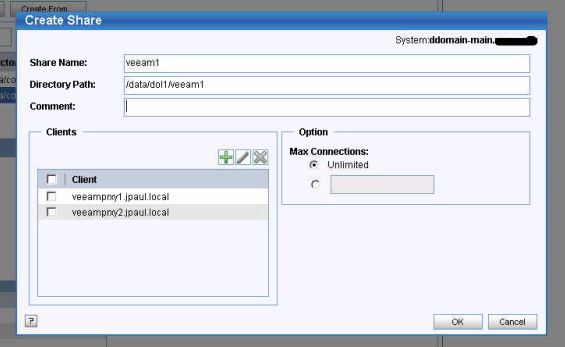

Step 2. Create the CIFS Share

After our MTree is created, the next step is to share it via CIFS so that Veeam can see it. To do this, click on “CIFS” under Data Management, then select the “Shares” tab. After clicking “Create” you will see a box that asks for the share name (can be whatever you want) and then the directory path. This is where you paste in what you noted down earlier; it should look something like “/data/col1/MTREE_NAME_HERE”. The last step is to tell it which hosts can access this share, since by default no one can access a share unless you allow it. For Veeam we need to add ALL of the Veeam proxies that will connect directly to the share; in this example I have added veeamprxy1 and veeamprxy2, which are my proxy host names. If you did not configure a proper DNS server on your DD appliance, you will want to use IP addresses here instead of host names.

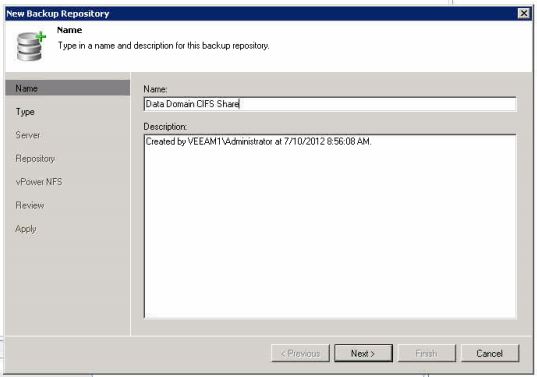
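As a planning aid, the values entered in Steps 1 and 2 can be sketched as a small record. This is only an illustration: the helper names are hypothetical, and nothing here talks to the appliance. It builds the directory path from the MTree name and flags which client entries are host names, since those only work if the appliance can resolve them through DNS.

```python
import ipaddress

MTREE_ROOT = "/data/col1"  # path prefix shown in the article


def mtree_path(name):
    """Directory path to paste into the CIFS share dialog."""
    if not name or "/" in name:
        raise ValueError("MTree name must be a single path component")
    return f"{MTREE_ROOT}/{name}"


def is_ip(entry):
    """True if the client entry is a literal IP address."""
    try:
        ipaddress.ip_address(entry)
        return True
    except ValueError:
        return False


def share_plan(share_name, mtree_name, clients):
    """Collect the share settings and list clients that depend on DNS."""
    return {
        "share": share_name,
        "path": mtree_path(mtree_name),
        "clients": list(clients),
        "needs_dns": [c for c in clients if not is_ip(c)],
    }


plan = share_plan("veeam", "veeam", ["veeamprxy1", "veeamprxy2"])
print(plan["path"])
print(plan["needs_dns"])
```

If `needs_dns` is not empty and the appliance has no DNS server configured, swap those entries for the proxies' IP addresses.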

Step 3. Add a Repository in Veeam

The last thing to do is to tell Veeam about the new CIFS share. To do this, go back to Veeam’s Infrastructure section and click on Backup Repositories. From here we can add a new CIFS repository using the share name that we created on the Data Domain. The only other information you will need is the IP address of your DD appliance, which you should already know since you were accessing the web interface to set up the share ;).

3a. First name the new repository

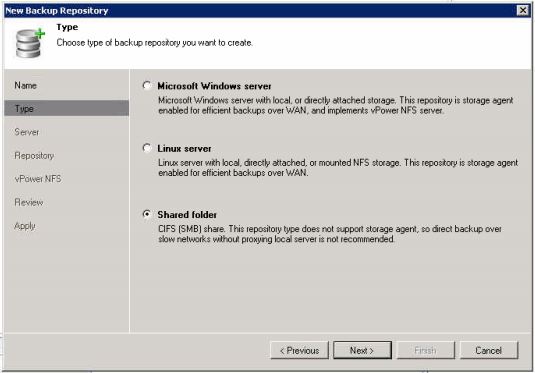

3b. Select CIFS as the repository type

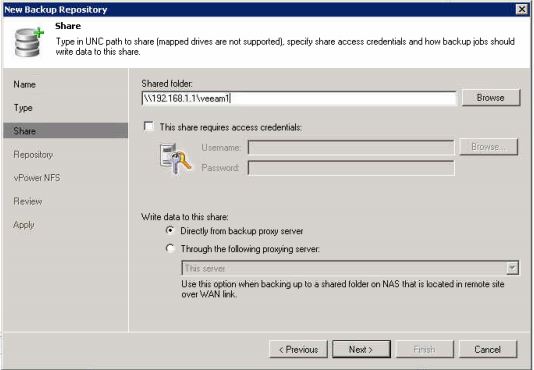

3c. Enter the UNC path to the CIFS share on the DD appliance

After that you can just click “Next” through the rest of the steps to complete the wizard and add the repository to Veeam. As a bit of troubleshooting: on the page right after the one shown in the last screenshot (called “Repository”) you can click the Populate button. If nothing shows up, then the DD appliance's share probably does not list the proper IP address to allow this host to talk to it. If it does populate, then you should be good to go.
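For step 3c, the UNC path is simply the appliance's IP address followed by the share name from Step 2. A minimal sketch of how it is formed; the function name is hypothetical and `192.0.2.10` is a placeholder documentation address, not a real appliance:

```python
import ipaddress


def unc_path(appliance_ip, share_name):
    """Build the \\\\server\\share string that the Veeam wizard asks for."""
    ipaddress.ip_address(appliance_ip)  # raises ValueError if not a valid IP
    if not share_name or "\\" in share_name or "/" in share_name:
        raise ValueError("share name must not be empty or contain slashes")
    return f"\\\\{appliance_ip}\\{share_name}"


print(unc_path("192.0.2.10", "veeam"))
```

Note that the share name goes here, not the “/data/col1/...” directory path; the two only match if you named them the same.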

I almost forgot the “Why”…

Because Veeam uses session-based dedup and compression, we end up with a full backup each week (which is a good thing); the downside, however, is that we need a bunch of storage to keep many weeks of backups. When we add a Data Domain or other dedupe appliance to the mix, we are able to deduplicate those full weekly backups and store the unique blocks only once. So for any type of long-term retention, a dedupe appliance is the way to go. Also, because the Data Domain is able to further deduplicate and compress the data, it is great for replicating backup data offsite.
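The idea of storing unique blocks only once can be shown with a toy sketch. It assumes fixed 4-byte blocks and SHA-256 fingerprints purely for illustration; a real appliance segments and fingerprints data in its own, more sophisticated way, and the byte strings below stand in for two weekly full backups that differ in one block.

```python
import hashlib


def dedupe_store(backups, block_size=4):
    """Store each distinct block once, keyed by its fingerprint."""
    store = {}
    for data in backups:
        for i in range(0, len(data), block_size):
            block = data[i:i + block_size]
            store.setdefault(hashlib.sha256(block).hexdigest(), block)
    return store


week1 = b"AAAABBBBCCCCDDDD"  # first weekly full
week2 = b"AAAABBBBCCCCEEEE"  # second weekly full, one block changed

store = dedupe_store([week1, week2])
raw_bytes = len(week1) + len(week2)
stored_bytes = sum(len(block) for block in store.values())

print(raw_bytes, stored_bytes, len(store))
```

The second full adds only its one changed block to the store, which is why weekly fulls that are mostly identical cost so little extra space on a dedupe appliance.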

You might ask yourself, “Why do I need to replicate backups offsite if I have Veeam replicating my VMs to a DR site?” That is a whole different post in itself, but I will leave you with this scenario. If you have a disaster and lose your main site and your main Data Domain appliance, you would fire up your Veeam-replicated VMs at the DR site and get back up and running. But what happens when the CFO or CEO comes to you and says, “I need these files from 2 weeks ago”? That is where a second Data Domain at your DR site, holding a copy of the last month or two of Veeam backups, saves the day.

Good post, Justin. One note: on the repository you want to click the advanced option button and select the “decompress” and “align” options as well.

Justin, any idea what % of de-dup you are getting on Veeam backups? This is something we might look into.

Good day,

One thing to remember is that Data Domain is in-line dedupe (like the Dell DR4000.)

Other options – like Exagrid (who Veeam are partnered with), Falconstor, NetApp – are post-process dedupe.

I’d recommend checking out the pros and cons of in-line v post-process dedupe, and weighing these up against your use case, before making a decision.

Cheers!

VCosonok

PS Don’t get me wrong, Data Domain is an excellent product :-). This comment only serves to heighten awareness of other possibilities.

I have worked with Veeam and Exagrid before (and done several posts). The biggest thing I do not like about Exagrid with Veeam is that, because it is post-process dedupe and has a “Landing Zone”, it must use that landing zone when it creates synthetic full backups.

So if your Exagrid is not 2x the size of a full backup, you can run into problems where Veeam will get almost finished creating the full backup and Exagrid will tell Veeam that it is out of space (because the landing zone is full). That happens because it is holding all the old data from the last full backup that it had to rehydrate, as well as the data created for the new full backup.

I worked very closely with Exagrid when they initially released their support for veeam, so much so that they sent me 2 units to use in my lab for several months to do that testing.

I would love to see Veeam build OST support into their product and then DD BOOST could be leveraged, along with other de-dupe HW appliances that support OST. Have you ever heard anything from the Veeam folks on this?

I'll ask, but I haven't heard anything like that in the Beta user forum.

Can you give any recommendations about the replication to the other Data Domain appliance?

What type of recommendation are you looking for ?

Our installation has 1 VNXe3100 (1 dpe, 1 dae) in our production environment, and 1 VNXe3100 (1 dpe) to serve as DR and possibly production for another location. Currently I’m struggling with coming up with an effective backup, replication, and DR strategy. What I have in place currently is sufficient but far from ideal. I currently backup with Veeam, and use SAN replication (setup in Unisphere) to replicate the backup datastore. I have the VNXe3100s in separate buildings but they are too close, the backup/DR VNXe3100 needs to go the DR location soon! The only reason they are so close is that the buildings are connected via 1 Gb fiber, when the backup/DR VNXe3100 goes to the DR location they will be connected via 10 Mb metro-e.

Currently I have 2 problems to solve:

1. Veeam is backing up to the production SAN and is chewing up a lot of space.

2. Replication, SAN replication brings the 10 Mb metro-e connection to a grinding halt, plus I like the idea of using Veeam to replicate VMs in a state where they could be instantly powered on.

Data Domain and Avamar have been proposed, but the testing I have seen using Data Domain was very slow, with no SureBackup. And Avamar is a rip/replace of Veeam; we’ve already invested in Veeam, and Avamar needs iSCSI datastores to do quiescing.

I’m thinking about exagrid which would solve eating up production storage, and I think 1 exagrid in our primary location would suffice since the Backup/DR VNXe3100 has 12 2TB nl-sas drives – way more space than production. But the replication piece still has me stuck – I can replicate the VMs with Veeam, but I like the idea of having our backups replicated as well…

Any thoughts?

Exagrid is much more expensive than a Data Domain, and Veeam is a little trickier on Exagrid as well. Search for Exagrid on here, I have done articles about it… basically the landing pad needs to be 2x the size of your full backups.

When you said the Data Domain was slow, what do you define as slow? Obviously deduped storage is going to be slower than running to an uncompressed SAN, but that trade-off is usually welcomed as long as you are saving space and bandwidth.

I have noticed that the DD160 is at about 100% CPU when getting over 80-90MB/s from Veeam, but a DD620 is only at about 30-50% when getting over 150MB/s from Veeam, utilizing multiple backup proxies and multiple repositories running across multiple interfaces on the Data Domain.

if you want you can email me at [email protected] and we can talk more.

Replying to Josh Roundtree’s comment:

This is why you need to weigh up whether you want post-process or inline dedupe. The problem with DataDomain (inline) is that it goes straight to dedupe, so forget using instant recovery or surebackup because it takes an absolute age to re-hydrate; whereas with the Exagrid (post-process) your latest backups are still undeduped in the staging area, waiting for the dedupe cycle to kick-in.

Of course, a way to get around the above problem with the DataDomain is to back up to an undeduped location first (that is, a staging area on another device) and then copy backups across to the DataDomain box, i.e. disk to disk to deduped-disk. But this adds an extra step to the process that you can avoid with the Exagrid.

Another advantage of the Exagrid is that your rate of ingest is not held up by the device having to calculate dedupe inline, it just dumps the data to the staging area and then runs its dedupe algorithms later.

Exagrid, FalconStor, NetApp, DataDomain, Dell DR4000, … , all do site-to-site replication of deduped backups (also many-to-one.)

Thanks for the perspective VCosonok, Justin said that he had some success using instant recovery and sure backup on the Data Domain, but it depended heavily on the workload.

The DD620 (the most common one I work with) has no problems deduping data in-line without being the bottleneck. CPU usage maxes out around 60-75% while I'm flooding two 1Gbps ports with data from multiple Veeam proxies. Also, I would disagree that SureBackup is unusable. Obviously it's not as good as a SAN, but it's acceptable in most cases.

Also, you should know that the Exagrid does clean that landing pad out pretty regularly, so you still run into problems running instant recovery from an Exagrid. After spending much time with Exagrid engineers, my understanding is that the Exagrid watches for extended periods of idle I/O time on its shares; when it sees that, it assumes the backup job is complete and starts its dedupe process. So if your backup job finishes at, say, 2am… it will have that data in the deduped storage zone by the middle of the next day.

You can verify this by checking the green/orange bar on the webpage… it actively tries to keep the landing zone free… if it didn't, it would be screwed the next night when your new data comes in, as it would have all that old data sitting there and be like… shit, we got more stuff coming in… hurry and dedupe the old stuff (which in a way would make it an inline process, as it would be deduping while new data was ingested).

So now that you know that, think about how Veeam works… instant recovery uses whatever incremental you need as well as a full backup… but wait, that full backup is already deduped… so it has to undedupe it on the fly… which, I might add, takes up landing zone space… so if you have to do a restore while your backups are running, you had better hope that your landing zone is much, much bigger than your backup size. Otherwise, when the Exagrid starts to hit its high water mark on the landing zone it will slow I/O, hoping it can catch up… and eventually it will just give up and start reporting a full disk to Veeam.

I have experienced this first hand.

Thanks for the point of view Justin, always good to hear real-world results!

For more information you will want to see the case study article I did here: http://jpaul.me/?p=3149

Hello all,

Very interesting paper and discussion.

And what about Veeam dedup?

I’m currently reviewing vendors’ offers, and two main solutions are being offered to me:

One vendor says Veeam dedup is great, so you don’t need a Data Domain for storing VM backups, and proposes NetApp FAS storage for this (which I could also use to dedup other backups for physical servers, made by another backup tool).

Another vendor proposes Data Domain.

Of course the price is not the same, but what are the pros/cons of each solution?

Thanks in advance for your view on this.