Background

“BOB’S BAIT AND TACKLE” was running VMware 4.1 in a mostly HP environment: their SAN was an HP EVA4400, and the VMware hosts were HP blades. For backup they were using HP Data Protector software along with an HP tape library, which was no longer able to meet their backup window requirements.

With a VMware upgrade on the horizon and SAN space dwindling, “BOB’S BAIT AND TACKLE” started looking for a replacement for their backup and storage environment.

The Problems

“BOB’S BAIT AND TACKLE”’s existing infrastructure was limited to approximately 10TB of SAN storage (they store a lot of secret customer info or fish pictures I guess), and backups were limited to the speed of their tape autoloader and backup server. Because of this, full backups of their Exchange environment required the entire weekend to run (time that could be spent fishing), and other servers also took many hours to back up.

Their plans to migrate to a new ERP solution also had to be pushed back because of a lack of free storage on their HP EVA4400 SAN.

The Solution

The IT team proposed replacing their storage environment with a new EMC VNX5300 Fibre Channel SAN, and replacing the tape library and HP Data Protector with a pair of Data Domain DD620s and Veeam Backup software.

The VNX5300 would include over 45TB of raw capacity, which would give “BOB’S BAIT AND TACKLE” years of growth (and plenty of room to store fishing pictures). It also included advanced features such as Enterprise Flash Drives (EFDs, also known as SSDs) and the EMC FAST Suite, which allows data to move automatically between tiers of disk for the best performance and cost per gigabyte.

The Data Domain DD620 boxes were chosen because “BOB’S BAIT AND TACKLE” wanted to reduce or eliminate the use of tape storage if possible. To facilitate the decommissioning of tape while maintaining an offsite backup, “BOB’S BAIT AND TACKLE” selected a second site across town which would be connected with dedicated fiber where they would locate their sister Data Domain appliance.

Veeam was chosen as the backup software for “BOB’S BAIT AND TACKLE” because almost all of their servers are now virtualized, and the handful of physical machines that remain are scheduled to be virtualized in the future (they went on a fishing trip and didn’t have time to finish I guess). Veeam also integrates tightly with VMware to provide quicker backups of virtual machines by leveraging VADP (the vStorage APIs for Data Protection), and it places less load on the virtual infrastructure as a whole than legacy backup applications that introduce agents into the guest operating system.

Results

With the upgrades complete, “BOB’S BAIT AND TACKLE” is now able to back up not just one of the Exchange DAG members but all of the servers that help provide Exchange services, including front-end servers and MTA servers, all in less than 8 hours. Incremental backups have also been reduced to approximately 2 hours.

Instead of a single backup server and tape library, Veeam and Data Domain provide multiple paths for backup data to flow through. On the Veeam side we have implemented three Veeam proxy servers, and on the Data Domain side we have two CIFS shares, each attached to its own gigabit Ethernet port. This allows many backup jobs to run in parallel without any one job’s performance suffering the way it would on a single shared path.
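To put the multiple-path design in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes each gigabit port moves at most 125 MB/s (pure line rate, ignoring CIFS and TCP overhead, so real-world numbers will be lower) and it uses the 1.7TB full backup size reported later in this post. The function name `min_transfer_hours` is made up for illustration.

```python
# Assumption: 1 Gbps = 125 MB/s at line rate, with no protocol overhead.
GIGABIT_MB_PER_S = 125.0


def min_transfer_hours(data_tb: float, links: int) -> float:
    """Best-case hours to move `data_tb` (decimal TB) over `links` gigabit ports."""
    data_mb = data_tb * 1_000_000          # decimal TB -> MB
    seconds = data_mb / (links * GIGABIT_MB_PER_S)
    return seconds / 3600


full_backup_tb = 1.7  # compressed full backup size quoted in this post
for links in (1, 2):
    hours = min_transfer_hours(full_backup_tb, links)
    print(f"{links} gigabit link(s): at least {hours:.1f} hours")
# 1 gigabit link(s): at least 3.8 hours
# 2 gigabit link(s): at least 1.9 hours
```

This is only a lower bound on transfer time, not a prediction. It does show why a second port matters when several jobs run at once.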

All of these technologies, combined with an optimized configuration by the IT team, have led to a shorter backup window and much improved RPO and RTO. The solution also has the ability to add as much as 4TB more to the Data Domain boxes to allow for an extended backup retention time in the future.

Real World Data

While most marketing documents show you averages and numbers that are sometimes questionable, this post will use real numbers taken directly from “BOB’S BAIT AND TACKLE”’s environment.

First let’s look at the raw requirements. The amount of VMware data that Veeam is protecting is approximately 3.7TB, and a full backup, after Veeam compresses and dedupes it, is 1.7TB. The graphs below show the first 30 days of activity for the Data Domain. In that time all Veeam backup files (VBK and VIB) add up to 4.65TB, which is the space they would consume on a device without compression or deduplication. However, the actual amount of space used on the Data Domain is 2.86TB, a savings of 1.79TB in just 30 days. That is roughly a 1.6x reduction, or about 38% less space!
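For anyone who wants to check the savings arithmetic, here is a minimal Python sketch. The two inputs are the 4.65TB and 2.86TB figures quoted above; the function name `dedupe_summary` is made up for illustration.

```python
def dedupe_summary(logical_tb: float, stored_tb: float) -> dict:
    """Return space saved, reduction factor, and percent saved."""
    saved_tb = logical_tb - stored_tb
    return {
        "saved_tb": saved_tb,
        "reduction_factor": logical_tb / stored_tb,
        "percent_saved": 100.0 * saved_tb / logical_tb,
    }


# 4.65 TB sent by Veeam vs 2.86 TB actually used on the Data Domain.
summary = dedupe_summary(logical_tb=4.65, stored_tb=2.86)
print(f"saved: {summary['saved_tb']:.2f} TB")              # saved: 1.79 TB
print(f"reduction: {summary['reduction_factor']:.2f}x")    # reduction: 1.63x
print(f"percent saved: {summary['percent_saved']:.1f}%")   # percent saved: 38.5%
```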

A picture is worth 1000 words

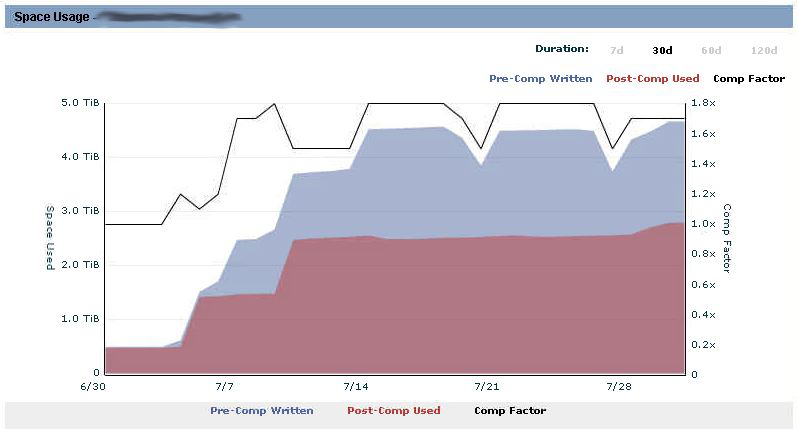

Figure 1: Space Usage

This graph shows three things: compression factor (noted by the black line), the amount of data that Veeam is sending the DD appliance (noted by the blue area), and the amount of data that is actually written to disk after the DD appliance deduplicates and compresses it (noted by the red area).

As you can see, for the first couple of days there is almost no benefit from deduplication or compression on the Data Domain side. The red and blue areas start to separate around day 7, when a second full backup is taken. Normally we would expect the space used to grow slowly rather than in a step pattern. However, because they started backing up virtual machines as they were migrated to the new SAN, we see large jumps at the beginning of the graph: after the first week of migrations we started those backups, then after week two’s migrations we started those backups, hence the step pattern.

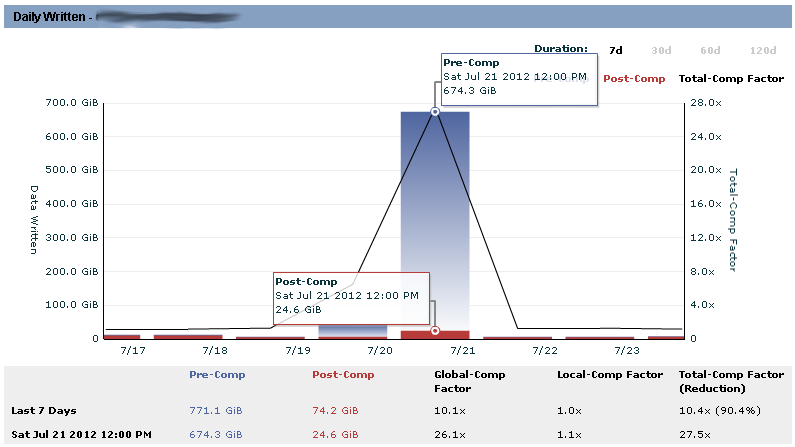

Figure 2: Daily Amount Written (7 days)

This graph shows the total amount of data that is ingested by the Data Domain (denoted by the total height of the bar) as well as the amount written to disk (denoted by the red bar height). The height of the blue area in the bar is the amount of data that was ingested, but considered to be duplicates of data already on the appliance.

The interesting thing to note here is the difference between the amount of data that Veeam sends to the DD appliance on a full backup day (Saturdays by default) and the amount of data that is actually written to disk. In this example Veeam sent almost 675GB, and it consumed only about 27GB on the Data Domain. This is where you see the savings of a dedupe appliance.
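To make the full-backup-day numbers concrete, this small Python sketch splits one bar of the graph into its red and blue parts. It uses the approximate 675GB and 27GB values quoted above; the function name `split_daily_bar` is made up for illustration.

```python
def split_daily_bar(ingested_gb: float, written_gb: float) -> tuple[float, float]:
    """Return (duplicate_gb, ingest_to_write_ratio) for one day's bar.

    duplicate_gb is the blue area: data ingested but already on the appliance.
    written_gb is the red area: data actually written to disk.
    """
    duplicate_gb = ingested_gb - written_gb
    ratio = ingested_gb / written_gb
    return duplicate_gb, ratio


duplicate_gb, ratio = split_daily_bar(ingested_gb=675, written_gb=27)
print(f"duplicates skipped: {duplicate_gb} GB")   # duplicates skipped: 648 GB
print(f"ingested vs written: {ratio:.0f}x")       # ingested vs written: 25x
```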

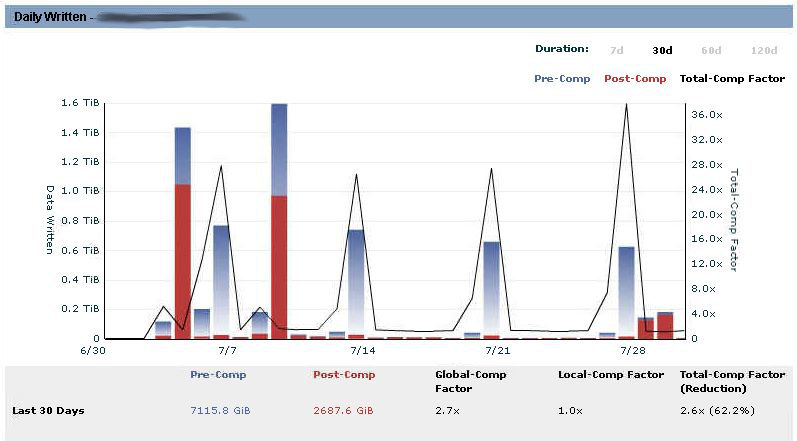

Figure 3: Daily Amount Written (30 days)

This graph shows the same data as Figure 2, but as a 30-day view instead of a 7-day view. You can see that at the beginning of the backups we were ingesting large amounts of data (total bar height) and also writing a large amount to disk (red area of the bar). This is because the Data Domain was seeing a lot of new data that it had not seen before; over time the red bars get smaller and smaller while the blue bars stay the same or get larger. As a side note, the last two samples on the right show a much larger red area than normal. This is because new machines containing unique data were added to the backup jobs. It is a one-time occurrence, and by the next backup we will see much lower rates again.

Conclusion

The combination of Data Domain storage and Veeam Backup software is a near perfect combination for protecting VMware virtual machines. “BOB’S BAIT AND TACKLE” is now able to get good backups without having large backup windows; they are also able to replicate those backups offsite with minimal bandwidth usage and will eliminate the need for tapes once the remaining servers have been virtualized.

Overall “BOB’S BAIT AND TACKLE” should expect to see about an 8 week retention period with the Data Domain configuration today, but they could expect to see as much as a 60 week retention period if the existing appliances are upgraded to their maximum capacity of 12 drives.

Without the Data Domain, Veeam would require us to provide 7TB of storage to hold 8 weeks of backups for this customer. If we were to upgrade the DD620 to the 12-drive configuration we could store 60 weeks. Doing that with normal storage would require 48TB of disk space, instead of 8TB of Data Domain storage.

Price Comparison

Let’s compare prices between a DD620 with the maximum drive configuration and a VNX5300 file-only SAN with enough drive space to hold the 48TB of Veeam data mentioned earlier. Please note that all pricing here is list price.

Data Domain with max drive configuration: $40,433 per site

VNX 5300 File only SAN with ~48TB usable: $74,227 per site

So the Data Domain is about $34,000 cheaper per site just for the hardware investment. The Data Domain also requires only 2U of rack space whereas the VNX requires 11U, so if you have to co-locate your DR box at a datacenter there will be more cost there. And if you are powering it yourself, spinning 45 drives compared to 12 will definitely cost you more.
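The comparison boils down to a few subtractions, sketched here in Python. All inputs are the list prices, rack units, and drive counts quoted above; the variable names are made up for illustration, and this ignores power, cooling, and support contracts.

```python
# List price per site in USD, as quoted in this post.
DD_PRICE, VNX_PRICE = 40_433, 74_227
# Rack units and drive counts for each option.
DD_RACK_U, VNX_RACK_U = 2, 11
DD_DRIVES, VNX_DRIVES = 12, 45

price_gap = VNX_PRICE - DD_PRICE
percent_cheaper = 100.0 * price_gap / VNX_PRICE
rack_gap = VNX_RACK_U - DD_RACK_U
drive_gap = VNX_DRIVES - DD_DRIVES

print(f"price difference per site: ${price_gap:,}")       # $33,794
print(f"Data Domain is {percent_cheaper:.1f}% cheaper")    # 45.5% cheaper
print(f"rack space saved: {rack_gap}U")                    # 9U
print(f"fewer drives to power: {drive_gap}")               # 33
```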

Cool information. Any numbers regarding restore?

Great article Justin, and a solid solution. Have you got any metrics on restore performance? I guess that’s why one backs up in the first place!

Cheers.

I will test this as soon as possible. Look for those results around August 10th or so.

From a cost perspective – how much would 48TB of Sata based Storage compare with the DD Solution ?

Just did a quick hunt and 48TB of NAS in 3u was about $13,000.

I will update the post with that information.

did you use the CIFS server on the DataDomain box or do you have some sort of proxy between the Veeam Proxy and the DataDomain?

Yes, I’m using CIFS. I saw that one of the setup guides says Veeam recommends a Linux box so that you can do NFS from the DD to Linux and use the Linux box as a target, but I don’t think many people will put that extra step in there.

Hi Justin, thanks for the information on Veeam and Data Domain! This is really helpful. We use both as well. I was wondering, what configuration options do you specify for the backup jobs? I remember reading somewhere that with Data Domain it’s generally best to use forward incrementals with a periodic active full, as well as disable Veeam inline dedupe and compression.

That’s how we have things set up at the moment, but I just started testing Surebackup and it’s terribly slow. Was wondering if adjusting these settings might help at all. Have you tried variations to determine what works best for you?

Thanks,

-Loren

Hey Loren, thanks for reading!

You are correct on the Veeam job settings, that is exactly how I configure jobs as well.

What model of DD do you guys have ? One of the drawbacks of using dedupe appliances is slower re-hydration speeds. Let me know what kind of box you have as well as how many VM’s are in your surebackup test.

I’ve been testing the settings with an old DD610. We have DD630s, 670s, and 690s in production environments. Still on Veeam 6.1, though starting to test 6.5. I was looking around for others’ thoughts today and saw the post below from Tom Sightler, mentioning that enabling Veeam dedupe and/or compression may actually improve Veeam restores and surebackup speeds.

https://forums.veeam.com/viewtopic.php?f=2&t=8916&sid=ad8a736c74ee77d4beee78101d319ac1&start=45#p64735

Loren ,

The problem is that a Data Domain isn’t really designed for random read I/O, which is what you do when trying to run a VM directly from the backup. All of the read-ahead caching that the DD tries to do isn’t going to help unless you have a sequential data stream. In that respect, a conventional restore should run a little smoother. If you use Veeam dedupe, which works at quite a large block size compared with the DD, along with our dedupe-friendly compression setting (called “low” in 6.1), then there is a little less data for the DD to work with and more is done at the Veeam proxy end. Of course the reported deduplication rates from the DD will be lower, but it’s working with more optimized data. It depends on whether your end goal is to report the highest possible dedupe rate from the DD, or to get the best backup/restore performance 😉

Chris,

Can you elaborate a little more? ALL of your manuals say to turn off all the Veeam compression and dedupe if using any dedupe appliance. Let’s say that I don’t care about the advertised ratio of the dedupe appliance: what are the best settings to use in terms of restore performance and Surebackup?

I’m with Justin. My goal is the best possible *restore* performance. What settings would maximize that?

Thanks,

-Loren

Using a little Veeam dedupe combined with the low compression setting moves some of the load to the agent where vPower is running from. Getting that agent to run as fast as possible is a matter of CPU and RAM; the more RAM the better. I would encourage you not to take my view (or any guide) as gospel, and to try it for yourself in your environment.

I wish I had such time. I have over a dozen environments to support and slightly different hardware at each of them, and testing all the combinations of Veeam options and job types would result in many dozens of tests. I’d need to dedicate several months to get every environment so optimized. That just isn’t possible. And backup engineer/administrator is an additional duty for me, rather than a dedicated gig. Rules of thumb are needed.

Anyway, I’ll do what I can in our lab to try to understand the impact of the various options more thoroughly. I have 8 jobs configured now: 2 with dedupe+low compression+local target, 2 with dedupe+no compression+local target, 2 with dedupe+low compression+LAN target, and 2 with dedupe+no compression+LAN target. For each, the repository is a CIFS share on a DD610.

I’ll let that run for the next week to get some good samples. The one thing I can confirm right now is that it is no joke that the dedupe option will slow down incremental backups. Active fulls are roughly the same, but forward incrementals are 1/3 as fast as they were without dedupe. The reason seems to be that enabling dedupe results in significant reads on the disks, at least according to the DD stats interface. I haven’t tested restores yet, but performance improvements there would be worth sacrificing backup performance.

We did just get a DD630 in the lab, as well, so I’ll duplicate the tests there to see if the results are consistent or just a factor of bottlenecking on the DD610. And I’m setting up a Linux VM to use as a repository to test another recommendation to mount the DD via NFS rather than use CIFS.

Enabling our dedupe shouldn’t result in that level of reads. Reverse incrementals, sure, but not dedupe. We hold the hash table in RAM on the proxy acting as the repository agent for CIFS, so when a duplicate hashed block is seen we should just write a pointer. I will ask around today (I’m at our kickoff in Russia this week) and confirm.

Very interested in the results of the tests here! Looking at introducing a couple of DD 620s into a Veeam environment and would like to see what gets the best results.

The DD610 was so slow, regardless of the selected backup job settings, that I gave up on using it. I moved everything over to the new DD630. At first, when there was only 1 VM backing up to it, it was very promising. But now, at 40% utilization, it’s not sufficiently faster than the DD610.

When de-dupe and compression are disabled, it takes over 20 minutes for a VM to power on. That’s too long for many services, including the VMware Tools and networking services, so Surebackup jobs never realize the VM is running. It also takes over 10 minutes just to log in to the VM.

Enabling de-dupe and compression does help, but not enough. It takes roughly 12 minutes for the VM to power on, which still isn’t fast enough for those services to start properly, so Surebackup still fails. However, it only took a couple of minutes to log in to the VM, and it was somewhat usable at that point. Enabling these options also has a significant impact on backup performance, with incremental backup processing speed reduced by 33-66% on the DD630. That’s something that will have to be considered when deciding whether to implement de-dupe and compression to improve Instant Restore and Surebackup performance.

Thanks for sharing Loren!

I did a little more testing, and it’s primarily the Veeam de-dupe option that drastically reduces the backup speed to the DataDomain. Just enabling compression does not impact backup speed to nearly the same degree. Processing speed without dedupe: 230MB/s. With dedupe: 30MB/s. The difference is rather frightening.

I’m not sure if compression alone will significantly improve restore times. I’ve disabled de-dupe on all my backup jobs and am now testing combinations of compression levels and block sizes. I’ll let that run for a week or so and then attempt some IR and Surebackup jobs and then report back.

Are those the speeds as reported by Veeam or by the DD? With our dedupe, there is some I/O that will go to the back end to read in the hash table, but this should only be a max of 1.5GB or so. Thanks for sharing the results of your testing; it does seem to align a little with some other work I’ve seen in terms of all-out write speed to the DD.

Moving forward, have you considered a hybrid solution? I’ve customers who are currently deploying a local DAS tier (sized a little larger than a full backup) to hold the last backup+1 for Surebackup / IVMR, with the Data Domain / dedupe solution utilized for longer-term retention.

Those are the Veeam-reported processing speeds. I know that includes a lot of overhead processing and is not actual network throughput, but I was hoping that it would be an apples-to-apples comparison.

I am considering a hybrid solution now. 🙂 Though I’d like to see better support for it built into the Veeam jobs and scheduler. That’s the basis for my recent comment on the Son, Father, Grandfather thread in the Veeam forums.

http://forums.veeam.com/viewtopic.php?f=2&t=9300&p=73414&hilit=grandfather#p73414

i totally agree veeam needs to build in an “archive to” destination and a setting that allows you to tell it how many restore points to keep on tier1 before offloading to tier 2

When using Veeam with DD’s, do people typically create multiple repositories, or just use one for the entire DD?

Hi Justin,

Do you backup directly onto the Data Domain? or do you use the storage on the VNX as a staging/short term backup area and then archive onto the Data Domain?

If it is the former, how is the performance during restores and synthetic full backups as these are very IO intensive activities which would also be affected by the dedupe rehydration delay.

If it is the latter, can you share how you configured this in Veeam? Is it through a combination of backup jobs and backup COPY jobs (thru the GFS features in Veeam 7)?

Thanks for any insight.

In the past I have just pointed the jobs directly to the DD. In some cases I will tell it NOT to do a synthetic full, and just let it do regular full backups since the Data Domain will dedupe them. In fact, if I recall, that is how the best practice guide tells you to do it (dedupe appliance vendors want you sending as much data as possible, otherwise their numbers look bad 🙂).

Anyhow, let me look at the way you suggest doing it with the backup copy jobs and I’ll see how it works. I have honestly not messed around too much with v7.

“Yes, I’m using CIFS. I saw that one of the setup guides says Veeam recommends a Linux box so that you can do NFS from the DD to Linux and use the Linux box as a target, but I don’t think many people will put that extra step in there.”

Justin, we actually *had* to move from CIFS to NFS due to the extremely poor network performance of CIFS. CIFS was getting us about 150-200 Mbps on 1 Gbps ethernet if we were lucky. Initially I thought DD was a dog, however, after building out an Ubuntu NFS broker and configuring it per EMC documentation we are now getting full 1 Gbps saturation! It’s truly worth the small effort. A word of caution though: if the NFS broker is up but DD is down and Veeam kicks off a job you’ll be in trouble as the Veeam job will write directly to the NFS broker mount point directory rather than brokering to the DD box.

“… if the NFS broker is up but DD is down and Veeam kicks off a job you’ll be in trouble as the Veeam job will write directly to the NFS broker mount point directory rather than brokering to the DD box.”

Regarding the Linux NFS mount point, a solution I found is to change attributes on your NFS mount directory after you create it, but before it’s mounted:

Example: using /mnt/dd/nfsbk as the Linux NFS broker’s mount point to DataDomain NFS Share:

mkdir /mnt/dd/nfsbk

chattr +i /mnt/dd/nfsbk

This will prevent Veeam from writing to the NFS repository in the event DataDomain is down and unmounted from the NFS broker. Veeam jobs will error out rather than writing to the local unmounted directory.
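As a complementary safety net, a small pre-job check on the NFS broker could confirm that something is actually mounted at that path before any backups run. This is only a sketch in Python: the path is the example one from this comment, the function name `repository_is_mounted` is made up, and how you would hook it into your job scheduling is left open.

```python
import os

# Example mount point from the comment above; adjust for your own broker.
DD_MOUNT_POINT = "/mnt/dd/nfsbk"


def repository_is_mounted(path: str) -> bool:
    """Return True only if `path` is currently an active mount point."""
    return os.path.ismount(path)


if __name__ == "__main__":
    if repository_is_mounted(DD_MOUNT_POINT):
        print(f"{DD_MOUNT_POINT} is mounted; OK to run backup jobs")
    else:
        print(f"{DD_MOUNT_POINT} is NOT mounted; do not run backup jobs")
```

Note that `os.path.ismount` only tells you that a filesystem is mounted at the path, not that it is the Data Domain share, so the `chattr +i` trick above is still worth keeping.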