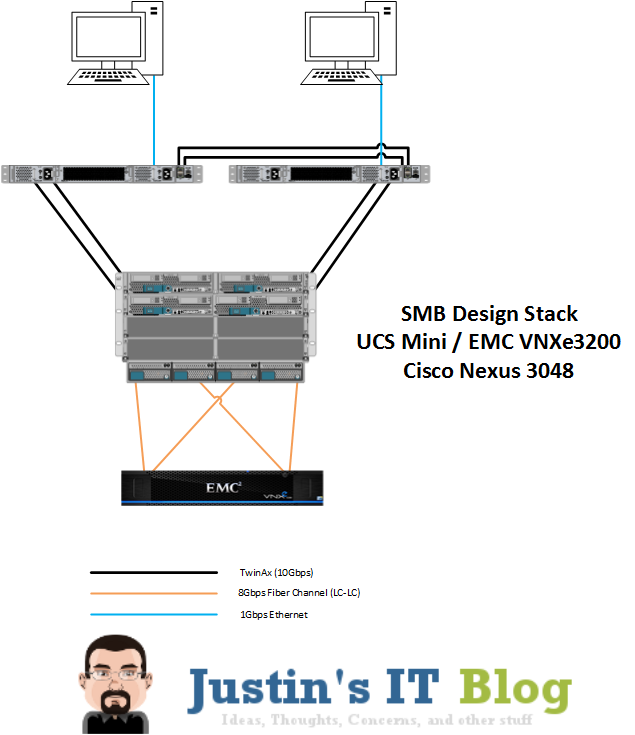

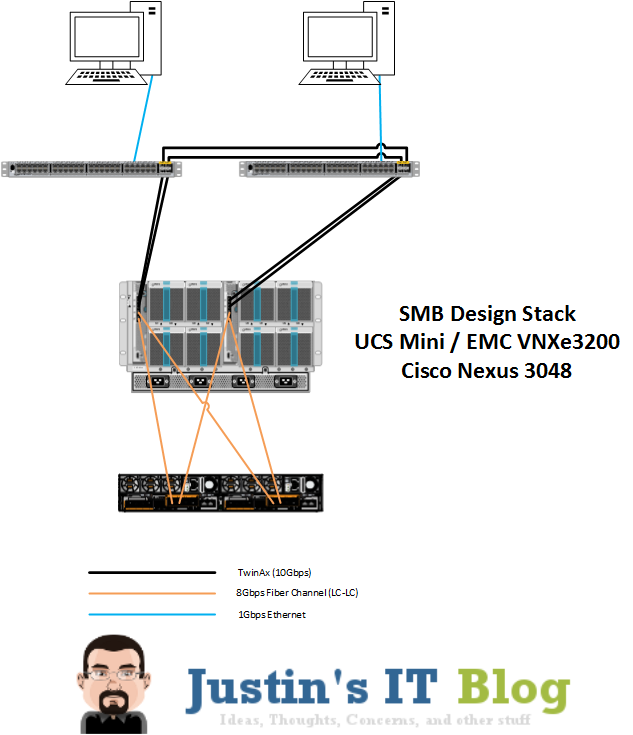

Update: In an attempt to get this post up quickly, I totally missed that I had the UCS chassis connected to the Nexus switches incorrectly, so I’ve updated the design with the proper wiring and swapped the VNXe stencil out for the correct one. Also, clicking the design image will take you to the new PDF, which contains both the front and rear views of the design.

I’ve done a few “SMB” architecture posts before; most of them, however, used regular rack-mount servers and 1Gbps networking. Now that the UCS Mini chassis is orderable, I thought we’d better take another look at SMB design and see how we could incorporate it. For more information on what the UCS Mini is, check out my post on that here.

What’s the goal?

So the purpose of this post is simple: design an SMB-friendly architecture that won’t break the bank but will also minimize bottlenecks all the way to the access layer. Many of the components in this design can be swapped out for other vendors’ products, with the exception of the UCS system. The SAN, the Ethernet switching, and (god forbid…) the hypervisor could all be exchanged for something else.

What will it look like?

A picture is worth 1000 words:

What hardware are you using?

OK, well, let’s start at the top and work our way down; along the way I’ll point out what options you have as well.

1.) Ethernet Switches – Two Cisco Nexus 3048 switches – These switches provide 48 ports of 1Gbps copper connectivity, and they also have 4 SFP+ ports, which will allow us to link into the UCS Mini with two 10Gbps TwinAx cables on each “side”. This meets the requirement of providing 10Gbps connectivity from the servers to the access layer. However, if you need PoE for phones you will be out of luck with the Nexus 3048s. When PoE is required I would recommend the Cisco 3850 series of switches; just don’t forget the 2-port 10Gbps module for each of them. Alternatively, if you are not a Cisco switch fan, you could swap the switches out for anything with at least four 10Gbps ports PER SWITCH (two for the links between the switches unless a backplane is available, and two for the downlinks to the UCS Mini).

2.) Compute – Cisco UCS with 6324 Fabric Interconnects (aka UCS Mini) – This is the heart of the design. It allows us to scale from 1 to 8 blades while integrating the Fabric Interconnects into the chassis itself. This is also the only part of the solution that I wouldn’t swap out. Inside the 5108 chassis we have room for 8 half-width blades or 4 full-width blades, and on the back we have what used to be the IO Module slots. What makes the UCS Mini the “mini” is that Cisco took the technology in the 6200 series Fabric Interconnects and shrank it down to the size of an IO Module. The only drawback is that you can only have one chassis with this design… but for our SMB design, that isn’t a problem. Each 6324 Fabric Interconnect module gives us 5 connections: 1 for out-of-band management (think UCS Manager) and 4 SFP+ ports. Two of the SFP+ ports we will cable northbound to the Nexus 3048s; these connections provide connectivity to the access layer. The other two SFP+ ports will get Fibre Channel SFPs installed and connect southbound to the SAN storage unit.

3.) Storage – EMC VNXe3200 – You’re going to hear me talk a lot about this box over the next 8 months, for many different reasons: mainly because EMC sent me a VNXe3200 to mess with in my lab, but also because just reading the spec sheet on this thing leads me to believe that it is the box SMBs have been waiting for. To keep it short… think VNX (or hell, even VMAX) features at an affordable price point. By default it comes with 1/10Gbps Ethernet connectivity, but for this project we will add the Fibre Channel module. You’re probably thinking “Why Fibre Channel if 10Gig is already in the box?” Well… I asked myself that too. The answer: the VNXe’s built-in 10Gbps ports are copper (UTP), while the 10Gbps ports on the 6324 and the Nexus 3048 are SFP+, and 10Gbps copper SFP+ modules don’t exist (at least I couldn’t find any). So we have a few options: 1.) use Fibre Channel; 2.) put our storage on 1Gbps ports; 3.) switch to a non-Cisco switch that has 10Gbps UTP ports. The easiest decision was to just go with Fibre Channel and pay the extra cash for the module in the SAN.
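To make the port math from the hardware list concrete, here is a minimal Python sketch that tallies SFP+ port usage per box. It assumes each FI’s two Ethernet uplinks go to a single Nexus 3048 (FI-A to switch A, FI-B to switch B), which is how a reader comment below describes the diagram; the `Device` class, constants, and side labels are hypothetical names for illustration, not anything from Cisco tooling.

```python
from dataclasses import dataclass, field

UPLINKS_PER_FI = 2   # 10Gbps TwinAx uplinks from each 6324 to its Nexus 3048
FC_PORTS_PER_FI = 2  # SFP+ ports fitted with Fibre Channel SFPs for the VNXe3200
ISL_PER_SWITCH = 2   # links between the two switches (no shared backplane)
LINK_GBPS = 10


@dataclass
class Device:
    """A box with a fixed number of SFP+ ports and what each group of ports is used for."""
    name: str
    sfp_ports: int
    allocations: dict[str, int] = field(default_factory=dict)

    def allocate(self, purpose: str, count: int) -> None:
        self.allocations[purpose] = self.allocations.get(purpose, 0) + count

    def used(self) -> int:
        return sum(self.allocations.values())

    def fits(self) -> bool:
        return self.used() <= self.sfp_ports


def build_design() -> list[Device]:
    """Build the per-side port plan; side B mirrors side A."""
    devices = []
    for side in ("A", "B"):
        switch = Device(f"Nexus 3048-{side}", sfp_ports=4)
        switch.allocate("links to the other switch", ISL_PER_SWITCH)
        switch.allocate(f"downlinks to FI-{side}", UPLINKS_PER_FI)

        fi = Device(f"6324 FI-{side}", sfp_ports=4)
        fi.allocate(f"uplinks to Nexus 3048-{side}", UPLINKS_PER_FI)
        fi.allocate("Fibre Channel to VNXe3200", FC_PORTS_PER_FI)
        devices.extend([switch, fi])
    return devices


if __name__ == "__main__":
    for d in build_design():
        status = "OK" if d.fits() else "OVER BUDGET"
        print(f"{d.name}: {d.used()}/{d.sfp_ports} SFP+ ports ({status})")
    # Northbound Ethernet per fabric = uplinks per FI x link speed
    print(f"Ethernet per fabric: {UPLINKS_PER_FI * LINK_GBPS} Gbps")
```

Every box comes out at 4 of 4 SFP+ ports used, which is why a replacement switch needs at least four 10Gbps ports; setting `ISL_PER_SWITCH` to 0 models a switch pair that shares a backplane.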

So what does it cost?

Well, as with all things, it depends on how you configure it. But let’s take a high-level look, and while we do… keep one thing in mind… NO ONE pays list price for Cisco gear (however, if you would like to… please contact me ASAP, as I’m sure one of the sales guys I work with would love to meet you).

Cisco Networking:

Nexus 3048 switching was around $26,000 list price for the pair.

Cisco Compute:

UCS Mini bundles start at about $39K for a two-blade bundle and about $59K for a four-blade bundle. The bundle includes everything from the chassis to the blades to the Fabric Interconnects. Depending on the bundle you go with, you will start out with 64GB of RAM in the two-blade bundle, while the four-blade bundle comes with 128GB of RAM per blade.

EMC Storage:

Lastly, the storage portion. Again, this really depends on how things are configured. If you want to see the bundles, head over to the VNXe store. The entry-level list price is $11,500… however, for a “real world” BOM… most of the ones I have been working on are around $20K to $50K list price (depending on the amount of storage and other options).
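Adding up the list prices above gives a rough envelope for the whole stack. This is just arithmetic on the figures quoted in this post (the dictionary keys like “real-world high” are hypothetical labels), and since nobody pays list, treat the result as a ceiling rather than a quote.

```python
# List prices quoted in this post, in US dollars; actual street prices will be lower.
NEXUS_3048_PAIR = 26_000
UCS_MINI_BUNDLES = {"two-blade": 39_000, "four-blade": 59_000}
VNXE3200_BOMS = {
    "entry": 11_500,          # entry-level list price from the VNXe store
    "real-world low": 20_000,
    "real-world high": 50_000,
}


def list_total(ucs_bundle: str, vnxe_bom: str) -> int:
    """Sum switch, compute, and storage list prices for one configuration."""
    return NEXUS_3048_PAIR + UCS_MINI_BUNDLES[ucs_bundle] + VNXE3200_BOMS[vnxe_bom]


if __name__ == "__main__":
    low = list_total("two-blade", "entry")
    high = list_total("four-blade", "real-world high")
    print(f"List-price range: ${low:,} to ${high:,}")  # List-price range: $76,500 to $135,000
```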

Other thoughts

So while this isn’t going to be for the mom-and-pop SMB, it certainly is a great option for the more “M”-sized ones. Personally, I wouldn’t look at the UCS Mini until you need at least 3-4 blades, or unless you just REALLY REALLY want 10 Gig. Overall this solution is going to work great for a business that needs its small cluster of VMs to be lightning fast for both compute and data access. Once I get the VNXe3200 up and running in my lab, I will update this post with a link to what sort of speeds I am able to get out of it. Same goes for the UCS Mini once I get some hands-on time with it.


Justin, what do you recommend for a UCS Mini setup with NFS using a FAS2554 and two Nexus 9396PX switches? Should I direct-connect the NAS to the FIs or attach it to the upstream Nexus switches? Also, what would you recommend for QoS/vNICs for 4 B200s with VIC 1240s? Create 4 vNICs and do NIOC in vSphere?

I would attach the FAS to the Nexus switches. If you had slow switches upstream I would connect it to the FIs, but you will have plenty of bandwidth from the switches to the chassis.

Do your N9Ks have the 40Gig modules? If so, then I would attach with 40Gig; if not, then I’d attach all four 10Gig ports to the N9Ks and then go from the N9Ks to the FAS.

As far as NICs, I would probably set it up as you described: 2 for traffic and 2 for storage.

Yes, we have the 40Gig modules. So you recommend the following:

-two 40Gig links between the N9Ks in a vPC

-two 10Gig links from each FAS head to the two N9Ks in a vPC

-four 10Gig links from each 6324 FI to the N9Ks?
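A quick tally of raw capacity in that proposed layout shows why attaching the FAS to the N9Ks instead of the FIs should not create a bottleneck. This minimal Python sketch assumes two FAS heads and two 6324 FIs, as the comment implies; the group labels are just descriptive names, and the totals are link capacity on paper, not measured throughput.

```python
# (description, number of links, Gbps per link) for the proposed cabling.
LINK_GROUPS = [
    ("N9K-to-N9K vPC links", 2, 40),
    ("FAS heads to N9Ks", 2 * 2, 10),   # 2 heads x 2 links each
    ("6324 FIs to N9Ks", 2 * 4, 10),    # 2 FIs x 4 links each
]


def aggregate_gbps(groups: list[tuple[str, int, int]]) -> dict[str, int]:
    """Raw capacity per link group: link count x per-link speed."""
    return {name: count * gbps for name, count, gbps in groups}


if __name__ == "__main__":
    for name, total in aggregate_gbps(LINK_GROUPS).items():
        print(f"{name}: {total} Gbps")
```

On paper the chassis gets 80 Gbps of uplink capacity toward the N9Ks against 40 Gbps from the FAS heads, so the switch-to-chassis path has headroom over the storage links.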

Hello,

In my environment I have 8Gb Fibre Channel storage. Do I need to put a VIC 1340/1240 plus a Cisco, QLogic, or Emulex adapter (or a 2204XP I/O module) in my ESXi blades to connect directly to the UCS 6324 fabric?

Hello Justin, why do you have both network uplink ports of FI A linked to the same switch? Shouldn’t they be split across the two northbound switches? Same with FI B.

It depends.

Fabric Interconnects are best utilized if you can put the ports into a port channel. If you have Cisco switches, then yes, run one to each switch, because you can do vPC or Multichassis EtherChannel. However, if you have other switches that cannot do vPC, then you are better off running all Ethernet from one FI to one switch and creating an EtherChannel there… and the same for the other side. If you lose a switch, your Ethernet traffic will just fail over to the other switch (either via hardware failover or via VMware failover).
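The rule of thumb in that reply can be written down as a small decision function. This is a hypothetical Python sketch, not UCS Manager or NX-OS configuration: `SW-1` and `SW-2` are placeholder switch names, and it only models which switch each FI uplink lands on, not the port-channel configuration itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    fi: str      # "FI-A" or "FI-B"
    switch: str  # placeholder switch name


def plan_uplinks(switches_support_vpc: bool, uplinks_per_fi: int = 2) -> dict[str, list[Link]]:
    """Decide where each FI's port-channel members land.

    With vPC / Multichassis EtherChannel, each FI's port channel is spread
    across both switches, so losing one switch only removes some members.
    Without it, each FI's port channel stays on a single switch, and a switch
    failure is handled by failing traffic over to the other fabric
    (hardware failover or VMware failover).
    """
    switches = ["SW-1", "SW-2"]
    plan: dict[str, list[Link]] = {}
    for i, fi in enumerate(["FI-A", "FI-B"]):
        if switches_support_vpc:
            # Alternate port-channel members between the two switches.
            members = [Link(fi, switches[n % 2]) for n in range(uplinks_per_fi)]
        else:
            # All members go to the switch paired with this FI.
            members = [Link(fi, switches[i]) for _ in range(uplinks_per_fi)]
        plan[fi] = members
    return plan


if __name__ == "__main__":
    for vpc in (True, False):
        print(f"vPC capable: {vpc}")
        for fi, links in plan_uplinks(vpc).items():
            print(f"  {fi} -> {[link.switch for link in links]}")
```

With `switches_support_vpc=False` the output matches the diagram in this post: both of FI-A’s uplinks go to one switch and both of FI-B’s go to the other.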

If we decide to use iSCSI with the design above, can we just attach the VNX to the two N9372PX switches using 2x 10Gb links?

That way other servers or another UCS Mini can take advantage of the iSCSI storage VLAN too.

Yes, a VNX can attach to the Nexus 9Ks (as far as I know… I know they have some FCoE issues, but as far as straight Ethernet doing iSCSI… it should be fine).

Pingback: Introducing the Cisco UCS Mini - Justin's IT Blog | Justin's IT Blog