Recently I was given the chance to install an HP P2000 / MSA2000i iSCSI SAN for a customer. This blog post details setup best practices for using the MSA2000i (technically an MSA2324i) with VMware vSphere 4.1. But first, an overview of the environment: one DL360 G6 server, with two more servers to come, all with dual Xeon E5540 processors, 24 GB of RAM, and eight 1 Gbps NICs. The goal of the project was to virtualize the company’s servers as well as provide better RTO and RPO for existing servers and a new ERP system. Besides the new hardware, we are also using a Cisco 3560G switch on the network side. This is a temporary solution: the single switch will be replaced with two Cisco 3750Gs configured as a stack, which eliminates the single point of failure that the 3560G presents.

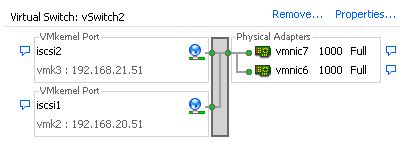

In order to do multipathing properly, we will need two SAN subnets for iSCSI data traffic. Inside each subnet we will have two SAN ports and one port from each ESXi server. We need to set it up this way so that there are multiple source and destination addresses, maximizing the number of paths between SAN and server; this provides redundancy as well as the ability to use multiple gigabit links in tandem. I won’t go into much detail on the servers, but I would recommend using the HP P4000 guide, which also describes multipathing to the P4000. Ignore the parts about the SAN in this case and just focus on the VMware ESXi iSCSI initiator setup. When you are done setting up your servers according to that guide, you should have something like what is in the following pictures:

The first screenshot shows the vSwitch configuration: two physical NICs and two VMkernel ports. The second screenshot shows one of the VMkernel ports, and how one of the NICs must be placed in unused mode and the other in active mode. The setup for the other VMkernel port is just the opposite of this one (so vmnic6 is active while vmnic7 is unused). This effectively ties each iSCSI VMkernel port to a single physical NIC, so that each NIC has its own IP address. There is also some configuration that must be done to map these VMkernel ports to the iSCSI initiator… see the HP guide for that.
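The active/unused rule above is easy to get wrong on one of the two ports, so here is a small Python sketch that encodes it as a check. It models the two ports as a plain dictionary; the port names `vmk1`/`vmk2` and the dictionary layout are hypothetical, and the script does not talk to ESXi at all. The extra check that two ports never share an active NIC is not a VMware rule, just what this two-NIC design intends.

```python
# Hypothetical model of the per-port teaming overrides shown in the screenshots.
# Port names (vmk1/vmk2) and this dict layout are illustrative, not an ESXi API.
TEAMING = {
    "vmk1": {"active": ["vmnic7"], "standby": [], "unused": ["vmnic6"]},
    "vmk2": {"active": ["vmnic6"], "standby": [], "unused": ["vmnic7"]},
}

def check_port_binding(teaming):
    """Return a list of problems; an empty list means every port looks bindable."""
    problems = []
    active_owner = {}  # NIC -> VMkernel port that already uses it as active
    for vmk, policy in teaming.items():
        # Port binding expects exactly one active uplink per VMkernel port...
        if len(policy["active"]) != 1:
            problems.append(f"{vmk}: needs exactly one active uplink")
        # ...and no standby uplinks (move them to unused instead).
        if policy["standby"]:
            problems.append(f"{vmk}: move standby uplinks to unused")
        # Design check for this post, not a VMware rule: one NIC per iSCSI port.
        for nic in policy["active"]:
            if nic in active_owner:
                problems.append(f"{vmk}: {nic} is already active for {active_owner[nic]}")
            active_owner[nic] = vmk
    return problems

print(check_port_binding(TEAMING) or "teaming layout OK")
```

Swapping either port to two active NICs, or leaving a NIC in standby, makes the function return a non-empty list describing the problem.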

Now the new part: the HP MSA2000i SAN.

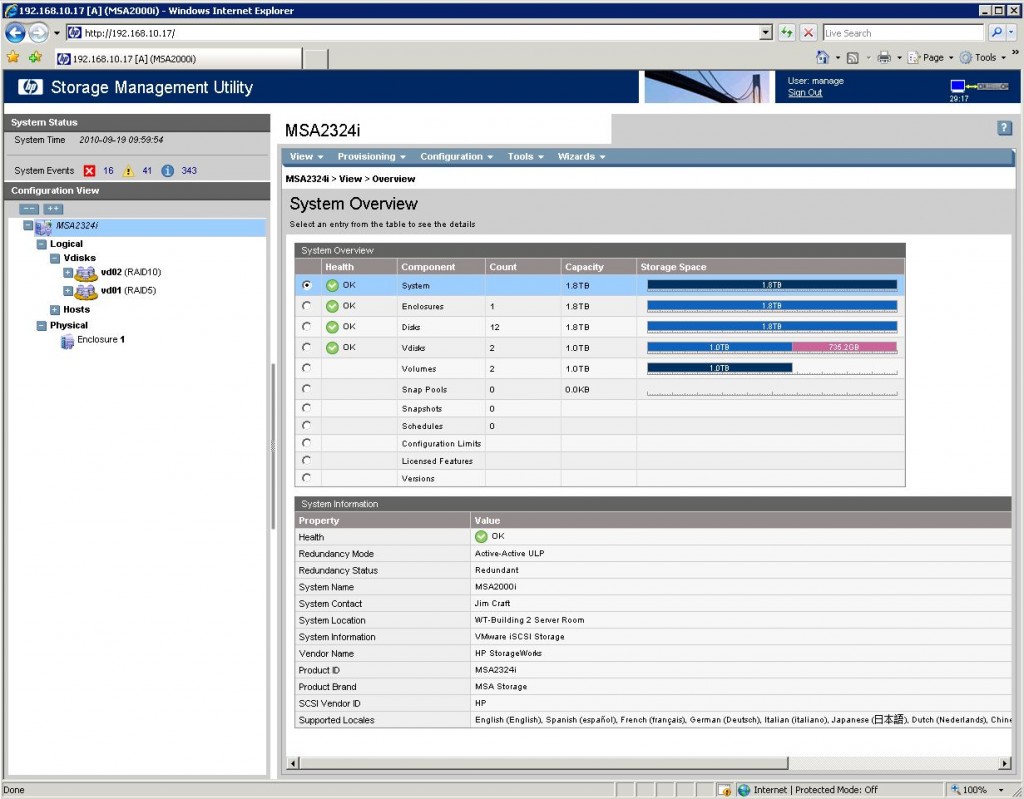

Configuration of this SAN can be done completely from a web browser, making it very simple for the SMB market to work with. It comes with a CD that helps you discover it the first time you plug it in; however, as long as DHCP is available on your network, it will request an IP address on its own, and you just need to look through your DHCP leases to see which addresses were just handed out. After logging in, you are presented with an easy-to-understand interface:

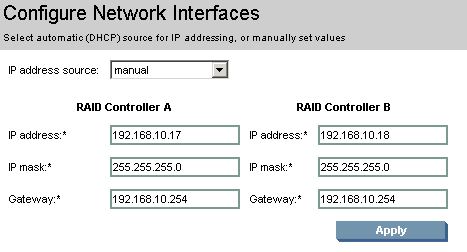

Basically, this interface shows your Vdisks (think of these as RAID groups), and under those are the actual volumes that get presented to your initiators. From the main page you can manage the configuration of the MSA controllers by clicking the Configuration tab and going to System Settings -> Network Interfaces. This is where we will set a static IP address for each controller. These addresses should be allocated from a management VLAN, or, if you don’t use a management VLAN, from your normal data VLAN. This MSA has redundant controllers, so we will need two addresses, one for each controller.

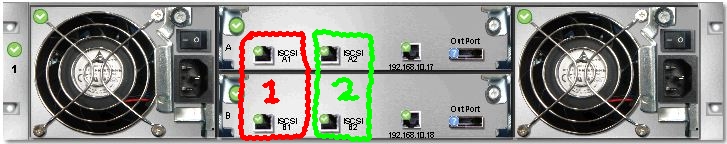

Before I get into any more configuration of the SAN, I think it would be good to know exactly what the back of the MSA looks like, so that the rest is easier to understand. I have also used my amazing GIMP skills to add some boxes with the numbers 1 and 2 in them. These are the subnets that the ports belong to: the two ports in group 1 are in the first SAN subnet, and the two in group 2 are in the second subnet.

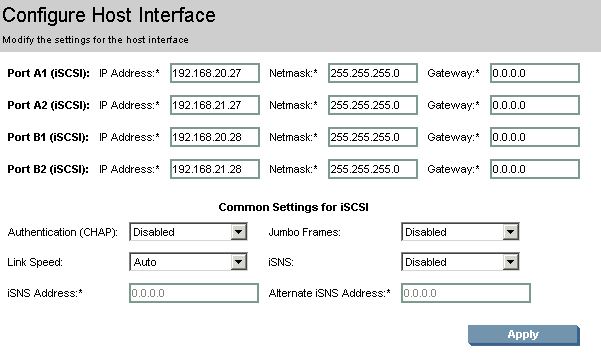

So what the “Configure Network Interfaces” page did was configure the ports on the back just to the right of the green box; these are the management interfaces. To configure the iSCSI interfaces, go to the same Configuration menu, drill down into System, and then pick “Configure Host Interfaces”… yeah, it’s a little confusing at first. I would probably have labeled it “Configure iSCSI Target Interfaces” or something like that… oh well. Once you click that, you are given a screen with four sets of IP addresses. What we need to do here is ensure that each controller has one port in each subnet, so (for simplicity) ports A1 and B1 are in one subnet and A2 and B2 are in the other. Here is what it looks like when it’s completed.

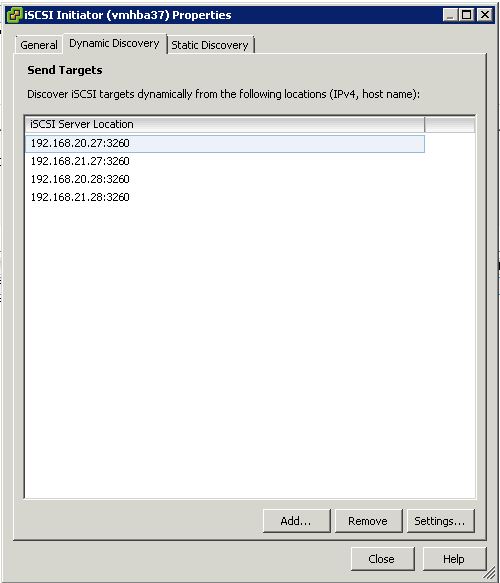
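The rule for this screen is "each controller gets exactly one port in each iSCSI subnet". A minimal Python sketch of that check, using the standard `ipaddress` module, is below. The two subnets are the ones used in this post; the individual port addresses are made up for illustration, and the script assumes port names start with the controller letter (A1, B2, and so on).

```python
import ipaddress
from collections import defaultdict

# The two iSCSI subnets used in this post; the per-port addresses are illustrative.
SUBNETS = [ipaddress.ip_network("192.168.20.0/24"),
           ipaddress.ip_network("192.168.21.0/24")]
HOST_PORTS = {
    "A1": "192.168.20.10", "B1": "192.168.20.11",  # first subnet
    "A2": "192.168.21.10", "B2": "192.168.21.11",  # second subnet
}

def subnets_by_controller(host_ports, subnets):
    """Map controller letter ('A' or 'B') -> the subnet of each of its ports."""
    layout = defaultdict(list)
    for port, addr in host_ports.items():
        ip = ipaddress.ip_address(addr)
        # None marks a port that is outside both iSCSI subnets.
        layout[port[0]].append(next((net for net in subnets if ip in net), None))
    return layout

def layout_ok(host_ports, subnets):
    """True if every controller has exactly one port in each iSCSI subnet."""
    expected = sorted(subnets, key=str)
    return all(sorted(nets, key=str) == expected
               for nets in subnets_by_controller(host_ports, subnets).values())

print(layout_ok(HOST_PORTS, SUBNETS))  # True
```

A layout that puts both A1 and A2 in the first subnet returns `False`, because controller A would then have no port in the second subnet.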

We used 192.168.20.0/24 and 192.168.21.0/24 as our two iSCSI subnets. I did not configure a gateway on these ports because there should be no reason for them to access anything outside of our iSCSI VLAN. Once that is all set up and your volumes have been mapped to a controller, you should be able to enter the MSA’s host interface addresses into the ESXi software initiator and rescan the HBA. Make sure to enter ALL of the MSA’s IP addresses: unlike the LeftHand (P4000), where you only need one, the MSA needs multiple addresses for multipathing to work.

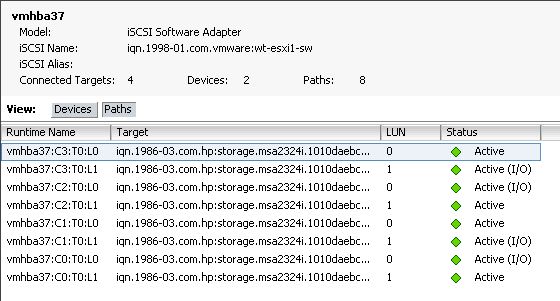

After rescanning the HBA we should have a bunch of paths to our volumes.
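How many is "a bunch"? Since none of the iSCSI ports has a gateway, a VMkernel port can only reach MSA ports in its own subnet. The Python sketch below enumerates those pairs for one host and one volume. The subnets match this post, but the individual addresses and the `vmk1`/`vmk2` names are hypothetical.

```python
import ipaddress
from itertools import product

# Hypothetical addressing: subnets match this post, host addresses are made up.
SAN_PORTS = {
    "A1": "192.168.20.10",
    "B1": "192.168.20.11",
    "A2": "192.168.21.10",
    "B2": "192.168.21.11",
}
HOST_VMK_PORTS = {
    "vmk1": "192.168.20.101/24",
    "vmk2": "192.168.21.101/24",
}

def iscsi_paths(vmk_ports, san_ports):
    """Return (vmk, san_port) pairs whose addresses share a subnet (no gateway)."""
    paths = []
    for (vmk, cidr), (port, addr) in product(vmk_ports.items(), san_ports.items()):
        iface = ipaddress.ip_interface(cidr)
        if ipaddress.ip_address(addr) in iface.network:
            paths.append((vmk, port))
    return paths

if __name__ == "__main__":
    for vmk, port in iscsi_paths(HOST_VMK_PORTS, SAN_PORTS):
        print(f"{vmk} -> {port}")
    print(len(iscsi_paths(HOST_VMK_PORTS, SAN_PORTS)), "paths per volume")
```

With two VMkernel ports and four MSA ports split across two subnets, each host ends up with four paths to every volume.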

Even after configuring Round Robin multipathing, not all of the paths will be “Active (I/O)”; this is because of how the MSA operates. When you create a volume, you map it to a controller, and that is the active controller that services I/O to the volume. For failover purposes the other controller takes a passive role for that volume; if the active controller fails, the passive controller takes over I/O for the failed controller’s volumes. What is nice about the MSA2000, along with other storage solutions, is that each volume can be mapped to either controller for its primary I/O. This lets the MSA use the CPU and network resources of both controllers during normal operation, which maximizes the performance of the SAN. Obviously, if a controller fails, throughput may be a little slower while that controller is offline.
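To make the path states concrete, here is a toy Python model of one ESXi host looking at one volume. It only encodes the ownership rule described above (paths to the owning controller carry I/O, paths to the partner controller wait for a failover); it does not query ESXi or the MSA, and the subnet numbering and `vmk` names are hypothetical.

```python
from itertools import product

# Illustrative model of the layout in this post: one host port per controller in
# each subnet, and one bound VMkernel port per subnet (vmk names are hypothetical).
TARGET_PORTS = {"A1": 1, "B1": 1, "A2": 2, "B2": 2}  # MSA port -> subnet number
VMK_PORTS = {"vmk1": 1, "vmk2": 2}                   # ESXi port -> subnet number

def classify_paths(owner):
    """Split one volume's paths by whether they reach the owning controller."""
    io_paths, idle_paths = [], []
    for (vmk, vmk_net), (port, port_net) in product(VMK_PORTS.items(), TARGET_PORTS.items()):
        if vmk_net != port_net:
            continue  # different subnets: no path at all
        # Port names start with the controller letter, e.g. "A1" -> controller A.
        (io_paths if port.startswith(owner) else idle_paths).append((vmk, port))
    return io_paths, idle_paths

io_paths, idle_paths = classify_paths("A")
print("Active (I/O):", io_paths)   # [('vmk1', 'A1'), ('vmk2', 'A2')]
print("No I/O:", idle_paths)       # [('vmk1', 'B1'), ('vmk2', 'B2')]
```

Calling `classify_paths("B")` models the failover case, where the B-side paths become the ones carrying I/O. Mapping half of the volumes to each controller is what keeps both sets of paths busy in normal operation.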

Overall, setup of the MSA took maybe 2 hours from the time it was pulled out of the box until volumes were ready to be presented to the VMware cluster. Array initialization did take much longer for the RAID5 and RAID10 volumes that I configured. The price point of the MSA2000i series is very reasonable for the SMB market: the 12-drive LFF model with dual controllers has an MSRP of $7,800, while the 24-drive SFF model with dual controllers is $8,100. These prices do not include drives, and that is where you can either make the price very high or keep it reasonable. However, you can mix and match drives inside each chassis. If you have database servers or MS Exchange, you can put in some SAS drives and create a high-end datastore, while for file servers you could add a few terabytes of SATA drives for higher storage capacity. Plus, it can grow with your business by simply adding an MSA70 shelf to the mix: on the SFF models you can add three more shelves for a total of 99 drives, and on the LFF models you can add four more shelves for a total of 60 drives.
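As a quick check on those expansion totals, the arithmetic is just base bays plus shelves times bays per shelf. The per-shelf sizes in this sketch are inferred from the quoted totals (25 bays per SFF shelf, 12 per LFF shelf), not taken from an HP spec sheet.

```python
def max_drives(base_bays, shelves, bays_per_shelf):
    """Total drive bays: the base enclosure plus identical expansion shelves."""
    return base_bays + shelves * bays_per_shelf

# Shelf sizes inferred from the quoted totals: (99 - 24) / 3 = 25, (60 - 12) / 4 = 12.
print("SFF model:", max_drives(24, 3, 25))  # 99
print("LFF model:", max_drives(12, 4, 12))  # 60
```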

Combine an MSA2000i with the VMware Essentials Plus package, the Veeam Essentials for VMware bundle, a Cisco switch, and three HP dual six-core servers with plenty of RAM, and you will have no problem running almost any SMB workload at a very reasonable price.

Shopping list for MSA2000i SAN solution used in this article:

- HP MSA2324i SAN with Dual Controllers and 3 Year Warranty – $8,100

- 12 x 146GB 15k RPM Hard Drives – $5,268


Why do you have multiple subnets for the ESX host NICs and the MSA NICs? The multi-vendor post (http://virtualgeek.typepad.com/virtual_geek/2009/09/a-multivendor-post-on-using-iscsi-with-vmware-vsphere.html) on iSCSI with vSphere makes no mention of splitting the NICs over multiple subnets.

Thanks for the comment, Chris! Check out HP’s best practices guide for the MSA; page 42 has a great picture, although it doesn’t really go into the “why”, so… We need the ports in different subnets because all of the cables in the first subnet can be run to one switch, while the other set can be run to a different switch. The same goes for the ESX servers: all the iSCSI ports in subnet A go to switch A, while all ports in subnet B go to switch B. This creates two completely redundant paths and leaves no single point of failure. So in the end, the reason you need two subnets is that switch A and switch B are not connected to each other. If all the SAN ports (and the ESXi ports) were in the same subnet, we would run into situations where a port on the ESXi server tries to reach a SAN port that is cabled to the other switch, and because the switches have no path between them, that attempt would time out and cause all sorts of problems. Let me know if you have more questions; I would be happy to help out more or explain in more detail.
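The argument in this reply can be checked with a toy Python model: mark each port with the switch it is cabled to and the subnet it is in, then list the pairs the host would try (same subnet) that can never connect (different, unlinked switches). The port names, switch labels, and subnet labels are hypothetical.

```python
# Illustrative model of the reply above: two switches with no link between them.
# Each port maps to (switch, subnet); names and subnet labels are hypothetical.
def unreachable_pairs(initiators, targets):
    """Pairs the host would try (same subnet) that can never connect (different switch)."""
    return [
        (i_name, t_name)
        for i_name, (i_switch, i_net) in initiators.items()
        for t_name, (t_switch, t_net) in targets.items()
        if i_net == t_net and i_switch != t_switch
    ]

# One shared subnet across both switches.
ONE_SUBNET_HOSTS = {"vmk1": ("switch A", "net1"), "vmk2": ("switch B", "net1")}
ONE_SUBNET_TARGETS = {"A1": ("switch A", "net1"), "B1": ("switch A", "net1"),
                      "A2": ("switch B", "net1"), "B2": ("switch B", "net1")}

# One subnet per switch, as recommended in the reply.
TWO_SUBNET_HOSTS = {"vmk1": ("switch A", "net1"), "vmk2": ("switch B", "net2")}
TWO_SUBNET_TARGETS = {"A1": ("switch A", "net1"), "B1": ("switch A", "net1"),
                      "A2": ("switch B", "net2"), "B2": ("switch B", "net2")}

print("one subnet:", unreachable_pairs(ONE_SUBNET_HOSTS, ONE_SUBNET_TARGETS))
print("two subnets:", unreachable_pairs(TWO_SUBNET_HOSTS, TWO_SUBNET_TARGETS))
```

With a single subnet, half of the pairs the host would attempt (four of eight) cross between the unlinked switches; with one subnet per switch, there are none.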

Thanks! That makes more sense and it’s nice to have the “best practices” PDF — not sure why I couldn’t find this on my own at HP… (or why my installation vendor couldn’t find it and follow the instructions in it!!!)

Hey Justin, I just found your blog, and it’s a great intro to the HP P2000 iSCSI SAN. We just had one delivered to my location the other day, loaded with twelve 2 TB SAS disks for a total of 24 TB of storage. I am going to be working with a consultant shortly on setting up the disks and partitioning them, but I would like your thoughts on the best way to carve up that storage. The HP P2000 will be serving two HP servers running VMware ESXi 4. Each server has eight gigabit NICs.

Anyway great blog and I am very glad I stumbled onto it.

Much simpler to follow, and makes much more sense than the HP guide, nice one 🙂

Hey, do you know if the MSA2000i or P2000 G3 requires HP original hard drives like the Dell MD3000i does? The MD3000i doesn’t take third-party HDDs, and Dell’s markup on HDDs makes NexentaStor look pretty awesome. So, has anyone tried Hitachi or Seagate Nearline SAS drives in those SANs?

I could answer your question a little better offline, but since you didn’t provide a real email address, I’ll do my best here.

The MSA and P2000 are OEM’d from a third-party manufacturer. SAS drives from HP do come with HP firmware on them, but from what I understand, SATA drives just run stock firmware.

The catch is drive trays. The LFF model SAN uses an odd tray, which isn’t the standard ProLiant 3.5″ drive sled.

The simple answer is that it will indeed take just about any drive with a SAS/SATA connector that you have.

Hi Justin –

I used your site extensively when setting up our SAN a while back. One question I have: on our two-controller iSCSI setup, I have both management ports set up on the same subnet (different IPs, of course) and connected to my LAN. After a period of time, I get the SSL error:

“ssl_error_bad_mac_alert” if I try connecting via web browser. If I go and disconnect, say the 2nd port, I’ll be able to log in. Have you seen this?

Hi Justin-

Just wondering if you have tried this at all in vSphere 5.0? I had a design very similar to yours and then I came across this KB from VMware that seems to invalidate it all.

http://kb.vmware.com/selfservice/microsites/search.do?cmd=displayKC&docType=kc&docTypeID=DT_KB_1_1&externalId=2038869

What are your thoughts?

Thanks,

Scott

Hi Justin-

Great post! I’m in the process of installing a P2000 SAN in my environment, and I have a design almost the same as yours. However, I came across this VMware KB that seems to invalidate both your design and mine. What are your thoughts on this? I’m running vSphere 5.0 and the P2000 G3 iSCSI dual-controller SAN.

Thanks,

Scott

http://kb.vmware.com/selfservice/microsites/search.do?cmd=displayKC&docType=kc&docTypeID=DT_KB_1_1&externalId=2038869

I’m wondering the same thing. I’ve got a P2000 on its way to my office and am wondering if I should go with or without port binding. In the past I have set up an EMC VNXe SAN in exactly the same configuration as described above, but this VMware article suggests it’s a bad idea.

Thoughts?

-Jeff

I would have to look into that article more. That is how I have always configured things: it’s how I was running the P2000 systems and it’s how I’m running my VNXe3100 as well, and I have had no issues.

Hey there, nice guide. Is the process pretty much the same on a 2012i?

yup

Thanks… My web management interface looks way different than yours, and I am a bit confused about how whoever set this up did it. For example, we only have one ESX host and only one controller in the MSA. One of the iSCSI ports goes directly to the ESX host; where I get lost is that the other iSCSI port just goes back to a network switch for whatever reason. Also, like I said, the MSA only has one controller and two RAID5 volumes. What is weird is that the second RAID5 volume shows as being on “RAID Controller B”, which I assume refers to the secondary slot, but that slot is currently empty. lol

Hi Justin and Jeff-

Any thoughts or ideas on that article mentioned above? I opened a call with VMware, but they are still trying to figure it out.

http://kb.vmware.com/selfservice/microsites/search.do?cmd=displayKC&docType=kc&docTypeID=DT_KB_1_1&externalId=2038869

Thanks,

Scott

I am setting up an MSA2000 iSCSI connected to a blade enclosure. I am trying to figure out how to make a shared volume that any computer on our domain can access, but there doesn’t seem to be a way to do this?

What you are talking about is a “file” share; the MSA2000 iSCSI is a block storage device. In order to share that storage, you will need to present the block device to a server and then use software on the server to publish it to other computers. If you had something like a NetApp filer or an EMC VNX, you could present the storage directly.

thanks much.

Hi Steve.

How can I allow only one server to manage this share? Whenever I log on to any of my Server 2008 servers that has the iSCSI initiator started, it shows up in My Computer. I don’t want that happening.

Thanks in advance.

Oh, Steve, I have the MSA2000 iSCSI.

Terry.

Hi Justin,

We use the SAS variant extensively at our clients’ sites, but I have been reading up on the remote snap functionality of the iSCSI variants.

Do you have any experience with remote snap replication? If so, does it work reliably with VMware vSphere?

Have you ever used the 10Gbit model? IMHO the 1Gbit iSCSI variant is not an option if you’re used to the 6Gbit SAS version.

Thanks,

Dennes

Thanks Justin.

This was exactly what I was looking for. I just got my P2000 and am dying to get it going and finally have shared storage for our VMware environment.

Len

How can one change the SSH port and HTTPS port of the storage?