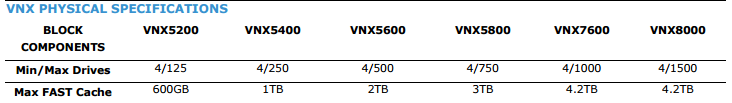

UPDATE: I am starting to update this page with VNX2 (code name Rockies) information. So far I have only uploaded the components sheet; I will update the others when I get time.

I find that when I’m talking to customers, they are often not sure what makes up a VNX array. This can complicate troubleshooting over the phone and cause confusion on both sides. To help clarify things, I have created a series of quick reference sheets showing the components and what they are called. I have also created a couple of sheets that show which cables are involved in a VNX system and where they go. Stay tuned for VNXe sheets.

For the official VNX spec sheet, head over to EMC’s website.

Quick Reference Sheets

| Thumbnail | Title | PDF Link | PNG Link |

|

VNX 5100/5300/5500 Components | PNG | |

|

VNX 5200/5400/5600/5800/7600 Components | PNG | |

|

VNX 5100/5300/5500 SAS and Power Cables

I also did a quick how to video for doing these cables |

PNG | |

|

VNX 5300/5500 Unified/File Cables | PNG | |

|

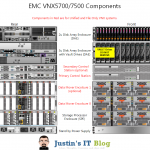

VNX 5700/7500 Components (also the VNX 8000 uses the same general layout) |

PNG | |

|

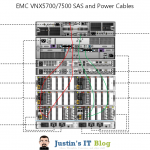

VNX 5700/7500 SAS and Power Cables | PNG | |

|

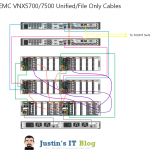

VNX 5700/7500 Unified/File Cables | PNG |

Components and their purpose

Standby Power Supply – SPS – This is a 1U uninterruptible power supply designed to keep the storage processors running during a power failure long enough to write any data in volatile memory to disk.

Disk Processor Enclosure – DPE (VNX5100/5300/5500/5200/5400/5600/5800/7600 models) – This is the enclosure that contains the storage processors as well as the vault drives and a few other drives. It contains all connections related to block-level storage protocols, including Fibre Channel and iSCSI.

Storage Processor Enclosure – SPE (VNX5700/7500/8000 models) – This is the enclosure that contains the storage processors on the larger VNX models. It takes the place of the DPE mentioned above.

Storage Processor – SP – Usually followed by “A” or “B” to denote which one it is; all VNX systems have 2 storage processors. It is the job of the storage processor to retrieve data from disk when asked, and to write data to disk when asked. It also handles all RAID operations as well as read and write caching. iSCSI and additional Fibre Channel ports are added to the SPs using UltraFlex modules.

UltraFlex I/O Modules – These are basically PCIe cards that have been modified for use in a VNX system. They are fitted into a metal enclosure that is then inserted into the back of the storage processors or data movers, depending on whether it is for block or file use.

Control Station – CS – Normally preceded by “Primary” or “Secondary”, as there is at least 1, but most often 2, control stations per VNX system. It is the job of the control station to handle management of the File or Unified components in a VNX system. Block-only VNX arrays do not use a control station. In a Unified or File-only system, however, the control stations run Unisphere and pass all management traffic to the rest of the array components.

Data Mover Enclosure – Blade Enclosure – This enclosure houses the data movers for file and unified VNX arrays.

Data Movers – X-Blades – DM – Data movers (aka X-Blades) connect to the storage processors over dedicated Fibre Channel cables and provide file (NFS, pNFS, and CIFS) access to clients. Think of a data mover as a Linux system with SCSI drives in it: it takes those drives, formats them with a file system, and presents them over one or more protocols for client machines to access.

Disk Array Enclosure – DAE – DAEs come in several different flavors, 2 of which are depicted in the quick reference sheet. The first is a 3U enclosure that holds 15 3.5″ disk drives; the second is a 2U enclosure that holds 25 2.5″ disk drives; and the third is a 4U enclosure that holds 60 3.5″ drives in a pull-out cabinet style. The third type is the rarest and is not normally used unless rack space is at a premium.

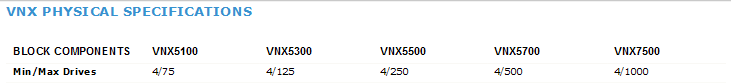

Maximum drives per System

I plan to update this article soon with the latest information, but I have noticed a LOT of traffic to this page so I thought I would include a quick contact form in case you have more questions or if you just need something clarified here. I think I may start doing this on some of my posts just because I don’t get emails that comments are waiting for me to approve or review. So hopefully this will speed up my ability to respond to any questions you may have.

Have a question or need more info? Let me know.


Again, I highly doubt it.

I have not tried it… I’m guessing probably not, since the DPE can tell what type of shelf it is. Basically, the firmware version on the shelf would be so out of whack that it would probably just say no.

5200 Part numbers:

25×2.5″ Shelf – VNXB6GSDAE25F – VNXB 25X2.5 6G SAS EXP DAE-FIELD INST

15×3.5″ Shelf – VNXB6GSDAE15F – VNXB 15X3.5 6G SAS EXP DAE-FIELD INST

5300 Part numbers:

25×2.5″ Shelf – V2-DAE-N-25-A – 25 x 2.5 IN 2U DAE FIELD INSTALL

15×3.5″ Shelf – V31-DAE-N-15 – 3U DAE WITH 15X3.5 INCH DRIVE SLOTS

Hi, thanks for the write up and reference sheets!

I have a VNX5200 and a few questions…

Should the “Data Mover Enclosure” shown in the reference be named SPA and SPB instead?

There are additional 1G Ethernet cards on each of the SPs. Each card has two Cat5e cables plugged into our managed switches. Each set of interfaces is in a port-channel.

Do the CIFS/NFS shares rely on the cables on the SPs, or on the cable of the CS’s management port?

Are the SPs in an active-active state or an active-standby state?

Would any failover be triggered if I unplug the Cat5e cables of the CS or SP? (I need to relocate all 6 cables to another switch.)

1.) The data mover enclosure is where the NAS side of the system is, not the block side. SPA and SPB refer to the storage processors in the “Storage processor enclosure”. Data movers are called X-Blades or data movers, not SPs.

2.) CIFS/NFS rely on the Ethernet cables plugged into the data movers, not the management cables that come out of the control stations.

3.) I assume you mean the data movers, as all your other questions are around the NAS functions. In that case, the data movers can be active-active or active-standby depending on how you have them set up, but a NAS service like CIFS or NFS can only reside on one data mover, and if that one goes down it will fail over to the other.

4.) Maybe. If the networking on the data movers is set up in a fail-safe mode, unplugging the cables can cause a failover to the other data mover. By default, though, a loss of network connectivity will NOT cause a service failover.

Let me know if that doesn’t clear up your questions.

Do the CS, DM, and SP have hard disks?

Where are the Unisphere software, Block OS, and File OS located? (Is it on disks 0-3?)

CS – They have local internal hard drives that hold their Linux operating system.

DM – Data movers have internal storage on their controller, from what I understand.

SP – They use the vault disks 0-3 in the DPE.

Unisphere software runs either on the storage processors if the array is block-only, or on the control stations if it is a unified array.

The Block OS, or “FLARE”, lives on the vault drives; the File OS lives in the data movers.

Does that answer your question?

You have to remember that the block side of any Unified or File-only array could live by itself as a block-only array, as long as it’s not already fused to the file components. If you have the right commands to reinit the array and then reprovision it from the ground up, you can actually unplug the CSs and DMs and make the array block-only. That is why I say that each component’s OS lives on it, and they are not all contained on the DPE.

How would you explain file-based VNX storage?

Not sure what you mean?

Hey there, Thanks for the reference sheets and explanations!

We have a customer with a VNX5500. They have acquired new storage and are planning the migration.

Now the nightmare will come when the CIFS/NFS shares need to be migrated over a 1 Gb network.

As previously stated, “CIFS/NFS rely on the Ethernet cables plugged into the data movers”.

Now, on the data mover there is a 4-port 1 Gb I/O module (2 ports used and 2 not used).

Can these 2 free ports be used to create a link/network directly attached to a Windows file server cluster, so we can bypass the current network to increase the copy speeds? (If so, could we maybe install a 10 Gb I/O module and achieve the same?)

Or what other options do we have?

You should be able to link directly to a server as long as you set up the IP addressing properly. For a direct connection you could use a /30 subnet.

You will also have to do some configuration on the VNX data mover to associate those ports with it.

How much data do you plan to move? It seems like a lot of money just to migrate, especially when you could just use emcopy to do an initial sync, then follow up on cutover day with a final sync.
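To make the /30 suggestion concrete, here is a minimal Python sketch. The 192.168.250.0/30 network is a made-up example, not anything from a real VNX configuration; the point is only that a /30 leaves exactly two usable addresses, one for the data mover port and one for the server.

```python
import ipaddress

# Hypothetical point-to-point network; substitute a range that is unused in your environment.
link = ipaddress.ip_network("192.168.250.0/30")

# A /30 has four addresses: network, two usable hosts, and broadcast.
usable = list(link.hosts())
data_mover_ip, file_server_ip = usable

print("netmask:    ", link.netmask)
print("data mover: ", data_mover_ip)
print("file server:", file_server_ip)
```

Which end gets which address does not matter; the two hosts only need to be in the same /30 and not overlap with any routed network.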

Hey, thanks for the speedy reply! 🙂

OK, so it is possible to use the 2 extra 1 Gb ports to set up a direct connection.

Do you have more details on the steps to follow for the configuration on the data mover to associate the ports?

Also, we need to migrate about 24 TB of data (CIFS/NFS). We are currently testing emcopy but only getting a transfer rate of 10-13Mb/s 🙁

Also, we might be able to get the 10 Gb module on a loan basis until we are done with the migration, so no money spent 🙂
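For a sense of scale, here is a back-of-the-envelope estimate in Python. It assumes the data set is 24 terabytes in decimal units and that the quoted rate of 10-13 is in megabytes per second; if the rate was really megabits per second, the times would be eight times longer. The 125 MB/s figure is simply the theoretical line rate of a 1 Gb/s link with protocol overhead ignored, included for comparison.

```python
def transfer_days(total_tb: float, rate_mb_per_s: float) -> float:
    """Days needed to copy total_tb terabytes at a sustained rate in MB/s."""
    total_mb = total_tb * 1_000_000      # decimal units: 1 TB = 1,000,000 MB
    seconds = total_mb / rate_mb_per_s
    return seconds / 86_400              # 86,400 seconds per day

# Observed rates from the comment, plus the 1 Gb/s theoretical line rate.
for rate in (10, 13, 125):
    print(f"{rate} MB/s -> {transfer_days(24, rate):.1f} days")
```

At the observed rates the copy takes roughly three to four weeks, versus a little over two days at line rate, so the bottleneck is worth finding before buying or borrowing hardware.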

http://www.uccx.net/wp-content/uploads/2012/06/VNX-Networking.pdf

The bottom of page 33 should be what you want. It’s CLI based, though. I’m at a conference right now with no easy access to an array to see what the GUI would look like.


We are looking to extend the storage on our VNX 5500 by 45 TB of raw storage. Do you have recommendations on reputable companies we can go with, or a good online store if we buy direct?

Any of the major online retailers can scope and sell you an upgrade. The company I work for can also do it.

The biggest thing is that whoever you really want to buy it from is the first place you should contact, due to the deal registration process. Basically, whoever you mention the upgrade to first will register the deal, and from there that reseller will have a huge advantage over all other resellers.

I have a question regarding disk enclosures for a NAS system. What do we call an extension enclosure for NAS and SAN (File and Block)? What is the maximum number of File and Block extension enclosures we can add?


Disk enclosures are called DAEs in EMC speak (Disk Array Enclosures).

The maximum number of enclosures depends on the model of array you have, but generally: the maximum number of drives in the array divided by the number of drive slots in the DAEs = the number of DAEs you can attach. Obviously this gets more complex when you start mixing DAE types.
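As a rough sketch of that rule of thumb in Python: the function name and the sample numbers below are illustrative assumptions, not figures taken from an EMC spec sheet. On DPE-based models the DPE’s own drive slots count toward the maximum, so they are subtracted first; on SPE-based models leave `dpe_slots` at 0.

```python
import math

def daes_needed(max_drives: int, dae_slots: int, dpe_slots: int = 0) -> int:
    """Estimate how many same-sized DAEs it takes to reach max_drives."""
    remaining = max(max_drives - dpe_slots, 0)
    return math.ceil(remaining / dae_slots)

# Hypothetical array: 125-drive limit, 25-slot DPE, 25-slot DAEs.
print(daes_needed(125, dae_slots=25, dpe_slots=25))

# Same limit with 15-slot DAEs behind a 25-slot DPE.
print(daes_needed(125, dae_slots=15, dpe_slots=25))
```

When the division does not come out even, the result is rounded up, which means the last shelf ends up only partly populated. Always confirm the real limits for your model against the official spec sheet.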

Is the VNX5100/5300 600G SAS HDD (VNX51/53 600GB 15K SAS UPG DRV 15X3.5 DPE DAE)

P/N: V3-VS15-600U

suitable for VNX5200/5400 use?

nope.

Regarding last question about using VNX5300 SAS drives in 5200/5400 VNX.

Yes, it is possible but requires EMC checking and special approving.

We use our VNX 5300 DAEs with new 5200/5400 systems.

Thank you Yuri!

I had not been aware of this.

Hi Justin,

I was recently asked an interview question on the phone: what is the name of the hardware component that SPA\SPB reside in on a VNX Unified? I was honest, because I am really not a rack-and-stack guy and am primarily more of a UI admin, but I just wanted to be prepared in case the same question arose again.

Thanks again,

Nick

Some call it the Storage Processor Enclosure (SPE) and others call it the DPE, or Disk Processor Enclosure.

The reason is that on the smaller units SPA and SPB are in the back of a disk shelf, while the larger units have a dedicated shelf just for the SPs.

Hi Justin,

What diagram tool did you use to create the graphics in this post? Is it Creately?

Nope, MS Visio.

So…here’s an interesting one…

I bought some VNX 7500s, but I can NOT get them reset in order to connect to them.

It looks like the Control Stations are bricked…but that’s fine…

I’d just like to connect to the SPs and try to get a look at something…anything…

But the default IPs won’t work…no ping…no Unisphere, even after a full reset.

Probably something stupid…any thoughts?

Thanks in advance.

Interesting. I cannot post my longer and actual question.

I get an error message that it is a duplicate post that I have already written.

So, this is just a test to see if I can post at all.

Hey Seth,

Just FYI, I have to approve all comments before they get posted, which is why it said duplicate post: your first one was waiting for me to approve it.

Justin,

I need to mix SAS cabling. I have a VNX5400 (DAE 25 SAS and DAE 15 NL-SAS). Is there any problem mixing them in the same stack?

Nope, you can mix and match shelves. Just make sure you have them on the same bus and at the same position on the bus.

So if the shelf is on bus 1, shelf 3 on the A side, for example, make sure it’s the same on the B side too.

Justin,

Thank you for this blog and the download – they are excellent!!!

I have 4 disk enclosures: two are CX500s with FLARE/SPs, and two are DAEs with no vault drives (hence no storage processors).

I noticed that the two DAEs without vault drives have labels on the power supplies that read “do not use for DPE CX500”.

QUESTION(s):

1. Isn’t it true that the DAE becomes a DPE once you add the Storage Processor cards?

2. If I were to place backup copies of the vault drives into a DAE, and THEN also add a storage processor (perhaps a CX300), do you have any thoughts or concerns about this power supply configuration?

Thanks!!

Jason

Justin,

Isn’t it true that the DAE becomes a DPE once you add the Storage Processor cards?

Thanks for the great blog and documents/info!!

Jason

DPEs and DAEs are certainly not the same.

DPEs have a lot more traces on the backplanes that hook the SPs to the front disks. They are also longer.

In fact, storage processors cannot be inserted into a DAE; the sheet metal just isn’t long enough.

DAEs only hold disk drives and must be attached to a DPE via a SAS or Fibre Channel cable (FC only for the CX series, SAS only for the VNX series).

Is there a limitation to the number of DAEs you can put on each BUS?

Justin,

I have two CX500 arrays (with FLARE) working fine, and 2 DAEs with just blank drives. I noticed that the power supplies on the DAEs say “do not use for DPE CX500”. NOTE: it says “DPE”, NOT “DAE”!

My question:

1. Isn’t a DPE really just a DAE, except that it has SPs installed?

The reason I ask is that I have extra SPs, and I wanted to expand the DAEs into usable standalone arrays. (Note: I have vault drives as well. I am not sure if the SPs are CX300 or CX500; I will certainly make sure I don’t use them if they’re CX300 in this case. I’m just curious if I’m missing the point on the enclosure being labeled a DAE when, for all intents and purposes, it is identical to the CX500s already built and operating.)

Thanks in advance!!

Yup.

This is determined by the model of the array, but generally it’s a few more shelves than what is required to hold the maximum number of disks.

So, for example, a VNX5300 can hold 125 drives max and has 2 buses. I also have the option to use 12-bay DAEs or 25-bay DAEs.

So if I bought 25-drive DAEs, the most a system would hold is the 1 DPE and 4 expansion DAEs, if they were all 25-bay units.

If I used 12-bay units, I could have the DPE as well as 10 additional DAEs.

And lastly, if I mix 25- and 12-bay DAEs then all bets are off, as you will certainly have more than 125 bays for disks, but the system will only recognize the first 125 disks that are inserted.
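The mixed-shelf case can be sketched in a few lines of Python. The bay counts and the 125-drive limit are the ones quoted in the reply above; the function and the particular mix of shelves are hypothetical.

```python
def bay_summary(max_drives: int, shelf_bays: list[int]) -> dict[str, int]:
    """Compare the physical bays in a stack of shelves with the array's drive limit."""
    total = sum(shelf_bays)
    return {
        "total_bays": total,
        "usable_bays": min(total, max_drives),
        "bays_over_limit": max(total - max_drives, 0),
    }

# One 25-bay DPE, three 25-bay DAEs, and three 12-bay DAEs.
print(bay_summary(125, [25, 25, 25, 25, 12, 12, 12]))
```

In this made-up layout there are more physical bays than the array will recognize drives, so some slots have to stay empty no matter how the shelves are arranged.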

Justin:

Can 600GB 15K RPM drive DAE’s from a 5300 be ported to a 7500?

Thanks.

Shouldn’t be a problem. Just make sure to check part numbers on the HCL that EMC publishes.

Hi Justin,

We will be implementing FAST Cache on a VNX7500.

We have 15-slot DAEs with 232 × 600GB SAS disks,

56 × 100GB flash disks, and 30 × 600GB SAS disks for spares.

I’m getting confused about how to set up the disk layout. Any help would be great.

Well, FAST Cache is always RAID 1. You can have up to 1500GB on a 7500, so that means you could use at most 30 of your SSDs there. I should mention, though, that there IS a difference between SSDs for FAST Cache and those for “normal” use, so you might want to check part numbers on them.
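The arithmetic behind that drive count, as a small Python sketch. It assumes, as the reply does, a 1500GB FAST Cache ceiling and 100GB SSDs in RAID 1 pairs; the function name is made up, and the real limit for a given model should be checked against EMC’s documentation.

```python
def max_fast_cache_drives(cache_limit_gb: int, drive_gb: int) -> int:
    """Largest number of SSDs that fit under the cache limit when mirrored in pairs."""
    # In RAID 1 each mirrored pair contributes one drive's worth of usable capacity.
    pairs = cache_limit_gb // drive_gb
    return pairs * 2

print(max_fast_cache_drives(1500, 100))
```

With those inputs the result is 15 mirrored pairs, which is where the figure of 30 drives comes from.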

As for the other disks, it really just depends on what you want to do. You could optimize for capacity or speed, or do a mix of both. One thing to remember is that once you put a disk in a pool or RAID group, the only way to get it back is to delete that whole pool or group. So I would say that you should start with a smaller number of drives per pool and then add drives to those pools when you need more speed or capacity.

Hi Mr. Justin,

We are going to use a VNX 8000 in our new project, so kindly let me know more about VPLEX, SPE, and BE. I think DME and BE are the same, right?

I am expecting your reply..

Thank you

Vaishakh

Check here: http://davidring.ie/2013/09/11/second-generation-vnx-rockies/

David has a front and a rear view of the VNX8000 on his site.

As far as connecting to VPLEX goes, VPLEX connects into the fabric just like any other server would.

For Yuri ‘s answer to my question:

Regarding last question about using VNX5300 SAS drives in 5200/5400 VNX.

Yes, it is possible but requires EMC checking and special approving.

How do we get special approval? Through professional services?

Thanks.

In our case I talked with our EMC pre-sales guy and he sent a request to get special approval. But first he checked an spcollect to be sure that it was possible.

Hi Justin,

Thank you for taking the time to answer this strange question:

Is it possible, on a VNX 5200, to use only the DME and DPE (without a DAE and CS)?

And is it possible to connect the SAN directly to servers with FC (without an FC switch)?

It is possible to connect a server directly to the DPE without a switch. This is called Fibre Channel Arbitrated Loop, or FC-AL mode for short.

On a VNX5200 you have to have the control stations in order to control the DME. DAEs are just expansion shelves, so you do not need to have DAEs. The DPE is the core of the block side of the array, though, so it is required for block operations.

So in summary, for block operations:

Only the DPE is required

For File or Unified operations (all of the following are required):

DPE

CS0

DME

We have a 5300 Unified and we are not using the File component at all, and we do not have any plans to use it. I want to connect the ESXi hosts to the VNX directly with fibre. I will need to use all 4 fibre connections on the SPs. One of these ports is currently connected to the data movers. I assume I can just “unplug” the fibre tails to the data movers?

Your comments are much appreciated.

Do you have a VNX 5200 Unified and File Only cable diagram? I don’t see one above. Thanks.
