One of my upcoming projects is to install a new EMC Clariion AX4 iSCSI SAN for a customer who currently has an aging AX4 that only has SATA storage. The new SAN has 4 – 300GB drives for the vault drives, and 5 – 600GB 15k SAS drives. This isn’t completely apples to apples (my loaner P2000 only has 146GB 10k drives), but it’s as close as I can get with my current budget (which is $0) haha.

Anyhow, the Clariion AX4 is pretty easy to set up. It took about 30 minutes to rack and stack it and its redundant power supply, and only about 5 minutes to cable it… much different than its Celerra sister that I set up a few months ago.

The test environment is my VMware lab, which consists of an ML370 G5 with 22GB of RAM that will provide the CPU/RAM resources. We are going to hook both an EMC Clariion AX4 and an HP P2000 SAN to it via iSCSI. The iSCSI will be multipathed from the server to the SAN, with 2 – 1Gb NICs from the server to the switch and 2 – 1Gb ports per SAN controller to the switch. Because we are just using the VMware native multipathing and not EMC PowerPath, we will basically be limited to 2Gb of throughput (theoretical).
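To put that 2Gb ceiling into the MB/s numbers that IOmeter reports, here is a minimal sketch of the conversion. It assumes decimal units and ignores TCP/IP and iSCSI overhead, so real-world results will come in lower; the function name is just something I made up for illustration.

```python
def link_throughput_mb_s(links: int, gbit_per_link: float) -> float:
    """Theoretical aggregate throughput in MB/s (decimal units).

    Ignores protocol overhead, so treat the result as an upper bound.
    """
    bits_per_second = links * gbit_per_link * 1_000_000_000
    return bits_per_second / 8 / 1_000_000


# Two 1Gb NICs from the server to the switch, as in the lab setup above.
iscsi_ceiling = link_throughput_mb_s(links=2, gbit_per_link=1.0)
print(f"2 x 1Gb iSCSI: {iscsi_ceiling:.0f} MB/s theoretical")
```

So anything near 250 MB/s on a sequential test means the network, not the disks, is probably the limit.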

So first up, let’s see what’s in the AX4:

| Part number | Description | Price |
| --- | --- | --- |
| AX4-5I | 2U Dual SP DPE ISCSI Front End W 1U SPS | 7185 |
| V-AX4530015K | 4 – 300 GB 15K 3GB SAS Disk Drive | 2670 |
| AX-SS15-600 | Qty 6 – 600GB 15K 3GB SAS Disk Drive | 7620 |
| AX4-5SPS | Second SPS Optional | 690 |
| AX4-5CTO | Factory Config Services AX4-5 DPE / DAE | 25 |
| Total | | 18190 |

For those of us who don’t speak distribution… we have:

- 2U shelf which has dual iSCSI controllers and a 1U standby power supply

- 4 – 300GB 15k rpm SAS drives for the “Vault”

- 6 – 600GB 15k rpm SAS drives

- additional standby power supply module (this means one for each controller)

- Factory configuration (i.e., they put the drives in the shelf and load the OS)

Setup is pretty much identical to the P2000: give it some management IP addresses, then give it some iSCSI host addresses, then configure a virtual disk and a logical volume, and present it to the initiators. Clearly a nutshell how-to, but this is a performance post, not a setup post.

Compared to the P2000, the only two differences for our testing purposes are that the EMC has 15k drives instead of 10k, and they are 600GB instead of 146GB. Other than that I have tried to keep this as close as possible.

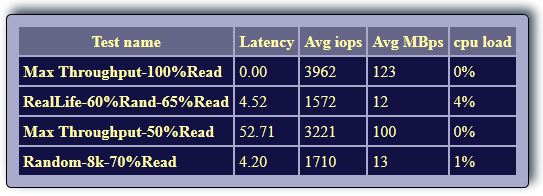

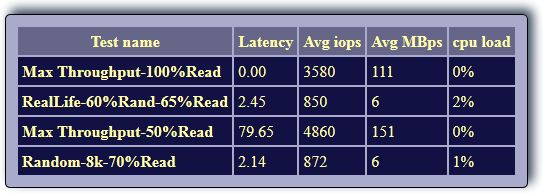

I have based this testing on the IOmeter configuration files on VMKTree. Here are the results for the EMC:

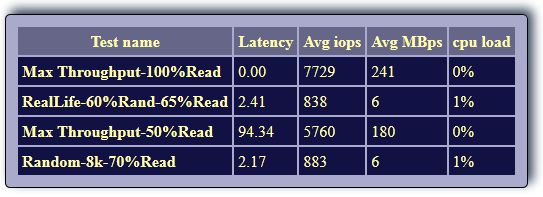

And just for fun I took a look at what the Fiber Channel interface could provide, even though I only have a 4Gb HBA:

To make things really easy to see I used my advanced Excel skills and made some bar graphs.

To perform these tests I created a Windows 2008 R2 virtual machine on my ML370 G5 server and installed IOmeter on it. Then I tested the first SAN, Storage vMotioned the virtual machine to the other SAN, let it settle for a little while, and ran the same test again on that SAN.

My conclusion is that it would be pretty hard to decide which way to go. I think that if the HP P2000 G3 had 600GB 15k RPM drives it would be faster in every category tested, but as configured it is still right on par with the EMC, except when using Fiber Channel, in which case the P2000 wins (no surprise though, because of how much superior FC is). If I were evaluating both of these SANs to meet a basic iSCSI storage need for my business, I think that I would buy strictly based on price and things like warranty coverage and whatever other stuff I could squeeze out of my sales guy.

Two other things that I would consider are 1.) how many ESXi hosts do I have (if fewer than 4, why not go with the P2000 G3 SAS and save some $$$), and 2.) what kind of deals will EMC offer on the VNXe series that just came out? My only reservation on the VNXe is that the P2000 and Clariion are simple iSCSI block storage… that’s all they do… and they both do it well… so do I really want to add in NAS features?

Just my 2 cents.



Justin,

Depending on what you’re going to put on top of your storage, in a VMware environment with multiple VMs accessing the storage at the same time, the EMC SAN actually won here (for most use cases).

Random IOs are much more important than sequential IOs. You will only see something close to sequential 100% read during backup and 50% read during large file copies within the same system.

For other types of load, and especially on database servers such as Exchange and SQL, the access will be of a random type. With multiple VMs and multiple ESX hosts accessing the storage at the same time, the access will be random to the storage system even if you don’t run database servers.

Lars

Lars, Thanks for the great IOmeter profiles!

Also I agree that IOps are the part to be looking at. One other factor that would push me toward the EMC is the VAAI support that all EMC SANs offer. I don’t believe that is out yet for the P2000.

What about IBM’s DS3500 product? Seems like that would certainly be a contender in this space? Anyone have real world comparisons?

Justin, thanks for all of the great info about the P2000s in this and other posts. We are probably getting ready to buy a P2000 and three DL360s to run the vSphere Essentials Plus kit. I had specced out the 8Gb fiber model, but after reading this article I’m wondering now if SAS would be just as fast and more cost effective. We currently have no existing SAN or fiber infrastructure and the only things that will be connected to the SAN are my 3 VMware servers and a backup server. Would SAS be a better option for me than 8Gb fiber?

If you plan to expand beyond those 3 hosts within the next 5 years then No, Fiber Channel would be a better option. Also if you plan to later upgrade to an EVA, or an EMC or something else that is fiber channel you could hook the P2000 into that infrastructure.

How many VM’s do you have? Do you see an explosive growth in the next 5 years ?

Also if you are willing to have a non-redundant path to the SAN then you could support up to 8 hosts on the P2000 SAS… so as long as you think you could get by for 5 years on 3 hosts with redundant or 7 hosts with non-redundant paths then the SAS will save you money and is much simpler to set up. But if you have the cash for FC switches and stuff then you will have more options in the future.

For the last 3 years we have had just a standalone DL380 VMware ESX server with about 20 non business critical VMs. Now the goal is to buy two new DL360s, upgrade the ESX on the DL380, run the Essentials Plus Kit that is limited to 3 physical servers, and virtualize the business critical servers (Exchange, CRM, Intranet, and accounting). All of those services are currently running on ML350 G3s or G4s with local storage, so I would think that moving them to a VMware cluster with 32 cores and over 100GB of RAM would be fine. I definitely do want to keep the redundant connections to the SAN. Given the fact that we’ve been able to get by with our enterprise services running on servers that are 8 years old, I definitely see this equipment serving our needs for the next 5, unless we test VDI and that really takes off, but if that turns out to be the case I may want a separate P2000 down the road for VDI anyway.

From a performance standpoint is SAS as good as fiber? Is it any more or less work or trouble when considering what I will use it for?

BTW, if I can save close to $10k from not having to buy a fiber switch and 4 fiber HBA’s I may be able to buy Veeam. Otherwise we’ll have to try to make do with BackupExec and the APIs built into VMware. This is how we’ve been backing up our standalone ESX server for the last three years 🙁

There is really nothing to configure with SAS… so it’s a lot less work IMO. There may be a little better performance with Fiber Channel, but you won’t see it until you get up into the 300-400MB/s area… which will require a lot of disks… chances are the number of spindles or the controllers will be the bottleneck before the fabric.

Sounds to me like you need to go SAS then! LOL

The Fiber HBAs alone will be enough to offset the cost of Veeam!

Justin, thank you for all of your information. It really helped me make my purchasing decisions. Earlier this week we purchased a P2000 G3 SAS, a couple DL360s, VMware Essentials Plus, Veeam Essentials Plus, and some other items. The P2000 will have (12) 450GB 15K LFF drives in it. We actually need about 1.5TB of usable storage and our growth is fairly slow (probably about 500GB or less in the next 2 yrs). How would you recommend we set up the storage? RAID levels, number of vdisks, size of LUNs, etc? Our largest VM will be around 300GB (Exchange), but most will be significantly smaller. We will also have a couple versions of MS SQL. The biggest DB is probably about 30GB.

Honestly I’m usually not a big fan of blogs, but yours has been EXTREMELY valuable to me, and I can’t thank you enough for all of your “real world” (not sales hype) information.

Oh, one more question… I will have 4 servers hooked up to the P2000. 3 VMware servers and one backup server. I have the dual SAS controllers and will hook up each server to each controller. Can I also use redundant HBAs in my servers or do I have to hook up each controller back to a single HBA in each server?

If you only need 1.5TB… and only estimate 500GB of growth here is what I would do.

Take 1 drive and designate it as a global hot spare

Take 4 drives and create a vdisk for a RAID 10 logical volume (for databases or anything High IOps)

Take 7 drives and create a vdisk for RAID 5, then inside of that vdisk I would create 3 logical volumes of about 900GB each to present to VMware

So you would have 4 datastores total

1 – RAID 10 at about 850-900GB

3 – RAID 5 at about 900GB each (about 2.7TB total)
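A quick way to sanity-check a layout like this is to work out the usable capacity of each vdisk before carving out logical volumes. The sketch below is only back-of-the-envelope math in decimal GB; it ignores formatting and metadata overhead, and the function and variable names are made up for illustration. Note that a 7-drive RAID 5 of 450GB drives comes to about 2.7TB, so the logical volumes inside it need to add up to no more than that.

```python
def usable_gb(level: str, drives: int, drive_gb: int) -> int:
    """Usable capacity in decimal GB, before any formatting overhead."""
    if level == "raid10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        return drives // 2 * drive_gb      # half the drives hold mirror copies
    if level == "raid5":
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return (drives - 1) * drive_gb     # one drive's worth of parity
    raise ValueError(f"unsupported RAID level: {level}")


DRIVE_GB = 450                             # the 450GB 15K LFF drives from the question
layout = {"raid10": 4, "raid5": 7}         # drives per vdisk
hot_spares = 1
assert sum(layout.values()) + hot_spares == 12   # all 12 bays accounted for

for level, count in layout.items():
    print(f"{level}: {count} drives -> {usable_gb(level, count, DRIVE_GB)} GB usable")
```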

As far as hooking your HBAs up, I would run one cable to each of the HBAs. You won’t be able to do round robin multipathing, but that should not be an issue as you will still have 6Gbps to the SAN! LOL

I’m working with a customer right now that has this exact same setup and I’m pushing over 240MB/s from the SAN to a backup server and another ESX host… they are strong boxes 🙂

Hi,

We are looking at the exact same setup, also having 15-20 VMs; the biggest SQL DB is around 130GB. I was thinking of making a full-shelf RAID50 for 4.5TB of usable space and keeping 2 disks to pop in, in case of failure. That way the array would be bigger and serve VMs better, however I’m not sure how it will behave with totally random workloads compared to several RAID groups.

The VNXe series is limited on the number of drives in certain types of RAID configurations. Just make sure to verify with your VAR that what you want to do is supported before you buy. Also for your database you would be better off doing RAID10 volumes in most cases, as RAID50 will have a lot more write overhead.

Your best bet would be to create several raid groups each serving separate datastores so that workloads are fairly separated.
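To make the write overhead point a bit more concrete, here is a rough sketch using the commonly quoted RAID write penalties (2 back-end IOs per write for RAID 10, 4 for RAID 5/50). The 175 IOPS per 15k drive and the 70% read mix are rule-of-thumb assumptions, not measurements from either SAN, and controller cache is ignored entirely.

```python
# Back-end disk IOs generated by one front-end write (common rules of thumb).
WRITE_PENALTY = {"raid10": 2, "raid5": 4, "raid50": 4}


def effective_iops(raw_iops: float, read_fraction: float, level: str) -> float:
    """Estimate front-end IOPS for a given read/write mix and RAID level."""
    write_fraction = 1 - read_fraction
    penalty = WRITE_PENALTY[level]
    return raw_iops / (read_fraction + write_fraction * penalty)


ASSUMED_IOPS_PER_DRIVE = 175               # assumption for a 15k SAS drive
raw = 12 * ASSUMED_IOPS_PER_DRIVE          # all 12 spindles working

for level in ("raid10", "raid50"):
    estimate = effective_iops(raw, read_fraction=0.70, level=level)
    print(f"{level}: roughly {estimate:.0f} IOPS at 70% read")
```

The more write-heavy the workload, the bigger the gap gets, which is why the database volumes are the ones worth putting on RAID 10.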

Oh, I forgot to specify that I was talking about the HP P2000 G3 SAS with 3.5″ disks and doing RAID50 across 12 disks
