This is going to be the first post in a series on how I am building and testing my vCloud. I will be using vSphere 5 and will opt for the Linux vCenter appliance as well as ESXi 5.0 and the latest beta versions of vShield and vCloud Director.

Hardware

Let's first talk about the hardware setup that I will be using, along with the general layout template that I will try to follow. VMware has published a document on how to build a public multi-tenant vCloud here; this document will be the roadmap for my test lab.

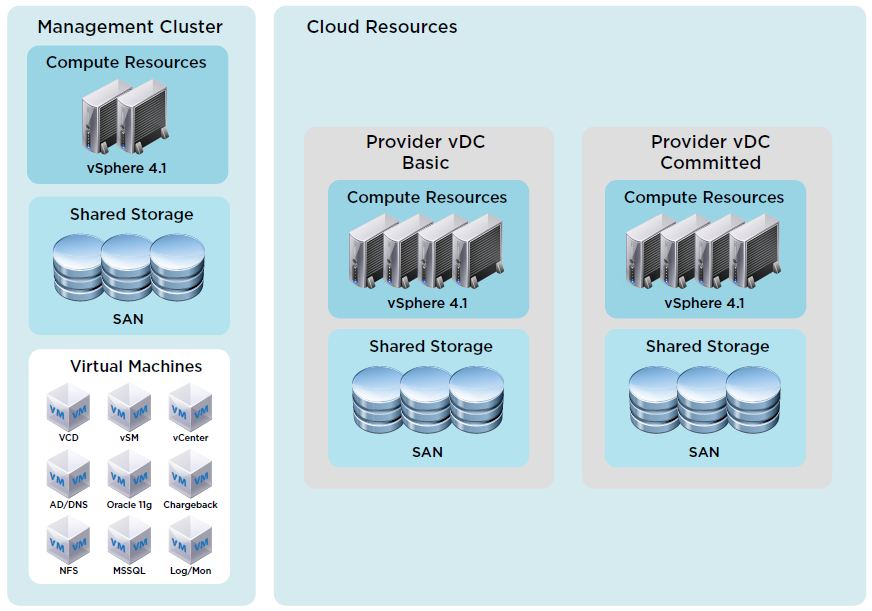

Here is the diagram from the above-listed document that explains the main hardware overview:

There are three major groups of hardware in the diagram; however, my tests will only cover the “Management Cluster” and the “Provider vDC Basic”. Since this is just a test, I won’t be using two Provider vDCs. Also, for the management cluster I will be using just one ESXi 5.0 host instead of two, because I don’t need HA at this time. My “Provider vDC” will consist of at least two DL385 G2 servers with Fibre Channel storage to my MSA1000 SAN. Obviously, none of this hardware is going to be screaming fast, but it will work great for what I want to test.

So here is what we will have:

Intel Xeon-based ESXi 5.0 Host for Management (local storage):

- vCenter Appliance

- vShield Manager

- vCloud Director

- Chargeback Server

- Oracle 10g XE DB Server

HP DL385 G2 Cluster (at least 2 hosts):

- ESXi 5.0

- vDistributed Switch (Customer VLANs)

- Standard Switch (management)

- HA, DRS

- Fibre Channel Shared Storage
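To make the layout above concrete, here is a small, hypothetical Python model of the two clusters. The host names and the `Cluster`/`check` helpers are illustrative assumptions, not part of any VMware tool. The only rule it encodes is that HA and DRS need at least two hosts to do anything useful, which is why the single-host management cluster leaves them off.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    name: str
    hosts: list[str]
    vms: list[str] = field(default_factory=list)
    features: set[str] = field(default_factory=set)

    def check(self) -> list[str]:
        """Return warnings for obvious layout problems (hypothetical rule set)."""
        warnings = []
        # HA restarts VMs on a surviving host, so it needs at least two hosts.
        if "HA" in self.features and len(self.hosts) < 2:
            warnings.append(f"{self.name}: HA enabled with fewer than 2 hosts")
        # DRS has nowhere to migrate VMs to when there is only one host.
        if "DRS" in self.features and len(self.hosts) < 2:
            warnings.append(f"{self.name}: DRS enabled with fewer than 2 hosts")
        return warnings


# Single ESXi 5.0 host with local storage; no HA/DRS on purpose.
management = Cluster(
    name="Management",
    hosts=["esxi-mgmt-01"],  # hypothetical host name
    vms=["vCenter Appliance", "vShield Manager", "vCloud Director",
         "Chargeback Server", "Oracle 10g XE DB Server"],
)

# "At least 2" DL385 G2 hosts on shared Fibre Channel storage.
provider_vdc = Cluster(
    name="Provider vDC",
    hosts=["dl385-01", "dl385-02"],  # hypothetical host names
    features={"HA", "DRS"},
)

for cluster in (management, provider_vdc):
    for warning in cluster.check():
        print(warning)
```

Moving to a redundant management cluster later, as the VMware document recommends, would amount to adding a second entry to `hosts` and enabling `HA` in this model.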


Very interesting concept! Would this demo lab be powerful enough to host 3-4 undemanding customers as a “proof of concept” exercise?

The way I see it, if you can build this and then advertise it as a vCloud-powered offering, you could buy more servers for the vDC and also move from one management host to a redundant management cluster (as in the original doc).

Were you happy with the results of your test?

Also, how would you calculate what WAN bandwidth to start with? Maybe 20MB/sec would be a good start?

What do you mean? Do you mean how much bandwidth you would need to be a provider, or something else?

Yes, I was happy with the results. As a matter of fact, if you go over to http://tsmith.co, his blog is actually hosted on our vCloud lab. I have also done Exchange and other demos on it. It is connected to the Internet via a multihomed pipe of more than 100Mbps, so it works very nicely.

Yes, I mean: could someone start with a 10Mbit dedicated WAN connection, or would more bandwidth be required? When renting vDCs to customers, what kind of bandwidth would they expect? I haven’t found any guidelines about this in the VMware toolkit! Thanks again!
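The thread mixes megabytes per second ("20MB/sec") with megabits per second ("10Mbit", "100Mbps", "1Gbps"), and it is not clear which unit was intended for the 20 figure. This minimal sketch only shows the 8-bits-per-byte conversion between the two for the numbers quoted here; it ignores protocol overhead, and the helper names are hypothetical.

```python
def mbit_to_mbyte(mbit_per_s: float) -> float:
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbit_per_s / 8


def mbyte_to_mbit(mbyte_per_s: float) -> float:
    """Convert megabytes per second to megabits per second."""
    return mbyte_per_s * 8


# Link speeds mentioned in this thread, in Mbit/s.
links = [("10Mbit dedicated WAN", 10), ("multihomed pipe", 100), ("bumped to 1Gbps", 1000)]
for label, mbit in links:
    print(f"{label}: {mbit} Mbit/s = {mbit_to_mbyte(mbit):.2f} MB/s")

# If "20MB/sec" really means megabytes, it is a much bigger pipe than 10Mbit.
print(f"20 MB/s = {mbyte_to_mbit(20):.0f} Mbit/s")
```

In other words, a 10Mbit link moves about 1.25MB per second, while 20MB/sec would need a link of roughly 160Mbit.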

Wow, the blog you linked loads very fast indeed.

Last question: would you install Veeam for your vCloud in a VM, or on a physical host directly attached to your SAN as described in your blog post?

It really depends on the situation. Normally, for a customer setup, I like the virtual appliance model these days. But for something like vCloud I would probably go with a SAN-attached host, because in my situation as a service provider we have to pay for any VM we turn on.

It really just depends on who you're selling the service to and what they plan to do with it. If it were me, I would just make sure to do good discovery with potential clients and have a really good relationship with your upstream ISP. At SMS we know them well enough that, if needed, we could call and have our speed bumped up to 1Gbps within hours.

Justin, can you provide some additional details on the hardware that you use for the ESXi 5.0 host in the Management Cluster? Can you tell me the CPU series (e.g. Xeon 5400), the number of cores, and the amount of memory? Can you also tell me how much memory you are using in the HP servers? Word on the street is that it takes about 20GB of memory for a vCD home lab.

My home lab currently has four HP G5-series servers with Intel 5300- or 5400-series processors; the largest one has 48GB of RAM and the smallest has 10GB. But for a functional vCloud home lab you will need a decent amount of RAM.

The default or recommended settings will probably add up to more than 20GB:

- vCenter Server: for production I used 12-16GB on 5.1 with the web interface installed

- vShield: 8GB by default

- vCloud Director: 2GB

- SQL Server: 16GB for production, though you can get away with 4GB

At a minimum, I would say you need about 12-14GB.

The vCloud install I am currently running is using about 20GB for vCloud Director, SQL Server, vCenter, and vShield.
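A quick way to sanity-check these numbers is to add up the per-VM figures quoted in the reply. This is a minimal sketch, assuming the ranges below: the SQL Server low end uses the 4GB "can get away with" figure, and hypervisor overhead plus the Chargeback and Oracle VMs are not included. The host sizes are the largest and smallest lab servers mentioned above.

```python
# Per-VM memory figures (GB) quoted in the reply, as (low, high) ranges.
MEMORY_GB = {
    "vCenter Server (5.1 with web interface)": (12, 16),
    "vShield": (8, 8),
    "vCloud Director": (2, 2),
    "SQL Server": (4, 16),  # 4GB lab minimum, 16GB for production
}

LARGEST_HOST_GB = 48  # largest HP G5 server in the home lab
SMALLEST_HOST_GB = 10  # smallest HP G5 server in the home lab


def total_range(sizes: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low and high ends of each VM's memory range separately."""
    low = sum(lo for lo, _ in sizes.values())
    high = sum(hi for _, hi in sizes.values())
    return low, high


low, high = total_range(MEMORY_GB)
print(f"Management VMs: roughly {low}-{high}GB")
print("Worst case fits on the largest host:", high <= LARGEST_HOST_GB)
print("Best case fits on the smallest host:", low <= SMALLEST_HOST_GB)
```

With these figures the total lands well above 20GB, which is consistent with the point that default or recommended settings exceed the "word on the street" estimate.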
