Over the weekend I was asked to determine why all virtual machines on a cluster were shutdown at 9:45AM on Sunday. There had been no storms, and the datacenter where this cluster is located has good cooling and great power, so there was really no reason that the VM’s should have went down.

After I logged into vCenter the first thing I noticed was that the two hosts in this cluster had red alert icons beside of them.

So the second thing I did was navigate over to the Alarms tab of one of the hosts, inside of there I found two alerts, the first was “Network uplink redundancy lost” and the other was “Network connectivity lost”.

Let me back up for a second and explain the three most common ways a virtual machine ends up in a powered off state.

- A user or script initiates a power off command

- A host server shuts down or fails unexpectedly and VM’s are not set to automatically restart.

- HA powers off the virtual machine because of host isolation.

Since this is a production environment, and because myself and only a few other people have access to this cluster, I am going to rule out the first option, The second option would have been my first guess normally but there are indicators that disagree with this theory. For starters if an ESXi host unexpectedly powers off there is no time for it to detect that it lost network redundancy or connectivity… it just goes down. And the even bigger indicator is that both hosts were showing 37 days of uptime on the cluster “Hosts” tab.(see image above)

I think that pretty much rules out the second option too, so now I’m left with Host Isolation. Let me take a second to give Duncan and Frank’s “vSphere 4.1: HA and DRS Technical Deepdive” book a plug… if you really want to know how HA and DRS work… pick up a copy. Anyhow the short version is that when HA is enabled it asks you what you want done to virtual machines running on hosts that become isolated. This means that if a host can no longer talk to other hosts in the cluster for any reason (be it that the host lost a network card, or a switch or whatever) what do you want it to do with the vm’s that are running on it? Well the idea behind HA is that if you lose a host we want those VM’s powered back up on other hosts right ? So if a host becomes isolated, by default, it shutdowns down vm’s running on it, so that when the other hosts in your cluster determine that its down… they can restart them.

In this situation both of the ESXi servers in this cluster were using a single switch while some datacenter migrations take place, and it just so happens that the switch went down. In turn, VMware HA kicked in on both hosts and both hosts determined that they were isolated… which means they powered off all of the virtual machines. Then when the switch came back online none of the virtual machines were powered back on because the hosts were unable to find vCenter and were unable to get an update on what was going on. (because vCenter was a virtual machine that was powered down)

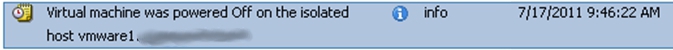

To confirm this theory I checked the “Events” section on a virtual machine and found this event which confirmed my theory:

The moral of this story is to make sure that you have at least two switches in your environment. Even if the second switch is just a dumb, unmanaged switch… get it hooked to a network card on each of your hosts and configure just management traffic to run across them. This will help to prevent things like this from happening. In the future vSphere 5 will utilize a “heartbeat datastore” to further reduce false positives and increase uptime.

![]()