I’ve done several posts on how to design a cost-effective SMB cluster, but recently with the discovery of the HP P2000 G3 SAS SAN it has gotten MUCH more cost effective. While the HP SAS SAN is not entirely new, the updated bundles and features provided with the G3 model have really made it a viable option.

If you haven’t already seen my other articles I would encourage you to add these to your reading list. If you browse through them you will see the general design and the price point, which will help you understand the added simplicity and lower price point of the design presented in this article.

http://jpaul.me/?p=402 –Recipe for SMB Cluster’s (version 1.0)

http://jpaul.me/?p=869 — HP P2000/MSA G3: A First Look for SMB’s

In the first article I presented a design that utilized an iSCSI version of the P2000 SAN. While it is still a great SAN, it requires you to have two switches for redundancy. Depending on the switches you get, this can add a significant amount of money to the bill. It also means that you need to configure separate VLANs for iSCSI traffic and rely on more devices that could fail.

Enter HP P2000 G3 SAS… it will be the key to version 2.0 of the SMB Cluster Design.

This article is going to be a little different from the first version though… I’m not going to go through the year by year building process, and I’m not going to lay out all of the costs or the software required. This article is strictly about the hardware, and how it’s going to save you money over the version 1.0 article. So to get started go read that first link, then come back to this one.

One of the largest costs involved with the version 1.0 of the SMB cluster design is the network side… meaning the Cisco switches. You could have cut them out in favor of something cheaper, but you would have probably created a single point of failure on the iSCSI side of things. With the SAS version of the P2000 we no longer need network connectivity for iSCSI because our hosts are directly connected to each of the SAS controllers. The downside is that we only have 8 SAS ports, and if you want redundancy then you can only connect up to four hosts. Since we are talking SMB, we will assume you will have no more than 3 ESXi servers because the VMware SMB packages only allow licensing for 6 processors anyhow.
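The port math above is simple enough to sketch out. This is just an illustration using the 8 port figure from this article; the function names are made up for the example, and the default of two paths per ESXi host is my assumption of "one path to each controller".

```python
def max_hosts(total_ports: int, paths_per_host: int) -> int:
    """How many machines can be direct-attached with the given paths per machine."""
    if paths_per_host < 1:
        raise ValueError("each host needs at least one path")
    return total_ports // paths_per_host


def ports_needed(esxi_hosts: int, backup_paths: int, paths_per_host: int = 2) -> int:
    """SAS ports consumed by the ESXi hosts plus one backup server."""
    return esxi_hosts * paths_per_host + backup_paths


TOTAL_SAS_PORTS = 8  # port count quoted in the article

# Redundant attach: one path to each controller per host.
print(max_hosts(TOTAL_SAS_PORTS, paths_per_host=2))
# Three ESXi hosts plus a backup server with a single path.
print(ports_needed(esxi_hosts=3, backup_paths=1))
# Same, but with the backup server made redundant too.
print(ports_needed(esxi_hosts=3, backup_paths=2))
```

With three redundant hosts and a redundant backup server you land exactly on the port limit, which is why three ESXi hosts is a comfortable ceiling for this design.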

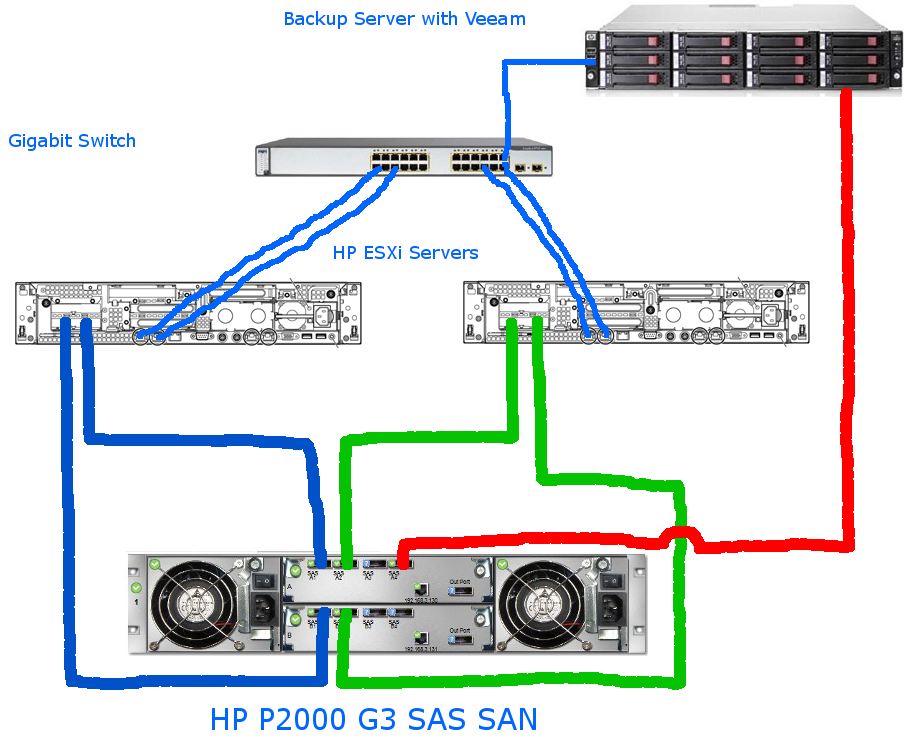

So let’s take a look at what our infrastructure needs to look like: (excuse the drawing… it seems as though Visio isn’t on my laptop right now)

This drawing basically shows how the SAN connects to both your ESX servers and your Veeam Backup server. Everything is SAS; the only reason we have Ethernet is for management of the SAN and other normal network traffic from servers to clients. This setup still allows you to do “Direct Attached” backups with Veeam too.

Data transfer rates are MUCH faster than with iSCSI, on the order of 300-400 MBps, and Direct SAN backups run at about the same rate… the only thing that slows them down is the CPU bottleneck in the Veeam server. If you were to turn off compression you would most likely get the same rate.

While the picture shows a Cisco 3750 switch, you could easily use any gigabit switch… and you really only “have” to have one. Yes, it is a single point of failure, but if it’s only programmed as a simple L2 switch you could just keep a cold standby in case of a failure.

So does it support HA and DRS?

Yes. The SAS SAN fully supports all of the features of VMware. Right now the P2000 line does not include VAAI support, but I’m told they are working on the code, and I’m hoping to get to test it out! (I’ll be sure to blog about it if I get the chance to test it)

To the VMware servers the HBAs show up just like a Fibre Channel HBA would, and they list the controllers and any LUNs that you have presented to them.

So what’s the bill?

Well, the SAS SAN comes in a few thousand dollars cheaper than the iSCSI SAN. In the first article I used $20k as a generic amount for the SAN, and I think I based that on a couple of TB of usable space… with the SAS P2000 G3 you can get the SAN AND 24 x 300 GB 10k SAS drives bundled for $18k (MSRP)! That’s 7.2 TB of RAW space!
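For anyone who wants to check the capacity figure, here is a quick sketch. The raw number comes straight from the bundle described above; the usable numbers are only rough, since they assume a single RAID group with no hot spares and ignore formatting overhead. The function names are just for this example.

```python
def raw_tb(drives: int, drive_gb: int) -> float:
    """Raw capacity in decimal TB (1 TB = 1000 GB)."""
    return drives * drive_gb / 1000


def usable_tb(drives: int, drive_gb: int, raid: str) -> float:
    """Rough usable capacity for one RAID group (no spares, no overhead)."""
    if raid == "raid10":
        data_drives = drives // 2   # half the drives hold mirror copies
    elif raid == "raid5":
        data_drives = drives - 1    # one drive's worth of parity
    elif raid == "raid6":
        data_drives = drives - 2    # two drives' worth of parity
    else:
        raise ValueError(f"unknown RAID level: {raid}")
    return data_drives * drive_gb / 1000


print(raw_tb(24, 300))
for level in ("raid10", "raid5", "raid6"):
    print(level, usable_tb(24, 300, level))
```

In practice you would split the drives into more than one group and keep a spare or two, so real usable space will come in lower than these numbers.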

You will also need HBAs since we aren’t using Ethernet, but they are only $200 per card. SAS cables are about 120 bucks each as well… and you’ll need two per server and one for the Veeam server (two if you want it to be redundant too). So while there are some added costs to go with SAS on the connection side, the savings are high enough that they not only offset the cost of the cables and HBAs, they still bring the total price in lower than with an iSCSI SAN.
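To put a rough number on the connection side, here is a small sketch using the prices quoted above. It assumes one HBA per machine (that is my assumption, on the basis that a single card can carry both cables), and the function name is made up for the example.

```python
HBA_PRICE = 200    # per card, from the article
CABLE_PRICE = 120  # per SAS cable, approximate, from the article


def sas_connectivity_cost(esxi_hosts: int, redundant_backup: bool = False) -> int:
    """Estimated HBA + cable cost for the ESXi hosts plus one Veeam server."""
    machines = esxi_hosts + 1                       # hosts plus the backup server
    hbas = machines                                 # assumption: one HBA per machine
    cables = esxi_hosts * 2 + (2 if redundant_backup else 1)
    return hbas * HBA_PRICE + cables * CABLE_PRICE


print(sas_connectivity_cost(3))                         # single-path Veeam server
print(sas_connectivity_cost(3, redundant_backup=True))  # redundant Veeam server
```

Either way the extra gear stays under two grand for a three host cluster, which is less than the difference between the $18k bundle and the $20k I used for the iSCSI SAN.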

If you want to compare it to the Fibre Channel SAN then you are almost in two different ballparks. The HBAs alone for 8Gb Fibre Channel are $1200 each. Then figure a couple grand for Fibre Channel switches (you’ll need two), plus fiber cables. In total you’re talking a lot more than the SAS SAN. So even if the Fibre Channel P2000 was the same cost as the SAS (which it’s not) you would still have an additional 10k (estimate) in all the additional Fibre Channel gear you need to make it work.

As for the Veeam Backup server, there are a lot of tricks you can do with an HP server to bring the price of “big” storage for the disk to disk backup down. Maybe one of these days I will do a post on how we do it.

Justin –

You are the man. This is exactly what I am looking to implement. For some reason though I keep coming back to the VNXe. Completely different (iSCSI vs SAS), but similar target markets. Are there any advantages one way or the other?

Would love to see that Veeam/HP backup server article.

Well, with the VNXe iSCSI you can utilize existing gigabit infrastructure… you can also connect it to more than 4 hosts. The downside is the same thing that makes it flexible… Ethernet. You are still only going to have 1Gbps pipes unless you go out and buy PowerPath.

With the HP SAS SAN you are going to get 6Gbps on each pipe, and you are not required to have a gigabit switch. You also don’t have to worry about setting up jumbo frames on your VMware servers, switch, and SAN, or about getting a switch that can handle the packet load for iSCSI traffic… basically there are a lot more variables, all of which can be overcome if you know what you’re doing, but for simplicity the SAS SAN is definitely the way to go.
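If it helps to see the two pipes side by side, here is a back-of-the-envelope conversion from link speed to a payload ceiling. It treats each pipe as a single link at the nominal rate mentioned in this thread, applies 8b/10b encoding to the 6Gbps SAS figure, and takes the 1Gbps Ethernet figure as already being the data rate. It ignores protocol overhead (SCSI, TCP/IP, iSCSI headers), so real numbers will be lower. The function name is just for the example.

```python
def payload_mb_per_s(line_gbps: float, data_bits: int = 1, line_bits: int = 1) -> float:
    """Upper bound on payload in MB/s for a link, before protocol overhead.

    data_bits/line_bits is the encoding efficiency, e.g. 8/10 for 8b/10b.
    """
    return line_gbps * 1000 * data_bits / line_bits / 8


sas_6g = payload_mb_per_s(6, data_bits=8, line_bits=10)  # 6Gbps SAS, 8b/10b encoded
gige = payload_mb_per_s(1)                               # 1Gbps Ethernet data rate

print(f"SAS 6Gbps ceiling:  {sas_6g:.0f} MB/s")
print(f"GigE 1Gbps ceiling: {gige:.0f} MB/s")
print(f"Ratio: {sas_6g / gige:.1f}x")
```

The 300-400 MBps I mentioned in the article sits comfortably under the SAS ceiling and well above what a single gigabit pipe could ever deliver.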

Good news. I have a VNXe at the office right now, so there should be a review coming soon

Hi Justin,

First, thanks for posting these great articles! They are very helpful and insightful!

My question is with regards to the P2000 G3 SAS SAN. Hooking it up to a bunch of HP Proliant DL360 G7 Servers, what SAS controller would I use in the DL360 G7’s? Any suggestions? I want to of course use this solution for SMB customers running VMWare ESXi and have the benefits of HA, vMotion, etc…

Thanks, let me know when you get a chance to reply.

Deepak

You want this one right here : http://h18000.www1.hp.com/products/servers/proliantstorage/adapters/sc08e/index.html

Also, there is a specific driver for the SAS cards for ESX and ESXi: http://downloads.vmware.com/d/details/esx4_lsi_mpt2sas_dt/ZHcqYnRlcGpidGR3

let me know if you have issues.

Hello Justin, great website. I wanted to ask if your setup would allow offsite replication of the SAN to another SAN in a DR site across a private WAN. Perhaps using Microsoft Data Protection Manager?

Well, if you’re going to use the P2000 G3 SAN you could use it to replicate your LUNs. Or you could use Veeam to replicate your virtual machines in a more consistent state. I don’t know anything about Microsoft Data Protection Manager, so I cannot comment on it. There are lots of options though.

Thank you for the response! I also wanted to ask if the P2000 G3 can do tiered data? Can I mix 15K RPM SAS drives with 10K RPM SAS and 15K SATA drives and have the SAN do smart storage? If not, can you make a suggestion on which HP SAN can do this?

Hello,

did you try this ?

HP P2000 Software Plug-in for VMware VAAI vSphere 4.1

http://h20000.www2.hp.com/bizsupport/TechSupport/SoftwareDescription.jsp?lang=en&cc=us&swLang=8&mode=2&taskId=135&swItem=MTX-d1d3b1c87a754e5b89ac0c69a9

Marc

I haven’t had a chance to update the SAN that I have access to, and normally customers do not have us come in and keep all the firmware up to date. I will shoot you an email and let you know how it goes, but this is a pretty awesome feature to have.

Hello guys! I am looking to implement a Document Management solution for 150 users. The hardware setup looks like this: two DL380 G7 servers (6-Core XSeries Xeon) and an HP P2000 storage solution with two SAS controllers, 4 ports each, just like the one at the top of the page. We’ll use ESXi on both servers, with 4 VMs on each DL380. The VMs are going to be stored on the HP P2000 storage. The problem is SAS bandwidth, because 2 VMs are going to use the HP P2000 intensely (SQL Server and Indexing Machine). I understand that I can benefit from one SAS connection per server and another one for redundancy (I hoped that I could assign a SAS port to a VM, but from your information this is not possible). All in all my question is the following: will my setup be enough for 150 users, or should I step up to a higher-end storage solution? Is the HP P2000 capable of giving us decent performance without bottlenecks?

Hello guys! Sorry for my last comment. Just approve this one if possible, I think it is easier to understand 🙂 I am looking to implement a Document Management solution for 150 users. The hardware setup looks like this: two DL380 G7 servers (6-Core XSeries Xeon) and an HP P2000 storage solution with two SAS controllers, 4 ports each, just like the one at the top of the page. We’ll use ESXi on both servers, with 4 VMs on each DL380. The VMs are going to be stored on the HP P2000 storage. The problem is SAS bandwidth, because 2 VMs are going to require FAST I/O (SQL Server and Indexing Machine from our Document Management solution). I understand that I can benefit from one SAS connection per server and another one for redundancy (I hoped that I could assign a SAS port to a VM, but I looked into it and this is not possible). All in all my question is the following: will my setup be enough for 150 users, or should I step up to a higher-end storage solution? Is the HP P2000 capable of giving us decent performance without bottlenecks?

Do you know the specs on the SQL server’s traffic? How many IOPS will it require? How large are the queries?

I would say that a single 6 Gbps SAS connection is your best bet until you are ready to go to Fibre Channel. Honestly, you will probably hit a bottleneck from the number of disk spindles you have before the SAS link becomes the bottleneck.
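To show why the spindles usually run out before the link does, here is a rough sketch using the common RAID write-penalty rule of thumb. Every input here is an assumption for illustration: roughly 130 IOPS per 10k drive, a 70/30 read/write mix, and 8 KB I/Os. None of it is measured on a P2000.

```python
# Back-end I/Os generated per front-end write (common rule of thumb).
WRITE_PENALTY = {"raid10": 2, "raid5": 4, "raid6": 6}


def frontend_iops(drives: int, iops_per_drive: float, raid: str, write_fraction: float) -> float:
    """Host-visible IOPS a RAID group can sustain for a given write mix."""
    raw = drives * iops_per_drive
    penalty = WRITE_PENALTY[raid]
    return raw / ((1 - write_fraction) + write_fraction * penalty)


def throughput_mb_per_s(iops: float, io_kb: float) -> float:
    """Throughput in MB/s for a given IOPS rate and I/O size."""
    return iops * io_kb / 1000


# Assumed inputs: 24 x 10k drives at ~130 IOPS each, 30% writes, 8 KB I/Os.
for level in ("raid10", "raid5"):
    iops = frontend_iops(24, 130, level, write_fraction=0.3)
    print(f"{level}: ~{iops:.0f} IOPS, ~{throughput_mb_per_s(iops, 8):.0f} MB/s")
```

With small random I/O like a database generates, the disks top out at a few tens of MB/s under these assumptions, nowhere near what a 6 Gbps SAS link can carry. The link only starts to matter for big sequential transfers like backups.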

Thanks for the answer, Justin. We don’t know for the moment how many IOps SQL will require. The DM software is HP Autonomy, and in our case, a law firm, there are a lot documents to process. So SQL and Indexing are gonna be our main players in this matter.

As for the HDD config, we will have 24 600GB, 10k rpm, with multiple RAID5 arrays. I didn’t quite understand what do you mean by disk spindles.

In your opinion, would it be better to install the VMs directly on the DL380s and use the P2000 only to store data? Of course, in this case we will lose the benefit of the HA feature from VMware. Have a nice day and thanks again for your answer.

I recently acquired similar hardware to virtualize an actual 30 servers datacenter. I have the following:

– 1x HP P2000 G3 SAS MSA Dual Controller SFF Array System, with 24x HP 146GB 6G SAS 10K

– 2x HP ProLiant DL580 G7 with HP SC08e 6Gb SAS HBA for ESXi servers.

– 1x HP ProLiant DL360 G7 for vCenter.

My problem? vMotion is not working. I would very much appreciate your advice on how to correctly map the volumes so I can create a cluster and use vMotion.

Thanks in advance!

I will shoot you an email so that you can reply with some screenshots of the config.

In this scenario, is that Veeam server running ESX/ESXi too? Or is it just Veeam running on Windows?

Also, having the Veeam server connected via SAS makes for fast backups, but what if you want to have 4 VM hosts? If you connected the Veeam server to the regular data LAN, would it still be able to do everything you’d want?

Thanks,

Rob

Rob,

Veeam can run as a standalone Windows host and take up one of your SAS ports, or you can run it as a virtual appliance. Virtual appliance mode has come a long way since Veeam 4 and I do have some customers that run it that way… works pretty well. The other option would be to get SAS switches from LSI… they make a 16 port switch. But honestly, I think if you need more than 3 hosts (with as powerful as a box is these days) then the P2000 might not have the horsepower to back it up.

Justin–

Thanks for the response. The environment I have in mind will be running about 20 VMs. Three beefy ESX hosts should be able to handle the load with redundancy no problem. They’ll be running another box with Veeam on it, and as long as there’s a free port on the controller I’m happy to get the performance benefit of backing up directly from the SAN. But I also would like to be able to say they can add a VM host down the road if they need to, and I want to make sure the Veeam backup can still work over the data network. Would it just be slower? Or would it not really work at all?

Justin,

We have been looking at the HP P2000, P4300 and the Dell PS6000 for vm solution. I have a loaner PS6000 that I have been playing with, but after reading this article and others online I think the P2000 will work just as well and I think we can get some pretty competitive pricing as we are getting another P2000 for a sql cluster setup. The one question I have is for the RAID on the P2000. If I was going to have 2-3 DL380 G7s with SAS connections to it, What is the recommended RAID setup for it? This is where I am not very familiar on the vm side. Do I do one big RAID10 with 22 drives, 2 hot spares. Then it is chunked up volumes inside that for each vm? do you have any info or links on the RAID/volume recommendations/setup? This is for our corp lan and would be about 10-20 vms across the servers. dc, file, test boxes, management tools, etc, nothing super powered. Thanks

I’m not really sure that there are recommendations as far as RAID setup, but let’s start out with the first thing you asked.

Basically on the P2000 you take drives and create a vdisk (which is a raid group) then you create Logical Volumes (or LUNs) out of that. Then the LUNs are actually what become datastores.

If you want to see the P2000 interface or how you do all that, just shoot me an email and we can do a WebEx.

Also, as far as how to create the vdisks (number of drives), there are pros and cons.

If you do all in one vdisk you get lots of IOps for all your datastores, but the downside is that if something is hammering the raid group …. all datastores will be affected.

On the other hand, if you create multiple vdisks and separate the spindle count then you would have two areas, and if one was getting hammered it would only affect that RAID group. On the downside, if you split the spindles up then each RAID group will only have half as many possible IOps as if they were all one big chunk.

Personally I would do two vdisks, but that’s just me.
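Here is a little sketch of that trade-off. It only counts spindles, so it ignores RAID write penalties and controller cache, and the 130 IOPS per drive figure is an assumed rule of thumb for 10k drives. The class and function names are made up for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VDisk:
    """A RAID group on the SAN; volumes (LUNs) are carved out of it."""
    name: str
    drives: int
    iops_per_drive: int = 130  # assumed rule of thumb for a 10k drive

    @property
    def iops_ceiling(self) -> int:
        """Raw spindle IOPS shared by every datastore on this vdisk."""
        return self.drives * self.iops_per_drive


def split_evenly(total_drives: int, groups: int) -> List[VDisk]:
    """Divide the drives into equally sized vdisks."""
    if groups < 1 or total_drives % groups:
        raise ValueError("drives must divide evenly into the groups")
    per_group = total_drives // groups
    return [VDisk(f"vd{i + 1:02d}", per_group) for i in range(groups)]


for layout in (split_evenly(24, 1), split_evenly(24, 2)):
    ceilings = [vd.iops_ceiling for vd in layout]
    print(f"{len(layout)} vdisk(s): ceiling per vdisk = {ceilings}")
```

The total is the same either way. What changes is how much any one busy datastore can pull, and how many other datastores it can drag down with it.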

Justin,

Thanks for the offer, but I think I am good for now. I have checked out some online vids on YouTube and other places, and the interface looks pretty straightforward to use.

One thing I did come across, can’t recall where, was a recommendation to keep as few volumes per vdisk as possible. But as you said, there are trade-offs both ways: either one busy workload doesn’t kill everything, or you get fewer IOPS.

I think now I am just going to research into the optimal vdisk/volume setup for my two different scenarios (vm’s and sql). I already have found some good sql ones in relation to the P2000 on hp site.

Thanks for your input. lots of great info here

Have you used this configuration in a Hyper-v environment? Any gotchas?

Ich…hyper-what? Lol

Honestly, until I get to see the next version I would never recommend Hyper-V for a production environment. But that’s just me…

Hello Justin and thank you for your blog !

On the ESX Hosts, do you need :

– Dell PERC H810 RAID Adapter for External JBOD, 1GB NV Cache 755 €

– or a simple SAS 6Gbps HBA External Controller 88 €

When you create a RAID 5 volume, is it directly on the P2000 or on the ESX host RAID card?

I would purchase the HP controller and just put it in the Dell box… that way you know it will work. The HP card is about $200 US.

I stumbled upon your blog yesterday and it seems to be the exact info I have been looking for. We are an SMB with about 100 employees locally and 400 globally. I am trying to make the jump to virtualized servers with SAN storage, and while I have quotes for everything, management is having a tough time with a ~$100k investment.

Our biggest factor right now is storage size since we have about 11TB of used space across all of our servers and ~70% of that is RAW images that cannot be compressed. Currently we only have a single small SQL server for our engineering group but I am moving our Sharepoint server here at the end of the month and we are planning for a large SQL server to unify our analysis groups in the next 6-9 months.

I am looking at, and have quotes for, 3 iSCSI SANs: an HP P4300, a P2000 and a Nimble solution. An “HP Architect” suggested the P2000 10Gb iSCSI option. I am consolidating our servers onto 2 DL380 G8s running VMware. One of the big costs that I have, and that you seem to eliminate to a degree, is the 2 switches at ~$15k.

Hi there,

This piece of documentation really is great, but I would still like to know if I could ask more questions about moving to a SAN with the P2000 G3 SAS.

Is there a way to contact you or mail you direct?

Thanks in advance,

Regards

Richard Werleman

ICT Department

Central Bureau of Statistics Aruba

I sent you an email.

Hey,

Until now, how have your experiences been? We’re looking into buying one of these units too.

What about direct connecting iSCSI ports from ESXi host into iSCSI ports on P2000 and not using ethernet switches? What is performance of that scenario of direct connect iSCSI vs. direct connect SAS?

@Glenn

We just deployed a new P2000 G3 SAS with two diskless DL160 hosts (each dual hex-core with 64GB RAM) and a DL380e backup server with local storage.

The P2000 has (8) 146G 15k drives in RAID 10 for the database and Exchange VMs, and (8) 600G 10k drives in RAID 10 for everything else.

Generally speaking… it flies. Backups are quick, too. We have 12 Windows VMs, including a couple of pretty high I/O databases. It’s been up a month now, with no complaints.

We can add another host without putting in SAS switches, which is great. We have eight free drive bays in the P2000, so we can easily add capacity there, too.

Rob

How does P2000 work with pooled storage compared to an EVA 4400?

Is the concept the same, in that it is one large pool? We are looking to buy 12x300GB drives for an SFF P2000. With the EVA 4400, we had always carved up a virtual disk and presented it to ESXi hosts. We noticed that performance dipped once we hit 75% of pool capacity, and then knew we needed to add more disk spindles for more performance and re-level the data.