Why?

There are countless SAN vendors whose products work very well for VMware shared storage, and many Linux distributions provide iSCSI and NFS options as well. So why use Windows Storage Server, you might ask? Maybe you aren’t comfortable with Linux, or maybe you don’t have the budget for a hardware SAN. Whatever the reason, let’s jump in and look at what Windows Storage Server has to offer as a shared storage platform.

- Windows Management Interface for GUI administration

- iSCSI (with MultiPathing)

- NFS

How.

Windows Storage Server is available for download from the MSDN site; otherwise it is only available from OEM providers. Because Windows installs are pretty much universal, I won’t go into detail on how to actually install Windows Storage Server. I will note, however, that if you install from the MSDN media like I did, you may wonder why you weren’t asked for a password. For some reason they decided to set a default password on WSS: wSS2008!

Some hardware vendors that offer solutions for those without an MSDN account include the following:

http://www.aberdeeninc.com/abcatg/AberNAS.htm

What’s Next

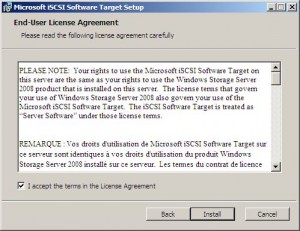

After you have a Windows Storage Server up and running you will need to get the iSCSI target tools CD. By default iSCSI support is not installed with the base operating system. To install the iSCSI target simply insert the CD and run the installer.

The installation is pretty simple: agree to the EULA and click Next a few times.

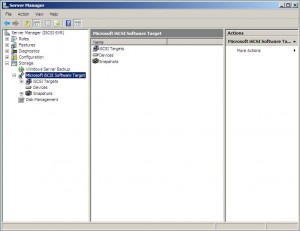

After installing, right-click “Computer” and choose Manage. Under Storage there is a new option that you won’t find in a normal Windows installation, called “Microsoft iSCSI Software Target”.

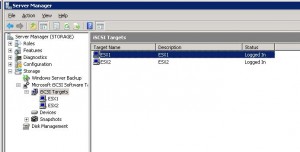

For those who aren’t familiar with iSCSI or SANs, here is a little background. In order for VMware (or any other iSCSI initiator, i.e. client) to see storage, you need a target (server). A target then presents one or more LUNs (virtual drives). So the first step is to create a new target by right-clicking “iSCSI Targets” and selecting “Create iSCSI Target”.

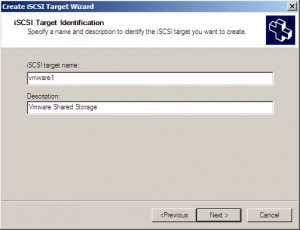

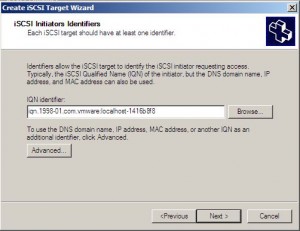

A wizard will appear and ask what you would like to call this target and for the IQN of one of your initiators. The IQN is a name that uniquely identifies the initiator you are connecting to the target. If you open the initiator software, a prefabricated IQN is usually already there; just copy it into the identifier field. You don’t have to keep that particular IQN, since almost all initiators let you change it; just make sure it is something meaningful to you.
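The wizard does not insist on a particular format, but initiators conventionally use the IQN layout from RFC 3720: `iqn.`, a year-month date, a reversed domain name, and an optional `:` suffix. Here is a minimal sketch in Python for sanity-checking an identifier before pasting it into the wizard. The regex is a simplification of the RFC rules, and the host names are hypothetical.

```python
import re

# Simplified pattern for the conventional IQN layout (RFC 3720):
#   iqn.<yyyy-mm>.<reversed domain>[:<optional unique suffix>]
IQN_PATTERN = re.compile(
    r"^iqn\.\d{4}-(0[1-9]|1[0-2])"     # "iqn." plus a year-month date
    r"\.[a-z0-9]+(?:[.-][a-z0-9]+)*"   # reversed domain, e.g. com.vmware
    r"(?::.+)?$"                       # optional ":" and a free-form suffix
)

def looks_like_iqn(name: str) -> bool:
    """Return True if name follows the conventional IQN layout."""
    return IQN_PATTERN.match(name.lower()) is not None

# Hypothetical identifiers, for illustration only.
print(looks_like_iqn("iqn.1998-01.com.vmware:esx01-12345678"))  # True
print(looks_like_iqn("esx01"))                                  # False
```

A name that fails this check may still be accepted by the target; the point is only to catch typos in identifiers that are supposed to follow the usual layout.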

After clicking Next and then Finish you have your iSCSI target. In most cases you will only need one. The only reason I can think of for needing two targets in a VMware environment is if certain hosts need certain LUNs and other hosts should not see them. This is because all LUNs in a target are either shared with a host or masked from it, depending on whether you allow its IQN.
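To make the all-or-nothing masking concrete, here is a toy model in Python. It is only a sketch of the behaviour described above, not how the Microsoft target is implemented, and every target, host, and file name in it is hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Target:
    """Toy model of an iSCSI target: allowed initiators plus its LUNs."""
    name: str
    allowed_iqns: set = field(default_factory=set)
    luns: list = field(default_factory=list)

    def visible_luns(self, initiator_iqn: str) -> list:
        # Masking is per target: an allowed initiator sees every LUN,
        # any other initiator sees none of them.
        return list(self.luns) if initiator_iqn in self.allowed_iqns else []

# Hypothetical names, for illustration only.
prod = Target(
    "prod",
    {"iqn.1998-01.com.vmware:esx01", "iqn.1998-01.com.vmware:esx02"},
    ["vmfs01.vhd", "vmfs02.vhd"],
)
dev = Target("dev", {"iqn.1998-01.com.vmware:esx03"}, ["dev01.vhd"])

for host in ("iqn.1998-01.com.vmware:esx01", "iqn.1998-01.com.vmware:esx03"):
    print(host, [t.name for t in (prod, dev) if t.visible_luns(host)])
```

In this model the only way to hide `dev01.vhd` from esx01 while showing it to esx03 is to put it in a separate target, which is the two-target case described above.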

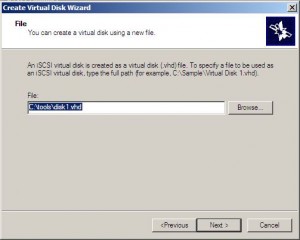

The next step is to create LUNs. Windows Storage Server creates a LUN by presenting a VHD file to the initiator. Right-click the iSCSI target we just created and select “Create Virtual Disk for iSCSI Target”. The first question is where you want the VHD to be located and what you want it to be called. You must type the full file name, including the extension .VHD.

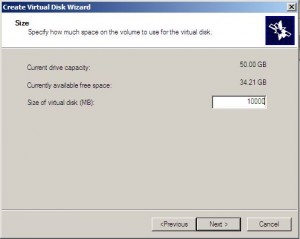

After clicking Next we need to decide how big the VHD should be. Remember that the size is entered in megabytes (MB).
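Since most people think of LUN sizes in gigabytes, a quick conversion helps avoid creating a disk a thousand times too small. This sketch assumes the wizard counts 1 GB as 1024 MB; that is my assumption, so check the resulting size after creation.

```python
def gb_to_mb(size_gb: float) -> int:
    """Convert gigabytes to megabytes, assuming 1 GB = 1024 MB."""
    return int(size_gb * 1024)

for size_gb in (100, 500, 2048):
    print(f"{size_gb} GB -> {gb_to_mb(size_gb)} MB")
```

So a 500 GB LUN would be entered as 512000 under that assumption.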

Click Next and Finish and we are done. Yes, it is really that easy. The only other thing I would recommend is turning off the Windows Firewall on the storage server. If you go through the settings and open every port the iSCSI target uses (TCP 3260 is the default iSCSI port) you could leave it on, but if your VMware hosts cannot see any targets or LUNs, try turning off the firewall and rescanning your HBA.
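Before disabling the firewall entirely, it can be worth checking from another machine whether the iSCSI port is reachable at all. A minimal sketch in Python, assuming the target listens on the default iSCSI port, TCP 3260; the function name and the address in the comment are placeholders of mine.

```python
import socket

ISCSI_PORT = 3260  # default iSCSI target port

def port_open(host: str, port: int = ISCSI_PORT, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False

# Example call (192.168.1.50 is a placeholder for your storage server):
#   port_open("192.168.1.50")
```

A `False` result with the firewall on and `True` with it off points at a missing firewall rule rather than a problem with the target or the IQN list.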

If you have more than one VMware ESX or ESXi server, you will need to add each one to the list of approved IQNs for this target; otherwise you won’t see the target from that server. To do this, right-click the iSCSI target name and go to Properties, then navigate to the iSCSI Initiators tab and click Add. Enter the other server’s IQN, and repeat for each server that needs access.

OR

You can also add another iSCSI target and add the same storage to each target. This allows you to monitor each target’s connectivity from the Windows storage management screen.

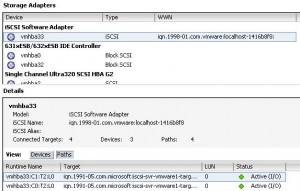

On the VMware ESX side, all we need to do is add the IP addresses of the Windows storage server to the iSCSI targets tab of the software initiator, then rescan the HBA. That’s it, we are done: iSCSI storage from a Windows storage server, presented to VMware so that we can leverage features like vMotion and HA.

Tweaking

If you want to add some redundancy and extra speed to a Windows storage server that is sharing iSCSI targets, you will probably want to add multipathing. The process is pretty simple.

- Add a second network card to each of your VMware servers and to your Windows storage server.

- Configure an IP address and subnet on the second network cards (this needs to be a new subnet that is not currently in use on your network; no gateway, DNS, or anything else is needed in this subnet).

- Add the new Windows storage server IP address to the list of iSCSI targets in the VMware ESX/ESXi initiator properties.

- Rescan the iSCSI HBA on your VMware hosts.
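The "new subnet not currently in use" requirement in the second step is easy to get wrong on a network with several ranges. A small sketch using Python's standard `ipaddress` module can check a candidate subnet against the ones you already use; the function name and the addresses are hypothetical.

```python
import ipaddress

def subnet_is_free(candidate: str, in_use: list) -> bool:
    """Return True if candidate does not overlap any subnet already in use."""
    new_net = ipaddress.ip_network(candidate)
    return not any(new_net.overlaps(ipaddress.ip_network(n)) for n in in_use)

# Hypothetical addressing, for illustration only.
existing = ["192.168.1.0/24", "10.0.0.0/16"]
print(subnet_is_free("10.10.10.0/24", existing))  # True
print(subnet_is_free("10.0.5.0/24", existing))    # False, inside 10.0.0.0/16
```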

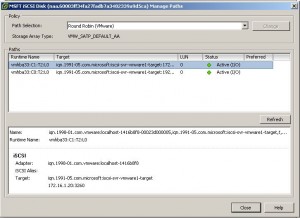

What you should see is that instead of having one path to each LUN, you now have two.

Once you have multiple paths to each LUN and have created a datastore from the new LUNs, you can right-click each LUN, change the path selection policy from the default to Round Robin, and click OK. You should now see “Active (I/O)” for each path.
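The idea behind Round Robin is simply to rotate I/O across all active paths so that both network cards carry traffic. The Python sketch below is a deliberate simplification: it rotates on every request, whereas the real policy switches paths after a batch of I/Os, and the path names are hypothetical.

```python
from collections import Counter
from itertools import cycle

def round_robin(paths, io_count):
    """Assign each I/O request to the next path in turn, wrapping around."""
    chooser = cycle(paths)
    return [next(chooser) for _ in range(io_count)]

# Two hypothetical paths to the same LUN, one per storage subnet.
paths = ["vmhba33:C0:T0:L0", "vmhba33:C1:T0:L0"]
print(Counter(round_robin(paths, 1000)))
```

With two paths the requests split evenly, which is why each path shows as “Active (I/O)” instead of one sitting idle as a standby.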

In order to bind multiple VMkernel NICs to the iSCSI software initiator you do need to run some commands from the ESX/ESXi command line. Please refer to the HP P4000 with VMware document for an explanation of these commands.

If you have any questions leave a comment and I will try to address whatever they are. Thanks for reading.

Good post.

Any particular reason to install all the WSS components and not just add the target software to an existing Windows Server?

Have you done any performance testing with native HW and compared the MS initiator vs. the VMware initiator? I’ve seen a few posts suggesting poor performance with VMware but nothing from credible sources.

I was under the impression that the target software could only be installed on storage server. However I do not remember where I read that.

As far as HW vs VMware vs MS initiators… VMware has never let me down. That being said, it does have limitations, like 2TB file systems. Just today, actually, I had to mount a LUN via the MS iSCSI initiator so that it could grow beyond the 2TB limit. I think the biggest advantage of the Windows initiator is that you can install your SAN’s VSS plugins in the guest, and if you do SAN snapshots you can properly back up data on the SAN LUN.

VMware has a very mature multipathing driver, and while I have not done tests to show which is better, my guess would be that performance is close enough in most situations that it will not matter. Obviously your mileage will vary, but I wouldn’t think there would be more than a 10% difference.

Sorry, I wasn’t clear. I’m trying to determine the MS iSCSI target ‘tax’. Some have pointed to the MS target SW and suggested it performs poorly (usually on VMware forums). In the past, I’ve done a little benchmarking of Starwind and HP’s VSA by comparing the performance of the two.

I was interested to see if anyone had done anything similar using the MS initiator. I noticed you referenced some iSCSI vs. CIFS performance testing. I thought something along the same lines would be good, possibly running a benchmark tool on the WSS server against local disk, then the same tool from an ESX guest against an iSCSI disk using the MS initiator and possibly the VMware initiator.

My past experience suggests the different targets are not so different in performance (HP VSA, Starwind, Datacore) until you get to a very fast storage system. Maybe a bit academic, but their implementation of RAM for cache could make a big difference, and yet they all seem to focus on features more than performance. It seems MS is in a great position to leverage the RAM, and I was hoping the WSS product might be enough of a reason. However, knowing they acquired the target SW, I’m not overly optimistic.

I think I can arrange a test like that. A co-worker uses WSS with the iSCSI target software to provide storage for his cluster. I will see if he can run a benchmark on the WSS and then again from a virtual machine to see what the difference in performance is.

Normally high-end SANs have a lot of cache too… for example, the MSA2000 G3 has 2GB per controller and it’s considered a low-end SAN. I don’t know the exact specs on the CLARiiONs we are selling, but I’m sure it’s a lot. Other vendors are also starting to take advantage of SSDs as cache drives with automatic storage tiering, which puts them off the charts on IOPS compared to anything that can be built with SAS or SATA drives.

I will let you know how the benchmark of WSS comes out.

My Co-Worker Nick tested this out today. Here is a link to his post http://jpaul.me/?p=893

WOW. Thks for the testing.

I’ll post a follow-up at the referenced link.

Note, it would be great if I got an email when someone posts a follow-up to this thread. I only noticed because I left my browser open to this page and refreshed.

I set this up today out in my home test lab with the intention of replicating my efforts at work on Monday.

Pointers that others may find helpful:

The TARGET is really the ESX HOST and it needs to be the FQDN if you use DNS vs IQN.

NOTE: DNS failure equals LUN failure.

Create the HOST first and then create the VHD by right-clicking the host object. Microsoft does a poor job of explaining this part, and it’s helpful to have this knowledge ahead of time.

If anyone knows how to mount to a raw disk versus a VHD please reshare the information. I suspect you’d get better performance off of a raw LUN that was not using a VHD, but I could be wrong.

Windows > NTFS > VHD – versus – RAW > VMFS…Hmmm

I recently inherited a 12 TB Windows 2008 Storage Server with zero space used, so I am going to kick the tires on Monday when I go back to work and use my new knowledge.

Lucky for me, I had the MSDN bits to teach myself at home, and you don’t need a full-blown storage server to make it work. Just extract the iSCSI bits included with the MSDN Windows 2008 R2 Storage Server ISO image.

Thanks for this wonderful article. I tried this and it worked wonderfully.

How would someone use this solution but build in the redundancy of a SAN? Could you build 2 of these and leverage VMWare and/or Windows features to replicate data across the 2 in near real-time?

I’m sure it could be possible… but you would be better off using the VMware Virtual SAN and local storage inside the ESXi hosts for something like that, or the HP P4000 VSA software. Both are good solutions for exactly that.

Justin, great article. I was using Windows Storage Server 2008 with ESXi 4.1. However, after upgrading to ESXi 5.0 I am having issues. Like the post here:

http://communities.vmware.com/message/1822142

Have you tested with ESXi 5.0?

No, I haven’t tested it yet, but Nick runs Windows Storage Server for his vSphere cluster, so I’ll make sure to mention it to him for when he tries to upgrade. We will let you know our mileage.

Did you ever make the WSS iSCSI target work with ESXi 5.0?

Haven’t tried… is there a known issue? I plan to upgrade a co-worker’s cluster Thursday evening and he is using WSS as his SAN.

I have tried just about everything I can think of, and I also had two other consultants give it a try. Everything goes fine until you try to install a guest OS. Just for kicks I even tried installing a Linux guest. I have given up for now and am currently using iSCSI target software from KernSafe. This is the first time I have tried iStorage from KernSafe… sorry, I don’t mean to name-drop; I have no interest in them. On a bright note, I saw a thread somewhere from someone who had the same VMware 5.x and Microsoft iSCSI problem; they tried the MS Server 8 Developer edition with its iSCSI target software and it worked great, but that does not help me in production for now.

We use Storage Server 2003 and 2008 with ESX5. VMware officially doesn’t support Storage Server 2003 on ESX5, but it works. I haven’t had any performance issues, so I have never dug around in the logs, though. We may be seeing tons of errors and I simply don’t know about it. From what I can see we are up and running fine.

I do want to mention that we do the following :

– Add an additional VMkernel for each network card on the VMware host.

– Assign only one Network card to each VMKernel.

– Make sure the new VMKernels are active for the ISCSI HBA.

– Set the multipath policy on each ISCSI datastore to Round Robin.

We are using the iSCSI target on a Windows Standard edition box, so it’s not just for Storage Server. Personally, I recommend staying away from Storage Server.

We have a problem where only one ESXi server can connect to a LUN at a time, do you know anything about this?

Actually, never mind. It looks like I had set up my LUNs correctly; the problem was with ESXi not connecting correctly through the GUI. I had to do this to mount the LUNs:

http://www.madeinengland.co.nz/call-hoststoragesystem-resolvemultipleunresolvedvmfsvolumes-for-object-on-vcenter-server-failed

We are considering using Windows Storage Server. However, I have read somewhere on the internet that VMware cannot access the VHD file that Windows presents… is this true? Please clarify.

vSphere 5 does not like the iSCSI targets that Windows storage server presents.

We use ESX5 with Windows Storage Server ISCSI targets with no problems. I guess “mileage may vary” applies here.

Wow, and it’s working? I was getting all sorts of iSCSI timeouts when we upgraded a friend’s setup to 5.0… but it was an early release of 5.0; maybe they have since fixed it.

Does it work with VI3? We haven’t upgraded yet.

VI3?????!!!!!! Holy cow, man… what can I do to help you upgrade? LOL

But yeah, I think it will work, though I haven’t played with VI3 in years.

So what’s new with VMware 5.5 and using storage server 2008, 2008 R2, 2012 and 2012 R2?

What about using server with the iSCSI Target role installed?

I’m basically looking at some old gear and considering using it as shared storage for a DEV ESXi cluster running DEV VMs.

I’m not finding a lot about using Windows to provide MPIO shared storage for ESXi via iSCSI.

I want to know if it’s worth the trouble to get it to work and if performance is any good.

Honestly I haven’t messed with it in a long time. I will try and spin some stuff up tomorrow and see what I can find out for you.

Hi Justin,

Thanks for the tutorial, very helpful.

I’m just having a play with this at the moment and downloaded the iSCSI target from MS, but it said installation wasn’t supported on Storage Server 2008 R2 Essentials, so I had to edit the MSI using Orca to get it to install (for anyone else having this issue, download Orca and open the MSI, then look for ‘IsSupported’ and change the value to 0).

I’ve never used iSCSI before and have only limited experience with ESXi. I haven’t got the hardware yet for my ESXi machines so trying to do as much as I can before it arrives. Would I need to create a separate target for each VM or is a large disk presented to ESXi which can then be divided up as necessary for the VMs? Can more than one ESXi host use the same target?

Also, when setting the size for the target in the Windows end, is the space allocated during creation or dynamically as it’s used? And is it possible to resize the target later if more space is required?

Sorry for all the questions but any advice will be gladly received 🙂

Kind regards

Rob