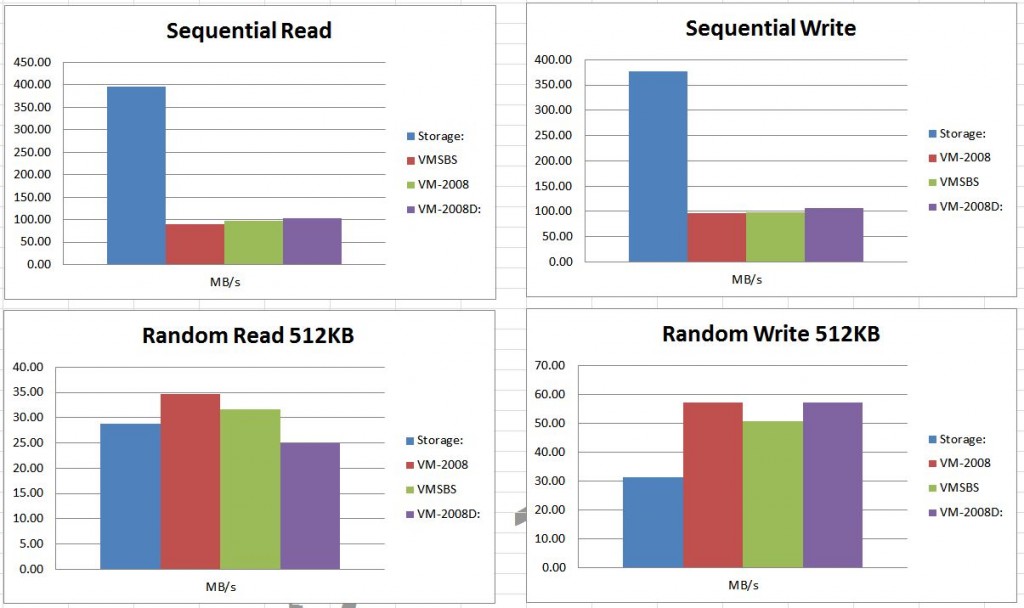

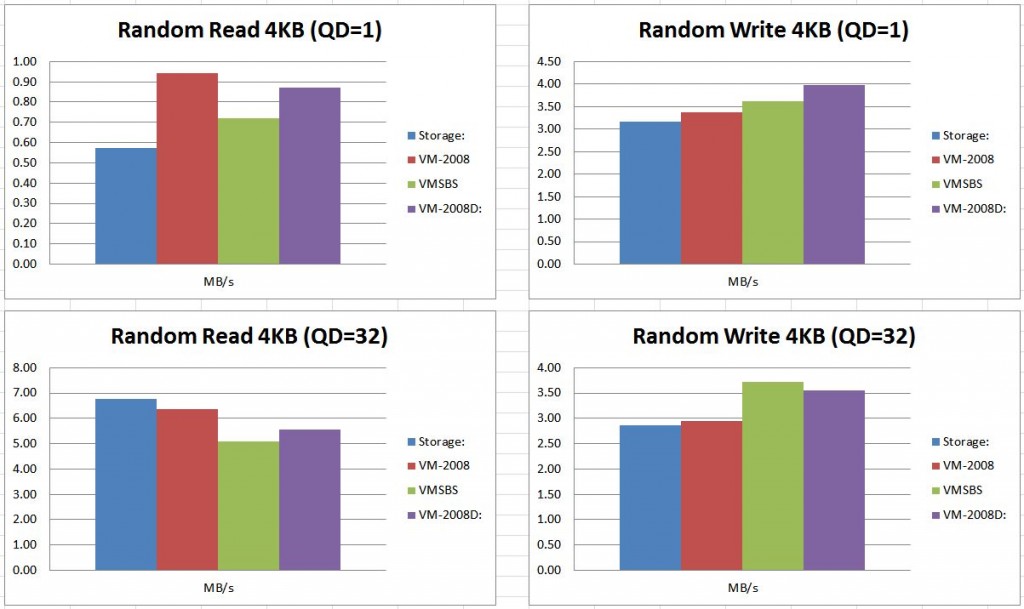

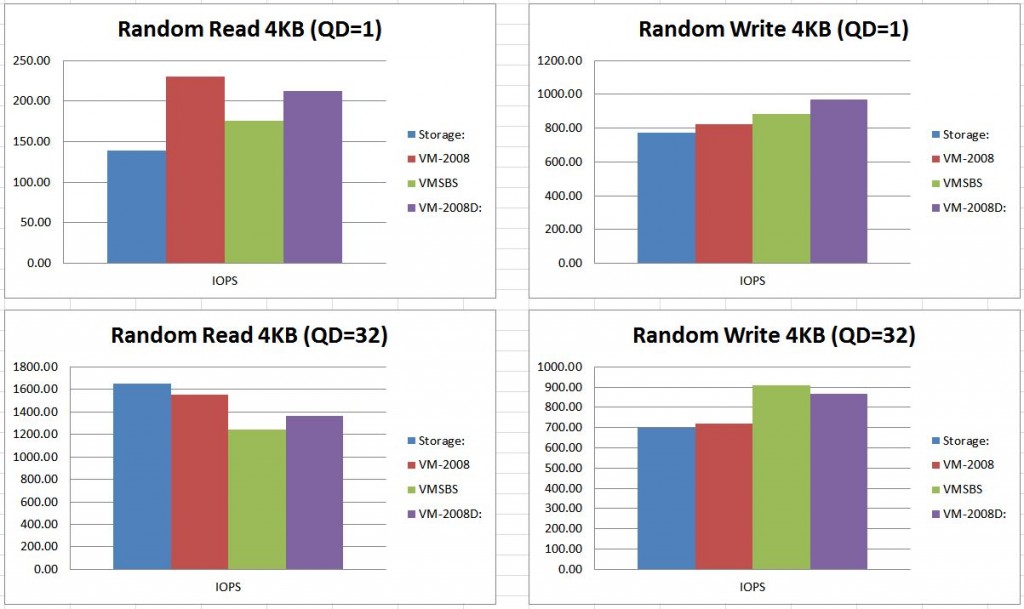

This blog post measures the performance difference across different iSCSI paths to the same Microsoft datastore.

Storage: Run directly on the Microsoft Storage Server 2008 machine. This is not an iSCSI connection; the test runs on the server that provides all of the iSCSI connections. Windows Server 2008 Storage Server Enterprise Edition SP1 [6.0 Build 6001] (x64)

VMSBS: 1Gb iSCSI connection through VMware in an SBS 2008 production server (a very small environment). Windows Server 2008 Small Business Server SP1 [6.0 Build 6001] (x64)

VM-2008: 1Gb iSCSI connection through VMware in a fresh install. Windows Server 2008 Enterprise Edition (Full installation) SP1 [6.0 Build 6001] (x64)

VM-2008D: 1Gb iSCSI connection directly from the system OS on a fresh install. Windows Server 2008 Enterprise Edition (Full installation) SP1 [6.0 Build 6001] (x64)


I know, that's a lot of charts. I am really shocked at the inconsistency! None of the configurations emerged as a true performance champion.

All of these benchmarks were measured using CrystalDiskMark with the default settings.


Thanks for the testing. My takeaways…

Multipathing apparently wasn’t functioning, because you appeared to be hitting a throughput limit of about 100 MB/s, which correlates with a single 1Gb link. Have you experimented with multipathing and WSS? I’m interested in trying to achieve higher sequential throughput from VMware hosts to a WSS server, but my initial results are similar to yours. LFN is less of an issue because they distribute traffic to multiple hosts, leveraging multiple 1Gb links at the storage end.
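As a rough sanity check on that ceiling, the arithmetic for a single 1Gb link can be sketched in Python. This is only an estimate under stated assumptions: standard 1500-byte MTU Ethernet, IPv4 and TCP headers without options, decimal megabytes, and no allowance for iSCSI header overhead or protocol round trips. The function name is just illustrative.

```python
def tcp_payload_rate_mb_s(link_bits_per_s=1_000_000_000, mtu=1500):
    """Estimate usable TCP payload throughput in MB/s (10**6 bytes) for one link."""
    # Per-frame Ethernet overhead on the wire:
    # preamble + SFD (8), header (14), FCS (4), inter-frame gap (12).
    ethernet_overhead = 8 + 14 + 4 + 12
    # Assumption: IPv4 (20 bytes) and TCP (20 bytes) headers with no options.
    ip_tcp_headers = 20 + 20
    payload_bytes = mtu - ip_tcp_headers
    wire_bytes = mtu + ethernet_overhead
    efficiency = payload_bytes / wire_bytes
    return link_bits_per_s / 8 * efficiency / 1_000_000


raw_mb_s = 1_000_000_000 / 8 / 1_000_000   # raw line rate of a 1Gb link
usable_mb_s = tcp_payload_rate_mb_s()      # estimate after framing overhead

print(f"raw line rate:   {raw_mb_s:.1f} MB/s")
print(f"usable estimate: {usable_mb_s:.1f} MB/s")
```

A sequential result that plateaus somewhere around 100 MB/s is therefore consistent with traffic flowing over one 1Gb path; working multipathing across two links would be expected to push sequential numbers noticeably past that single-link estimate.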

Non-sequential and IOPS results varied, sometimes drastically, with the iSCSI initiator often outperforming the ‘native’ WSS host, which seems unlikely but is possibly explained by the test tool or configuration. My brief investigation of the CrystalDiskMark tool suggests it reports only maximum results, which are less consistent and less valuable for performance evaluation. An average result would be better. Have you considered Iometer? You may want to consider using it and configuring it in a way commonly used for testing and comparing VMware servers (http://communities.vmware.com/thread/197844).
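To illustrate why a max-only figure can mislead, here is a minimal Python sketch that summarizes several passes of the same test. The run values are hypothetical numbers made up for illustration, not measurements from this post, and the `summarize` helper is my own naming.

```python
from statistics import mean, stdev


def summarize(runs_mb_s):
    """Return max, mean, and sample standard deviation for repeated runs."""
    return {
        "max": max(runs_mb_s),
        "mean": mean(runs_mb_s),
        "stdev": stdev(runs_mb_s),
    }


# Hypothetical throughput of five passes of one test, in MB/s.
runs = [62.0, 95.0, 58.0, 61.0, 60.0]
summary = summarize(runs)

print(f"max:   {summary['max']:.1f} MB/s")
print(f"mean:  {summary['mean']:.1f} MB/s")
print(f"stdev: {summary['stdev']:.1f} MB/s")
```

With one outlier pass, the maximum sits far above the typical run, while the mean and spread give a better picture of what the path sustains. Reporting all three makes results from different hosts easier to compare.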