One thing that hasn’t changed much in IT is the lack of bandwidth. No matter how fast your connection is, there always seems to be wait time because of the sheer amount of data you are trying to transfer. The same is true with Veeam Replication: while Veeam does a great job of compressing and deduplicating blocks, replication times are always high when going across a WAN connection.

A customer that I work with had this problem: too much data for their current WAN pipe (30Mbps). So they were faced with a question… continue to add more bandwidth as replication times grew, or look into an alternative solution and avoid high WAN bandwidth costs. We chose the second option and decided to go with a WAN acceleration product. There are hundreds of WAN acceleration products out there, but after doing some research, the product that Veeam recommends, and even bundles in some cases, is HyperIP by NetEx.

HyperIP is meant for someone who already has virtualization in place: the product (no matter how big or small) is distributed as a virtual appliance. This means that no matter where you start, there will never be a forklift upgrade! Besides being a virtual appliance, HyperIP does some other things differently too; for one, it does not cache blocks. For Veeam this is great, because after all, how many times would you find a duplicate block after Veeam has already deduplicated the data?

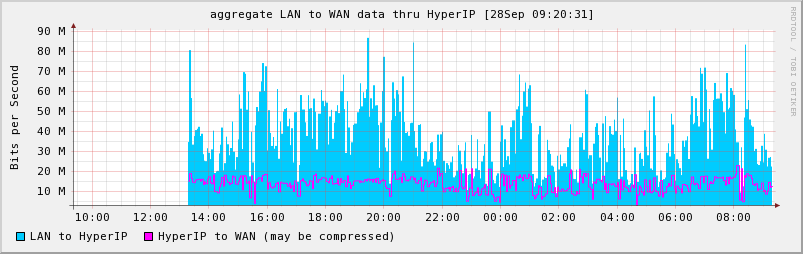

So let's get on to some pretty pictures and what HyperIP can actually do. Before we put HyperIP in place, we were flooding the 30Mbps pipe almost 24 hours a day, and replication jobs were sometimes taking more than 24 hours. After putting HyperIP in, our traffic across that link dropped to about 10-15Mbps and our replication times went down, but only by a small percentage.

The graph below shows the amount of data that Veeam was sending to our HyperIP appliance; the purple line is the amount of data that HyperIP sent across the WAN pipe to its counterpart at the remote site. At the remote site, the receiving HyperIP appliance decompresses the stream and sends all the traffic (the blue graph) to the ESXi host.

As you can see, on average we are still only pushing about 30Mbps across to the remote ESXi host (because it's receiving the blue amount), but we could technically do this with only 10-15Mbps of WAN bandwidth from our provider. This in itself is awesome, but for this customer the amount of data to replicate dictates that we need to be able to transfer about twice as much data as we are now (without HyperIP it would be about 50-60Mbps).
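To put rough numbers on that, here is a minimal back-of-the-envelope sketch in Python. The function names and the 324 GB job size are my own illustrative assumptions, not anything taken from HyperIP or Veeam; the only figures from this environment are the 30Mbps delivered rate and the 10-15Mbps seen on the WAN.

```python
def compression_ratio(delivered_mbps, wan_mbps):
    """Ratio of data delivered to the remote host vs. data sent over the WAN."""
    return delivered_mbps / wan_mbps


def transfer_hours(data_gb, delivered_mbps):
    """Hours needed to move data_gb (decimal gigabytes) at delivered_mbps."""
    megabits = data_gb * 8000  # 1 GB = 8000 megabits (decimal units)
    return megabits / delivered_mbps / 3600


if __name__ == "__main__":
    # Observed: about 30Mbps delivered while the WAN carried 10-15Mbps.
    for wan in (10, 15):
        ratio = compression_ratio(30, wan)
        print(f"WAN {wan}Mbps -> effective ratio {ratio:.1f}x")

    # Hypothetical job size, chosen only to illustrate the 24-hour window.
    job_gb = 324
    for rate in (30, 60):
        hours = transfer_hours(job_gb, rate)
        print(f"{job_gb} GB at {rate}Mbps delivered: {hours:.1f} hours")
```

In other words, a steady 30Mbps of delivered throughput moves roughly 324 GB in 24 hours, so a replication set that runs past the 24-hour mark only fits the window if the delivered rate goes up, regardless of how little of the WAN pipe is actually in use.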

So where is the bottleneck if it's not the WAN pipe anymore? Look no further than ESXi. If you search around on the Veeam forums, you will find that they recommend replicating to ESX servers instead of ESXi servers. Basically, when you replicate to an ESX host, Veeam utilizes the Service Console and SSH to make the transfer faster. With ESXi you do not have the Service Console, and it cannot handle data as fast as ESX.

Anyhow, we did install an ESX host at the remote location and were able to once again flood the WAN pipe. As for the amount of data from Veeam to HyperIP, I cannot say at this time, as we have not finished the testing. What I will say is that if you are looking for a boost for your remote replication speeds without upping your bandwidth, HyperIP might just be your answer. Stay tuned, as I’m sure I will have more posts to follow on HyperIP.

Justin, thanks for the writeup about HyperIP and Veeam. They certainly work well together.

HyperIP Marketing

This is very interesting. With ESX being retired, do you know what plans Veeam has for the future? (Will it be resolved/mitigated in v6?)

Yes, I do know what they have in store for us; however, some of what I know is still under NDA. Although I think they have had webinars on the new stuff… basically, for optimal speeds you can utilize a VMware Proxy server (just a Windows box with a service installed on it). I have tested this, and I can tell you that I can pretty much flood a 100Mbps WAN circuit in a real-world customer environment.