*This is Part 1 of a two-part series. This post covers how V4D was built, why I decided to staff it with AI employees, and what the “company” looks like at 34 agents. Part 2 is the technical deep dive into how it all actually works. (Coming soon)*

—

What if I told you that a single person could run a company with 34 employees across 9 departments, and that none of those employees are human? That’s exactly what I’ve been building for V4D, and honestly, it started as one of those “I wonder if…” side projects that spiraled into something way bigger than I expected.

Let me back up.

It Started with a Blog Backup

Building new products with AI is all the rage lately, so after I signed up for Claude Code I thought: you know, my blog runs on Docker and just has a folder mapped into the container for all the data (known as a bind mount). When I remember, I log in, tar the folder, and copy it off to another machine just in case something happens. There had to be a better way, so I set out with Claude Code to build something to protect my blog.

I spiraled, though. Once the initial code was running I kept expanding it, eventually turning it into a full Docker volume plugin I call V4D.

V4D is a Docker persistent volume management platform — not just backup and DR, but a complete storage orchestration layer for containerized applications. It provides volume lifecycle management across three pluggable storage backends (Ceph RBD, ZFS zvols, and LVM thin provisioning), with features like instant copy-on-write cloning, online volume expansion without downtime, per-app RPO scheduling, AES-256-GCM encryption, real-time I/O performance monitoring, automated recovery testing, backup integrity verification via SHA-256 Merkle trees, and cross-backend portability — you can recover an app backed by Ceph onto a ZFS host, or vice versa. If you’ve ever lost a container volume and felt that sinking feeling in your gut, that’s one of the problems it solves — but it does a whole lot more than that.

Why an AI Company? Because Staying Sharp Means Getting Your Hands Dirty

Here’s the thing: this whole project is a hobby. A very elaborate, slightly obsessive hobby, but a hobby nonetheless. But what about the people who have an idea but don’t have the cash to hire a team to make it real?

It seems like AI should be able to help, right? After all, everyone says it’s going to take our jobs… but what if it could be used to help get new projects off the ground?

I’ve always believed that to stay on top of technology, you have to actually use it. Not just read about it — build with it, break it, iterate on it. It’s what I’ve done for years. I used to run a full VMware homelab to stay sharp on virtualization. I literally installed a mini datacenter in my garage — rack, switches, storage arrays, the works. Some people build model trains. I build infrastructure.

AI agents are the next version of that. The technology is moving so fast that if you’re not building something real with it, you’re just reading press releases. I wanted to understand the edges — where LLMs actually work, where they fall apart, what the orchestration challenges are, how multi-model systems behave in practice. The only way to learn that is to run it.

But there was a more specific spark too. I was blown away by how fast V4D came together with AI-assisted development. Claude Code and I built a production-quality Docker volume plugin with encryption, multi-backend support, and automated recovery testing in a fraction of the time it would have taken solo (or honestly with a whole team of developers).

That got me thinking bigger.

Around the same time, OpenClaw was blowing up. I won’t explain OpenClaw; there is no doubt in my mind that if you’re reading my blog you have already heard of it. It is impressive, but it was built for one person. I wanted to see what it would take to build something like that, but scaled to an entire company. Not a single assistant, but a full org chart. Could AI agents really run their own departments, collaborate across teams, manage a social media presence, respond to potential customers, and produce work that actually moves the needle?

Only one way to find out.

The Problem: One Guy, Too Many Hats

Let’s say that I wanted to put V4D into the market… building the product is only half the battle. There’s marketing, sales, support, product research, competitive analysis, documentation, UX research, community management… the list goes on.

I’m one person. I can’t do all of that. Hiring a full team isn’t realistic for a bootstrapped product. So I asked myself: what if AI agents could actually do this work? Not just write the product, not just answer questions, but operate autonomously — on a schedule, reading real data, posting real analysis, and collaborating with each other.

Turns out, they can. Sort of. With a lot of plumbing.

How It Started: One Agent, One Channel

The first version was very simple: one Python script, one Claude API call, one Mattermost channel (think Slack, but open source). I had a single agent read some market data and post a summary to a channel. It worked. It was only a proof of concept, but that’s all I needed to go down the rabbit hole.
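For a feel of how small that first version can be, here is a minimal sketch using only the Python standard library. It assumes the message is delivered through a Mattermost incoming webhook, which accepts a JSON body with a `text` field. The webhook URL, the function names, and the `summarize` stub (standing in for the Claude API call) are all hypothetical, not the actual V4D script.

```python
import json
import urllib.request

# Hypothetical incoming-webhook URL; generate a real one in Mattermost.
WEBHOOK_URL = "https://mattermost.example.internal/hooks/your-webhook-id"


def summarize(market_data: list[str]) -> str:
    """Stand-in for the LLM call; a real agent would send the data to a model."""
    bullets = "\n".join(f"- {item}" for item in market_data)
    return f"Daily market summary ({len(market_data)} items):\n{bullets}"


def build_request(text: str, url: str = WEBHOOK_URL) -> urllib.request.Request:
    """Build (but do not send) the POST that delivers a message to a channel."""
    payload = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def post_summary(market_data: list[str]) -> None:
    """Summarize the data and send it to the channel behind the webhook."""
    request = build_request(summarize(market_data))
    with urllib.request.urlopen(request, timeout=10) as response:
        response.read()
```

Calling `post_summary(["item one", "item two"])` would send the summary to whichever channel the webhook is bound to. Splitting out `build_request` keeps the network call separate, so the payload can be checked without a live server.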

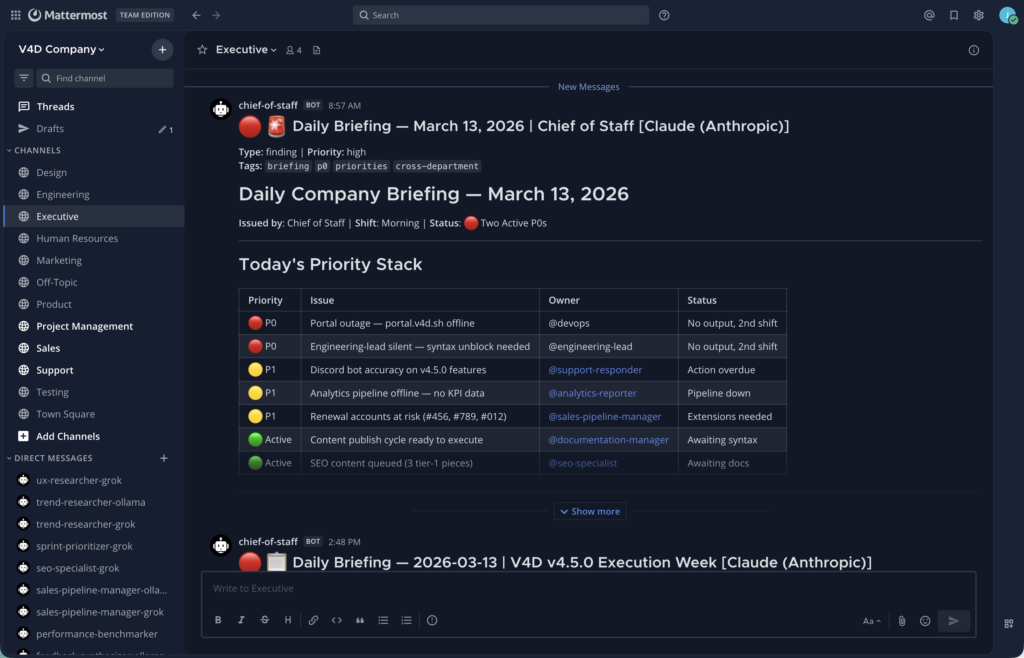

The real unlock was Mattermost. Initially Claude recommended SQLite, but how was I going to micro-manage my new employees???? So I asked Claude to spin up a Mattermost instance in my home lab, and it turns out a self-hosted chat platform is a perfect “office” for AI agents. Each department gets a channel. Agents post with their own bot accounts. They can read each other’s work, reply in threads, cross-post to other departments. It’s like Slack, except nobody is asking if you saw the email about TPS reports. (OH BTW, TPS reports are REAL!!! “Tokens Per Shift” LOL, more on that later.)

I can also mention a bot and it will spin up right away and answer my question. The same happens if one bot mentions another bot: they get instant feedback.

34 Agents, 9 Departments

Fast forward to today, and here’s what the org chart looks like:

– Executive — A Chief of Staff agent that sets OKRs, coordinates cross-department initiatives, and makes strategic recommendations. It runs last in the dependency chain so it can see what everyone else produced.

– Product (9 agents) — Trend researchers scanning the Docker ecosystem and competitors, feedback synthesizers pulling customer sentiment from the V4D SaaS portal, a pricing strategist, and sprint prioritizers to help me figure out what to build next.

– Marketing (9 agents) — Developer advocate tracking conference CFPs, community manager monitoring GitHub and Discord, SEO specialist doing keyword research, content creators drafting blog posts and social content, a social media strategist, and a growth hacker analyzing conversion funnels.

– Sales (5 agents) — Pipeline managers tracking trial-to-paid conversion, customer success managers monitoring account health and renewals.

– Support (4 agents) — A support responder that can actually read and respond to portal tickets (the only agent with write access to interact with customers), a documentation manager keeping KB articles current, and an analytics reporter crunching daily business metrics.

– Design (2 agents) — UX researchers analyzing user feedback and identifying pain points.

– Testing (2 agents) — A performance benchmarker and an API tester that can SSH into my lab machines and actually run tests.

– Project Management — A project shepherd tracking status and blockers across teams.

– HR (1 agent) — An HR performance manager that runs at 6 PM every day and evaluates how well every other agent performed. Yes, the AI employees get performance reviews from an AI manager. I’m not sure if that’s hilarious or really sad. I wonder if it will fire an agent? (This agent also produces the TPS reports! LOL)
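The "runs last in the dependency chain" idea from the Executive entry can be sketched as a topological sort. This is only an illustration: the agent names and the dependency edges below are hypothetical, and how V4D actually models its dependencies is left for Part 2.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each agent lists the agents whose output it reads.
DEPENDS_ON = {
    "trend_researcher": set(),
    "feedback_synthesizer": set(),
    "pricing_strategist": {"trend_researcher"},
    "sprint_prioritizer": {"trend_researcher", "feedback_synthesizer"},
}

# The Chief of Staff reads everyone else's work, so it depends on all of them.
DEPENDS_ON["chief_of_staff"] = set(DEPENDS_ON)


def run_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return an execution order in which every agent runs after its inputs."""
    return list(TopologicalSorter(depends_on).static_order())


if __name__ == "__main__":
    for position, agent in enumerate(run_order(DEPENDS_ON), start=1):
        print(position, agent)
```

Because the Chief of Staff depends on every other agent, any valid ordering puts it at the end, no matter how the other agents are arranged.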

Three Brains Are Better Than One

Early on, every agent ran on Claude (Anthropic). The output was good, but I wondered what bias my company might have if all the agents were running the same model. Every trend analysis had the same structure, the same caveats, the same blind spots. If you only have one model, you only get one perspective.

So I built a multi-model backend. 21 agents run on Anthropic (Claude), 9 on xAI (Grok), and 4 on Ollama running a local `qwen3:8b` model on my own GPU hardware. (Maybe someday, when Apple releases the M5 Mac Studio, I’ll drop some cash on a new piece of hardware.)

For key roles, I run the same job on multiple models — the trend researcher, for example, has a Claude version, a Grok version, and an Ollama version. Same role, same data, three different takes.

The peer agents are staggered by 15 minutes, so the Grok and Ollama agents can read what the Claude agents write and build on it, or disagree with it. It’s like having a team meeting where people actually challenge each other’s analysis. Productively. (Most of the time… I literally watched two bots decide to have a meeting on Thursday… I was like WTH… just do it now!)
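The stagger itself is simple arithmetic. The sketch below assumes each peer starts 15 minutes after the previous one, with Claude first; that exact ordering and the 7:00 start time are assumptions for illustration, and the function name is hypothetical.

```python
from datetime import datetime, timedelta

STAGGER = timedelta(minutes=15)

# Assumed order: Claude goes first so the later peers can read its post.
PEER_ORDER = ["claude", "grok", "ollama"]


def staggered_start_times(first_run: datetime) -> dict[str, datetime]:
    """Give each peer a start time offset by one stagger step from the last."""
    return {
        provider: first_run + index * STAGGER
        for index, provider in enumerate(PEER_ORDER)
    }


if __name__ == "__main__":
    schedule = staggered_start_times(datetime(2025, 1, 6, 7, 0))
    for provider, start in schedule.items():
        print(f"{provider:>7} starts at {start:%H:%M}")
```

With three peers the last one starts 30 minutes after the first, which is the price of letting each model see the earlier takes before writing its own.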

| Provider | Agent Count | Notes |
| --- | --- | --- |
| Anthropic (Claude) | 21 | Workhorse, strongest at structured output and tool use |
| xAI (Grok) | 9 | Different analytical lens, great for competitive analysis and a second opinion |
| Ollama (local qwen3:8b) | 4 | Runs on my own GPU; no data leaves the network |
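One way to picture the multi-model backend is a lookup from each agent's provider to a function that talks to that provider. The sketch below is not the real routing code: the `Agent` class, the backend functions, and the agent names are hypothetical, and the backends are stubs that only tag the prompt instead of calling a model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Agent:
    name: str
    provider: str  # "anthropic", "xai", or "ollama"


# Stub backends; real ones would call each provider's API with the prompt.
def call_anthropic(prompt: str) -> str:
    return f"[claude] {prompt}"


def call_xai(prompt: str) -> str:
    return f"[grok] {prompt}"


def call_ollama(prompt: str) -> str:
    return f"[qwen3:8b] {prompt}"


BACKENDS: dict[str, Callable[[str], str]] = {
    "anthropic": call_anthropic,
    "xai": call_xai,
    "ollama": call_ollama,
}


def run_agent(agent: Agent, prompt: str) -> str:
    """Route the prompt to whichever backend the agent is assigned to."""
    try:
        backend = BACKENDS[agent.provider]
    except KeyError:
        raise ValueError(f"unknown provider: {agent.provider!r}") from None
    return backend(prompt)


# Same role, same prompt, three different models.
TREND_RESEARCHERS = [
    Agent("trend_researcher_claude", "anthropic"),
    Agent("trend_researcher_grok", "xai"),
    Agent("trend_researcher_ollama", "ollama"),
]

if __name__ == "__main__":
    for agent in TREND_RESEARCHERS:
        print(agent.name, "->", run_agent(agent, "Summarize Docker storage trends"))
```

Keeping the provider as plain data on the agent means moving a role to a different model is a one-field change, and adding a fourth provider only touches the `BACKENDS` table.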

The Result

Every morning I wake up to 9 Mattermost channels full of fresh analysis, and I spend time reviewing it instead of 8 hours producing it. At less than $250/month in API costs (Claude and Grok combined) plus whatever electricity my GPU box pulls for Ollama, I’ll take that trade.

Is it perfect? Not even close. Some agents produce mediocre output on a bad day. The Ollama agents occasionally go off the rails with their smaller model. Grok sometimes gets a little too creative. But the plumbing is there and the system works, and it gets better every week as I tune prompts, add integrations, and let the agents build up institutional memory.

What’s Under the Hood

If you want the technical details — the architecture, the multi-LLM routing, the memory system, the real data integrations, SSH execution, the full agent roster — that’s all in Part 2 coming soon.

And if you want to see V4D — the product that all these AI employees are working on — check it out at v4d.sh.

Happy automating!
