One of the things you have to do when building out your VMware cluster is size the amount of memory (RAM) you need. From what I have seen, most people assume that adding up the total amount of RAM in their physical servers gives them the total amount of memory required for their cluster; those people are wrong.

What they have forgotten about is the overhead that VMware adds to that requirement. Not only does the hypervisor itself require memory to run, but each powered-on virtual machine also requires memory for management overhead.

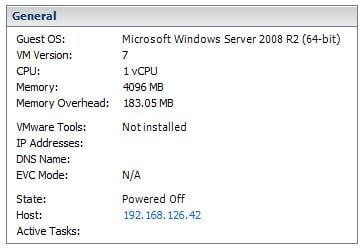

If you already have an ESX host up and running, you can check the amount of overhead different virtual machines require by creating a new virtual machine and assigning it the specs in question. Then, on the Summary tab, look for “Memory Overhead”. In this example, a virtual machine with 1 vCPU and 4GB of RAM would consume 183.05MB of RAM for overhead purposes. If I increase the number of vCPUs to 2, it jumps to 245.61MB. The same is true with RAM: if I put the vCPU count back to 1 and move RAM to 8GB instead of 4GB, the overhead goes up to 247.39MB.

As you can see, you are talking about 200-250MB of RAM overhead per virtual machine. If your cluster has 20-30 virtual machines of various sizes, you could be talking about as much as 7.5GB of RAM!
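The range above is simple multiplication, and a small sketch makes it easy to try other VM counts. The function name is hypothetical, and it assumes 1GB = 1000MB, which is the rounding the 7.5GB figure implies.

```python
def total_overhead_gb(vm_count, overhead_mb_per_vm):
    """Total per-VM memory overhead in GB (assumes 1 GB = 1000 MB)."""
    return vm_count * overhead_mb_per_vm / 1000


# Low end: 20 VMs at 200MB each. High end: 30 VMs at 250MB each.
low = total_overhead_gb(20, 200)
high = total_overhead_gb(30, 250)
print(f"{low:.1f} GB to {high:.1f} GB")
```

The per-VM figure should come from the “Memory Overhead” value of a VM with matching specs, since it grows with both vCPU count and RAM.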

Another thing to take into consideration is the virtual machines that you will add in order to run vSphere. Right now vCenter Server runs on a 64-bit virtual machine, and the stated minimum RAM is 4GB. However, if your vCenter Server is doing other things, such as running a local instance of SQL Express, VUM, vCenter Converter, or other tasks, you could easily need 8GB of RAM just for vCenter.

RAM is almost always the limiting factor in a VMware cluster design, mostly because it is a static assignment once the virtual machine boots up… it's not like CPU power, where a VM will use it for a second and then let it go… once you assign RAM to a virtual machine, it keeps it (except when ballooning). The other thing that hurts RAM usage on virtualization projects is scope creep.

Because you can spin up a virtual machine so quickly and easily, it seems logical to start separating server functions out into their own isolated environments. The problem is that when we start to run more copies of Windows, we require more RAM. So you really have to be smart and think ahead when calculating the amount of memory you’re going to get.

If you want to be on the safe side, your best bet would be to calculate the amount of memory you have in your physical machines, then take that number and triple or even quadruple it! For example, say your current physical server infrastructure has 50 servers, each with 4GB of RAM, and each will require 1 vCPU. We already know that we can fit this workload on 3 physical servers (this was determined using our pretend Capacity Planner results). So we will need 200GB of RAM to equal what we have in the physical boxes now. Next we need to calculate overhead for those VMs… it comes to about 9.2GB. Then we add in the memory we need to run vCenter in a virtual machine (8GB plus 342MB), and also figure 1GB for ESX or ESXi per server.

So for total RAM we are thinking about 218GB to run everything… so now we can divide by 3 (because we have 3 servers), which comes out to about 73GB per physical machine. However, if we lose a box we have to compensate for that, so add in another 73GB of RAM spread across 3 hosts (so about 24GB per physical).

So we started out saying that we needed 200GB of RAM to match our physical servers… after adding the overhead we were at 218GB, and after adding room for a lost host we are now at about 292GB… so figure 100GB of RAM per server.
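The arithmetic in this example can be sketched in a few lines of Python. This is only an illustration of the walkthrough, not a VMware tool: the function and variable names are hypothetical, 1GB is assumed to be 1000MB, values are rounded up to stay on the safe side, and the 1GB per host for ESX or ESXi is left out of the sum and would be added on top of each host's figure.

```python
import math

MB_PER_GB = 1000  # assumption: decimal units, matching the article's rounding


def workload_memory_gb(vm_count, vm_ram_gb, overhead_mb_per_vm,
                       vcenter_ram_gb, vcenter_extra_mb):
    """Sum VM RAM, per-VM overhead, and the vCenter VM, in GB."""
    vm_ram = vm_count * vm_ram_gb
    vm_overhead = vm_count * overhead_mb_per_vm / MB_PER_GB
    vcenter = vcenter_ram_gb + vcenter_extra_mb / MB_PER_GB
    return vm_ram + vm_overhead + vcenter


hosts = 3
# 50 VMs at 4GB each, 183.05MB overhead per VM, vCenter at 8GB plus 342MB.
total_gb = math.ceil(workload_memory_gb(50, 4, 183.05, 8, 342))
per_host_gb = math.ceil(total_gb / hosts)
# One host's share, spread back across all hosts as spare capacity.
spare_per_host_gb = per_host_gb / hosts

print(total_gb, per_host_gb, round(spare_per_host_gb, 1))
```

With these inputs the sketch gives 218GB in total, 73GB per host, and roughly 24GB of spare per host, which is why rounding up to 100GB per server is a comfortable target.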

Bottom line… the fastest swapping rate is the one that isn’t needed… So always buy more than enough RAM… you will use it sooner or later.

Hi, you explain how to size memory for servers/clusters, but you don’t consider TPS and memory compression. These methods should reduce the necessary memory, shouldn’t they?

Matteo

Yes, those features will reduce memory usage; however, I’ve never seen TPS save more than a couple of gigabytes. As for compression, I don’t think it kicks in until there is contention… At least that’s what I think I read in the vSphere HA / DRS deep dive book. I will check into it and let you know. But either way, since it’s always better to plan for a cluster with excessive memory than too little memory, I would consider the memory that those features save a bonus.

Thanks, useful article.

If you get a chance, please talk about swap memory as well, and how to configure it…

Do you mean ESX-level swapping, or swapping inside of VMware virtual machines?

Hi Justin,

How do you calculate the memory overhead at the planning stage?

I can’t seem to find any documentation with a formula for calculating this?

VMware has a chart for vSphere 4… I was thinking that it was slightly less on vSphere 5, but if you figure it at the vSphere 4 numbers you will be safe.

http://pubs.vmware.com/vsp40_i/wwhelp/wwhimpl/common/html/wwhelp.htm#href=resmgmt/r_overhead_memory_on_virtual_machines.html#1_7_9_9_10_1&single=true

Hi Justin,

I enjoyed this article thoroughly. Marvelous I should say.

One query here – you said – “For example if your current physical server infrastructure has 50 servers, each has 4GB of RAM and will require 1vCPU’s”.

Do you mean 50 VM servers or physical servers? (I got confused as you said “physical server infrastructure”)

I meant if you had 50 physical servers that you were converting into virtual machines… meaning you could consolidate those 50 down to 3.

Hello.

I think there is something weird in that formula:

“However if we lose a box we have to compensate for that so add in another 73Gb of RAM spread across 3 hosts (so about 14GB per physical).”

If you need to handle 218GB with three hosts and tolerate 1 host failure (2 remaining), you need 218 / 2 per host (plus some headroom for granularity, because the memory for a virtual server cannot be split) 😉

Cheers

Agree with Massimo: it should be total memory required / 2 on each host if you have a 3-host cluster.
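The rule the two comments above describe can be sketched as follows. The function name and the `tolerated_failures` parameter are hypothetical, and the sketch ignores the granularity headroom Massimo mentions.

```python
def per_host_with_failover_gb(total_gb, hosts, tolerated_failures=1):
    """Memory each host needs so the surviving hosts can carry the full load."""
    surviving = hosts - tolerated_failures
    if surviving < 1:
        raise ValueError("at least one host must survive")
    return total_gb / surviving


# 218GB across 3 hosts, tolerating the loss of 1 host.
print(per_host_with_failover_gb(218, 3))
```

For the article's numbers this gives 109GB per host, compared with the roughly 100GB per host reached in the post.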

Hi, my host (Sun SPARC X4100) with Sun Solaris and VMware ESX 3.5 has 64GB RAM, 16 CPUs, and a 500GB hard disk.

I created 45 virtual machines with Windows XP, 1.37GB RAM each and a 20GB HDD.

For 30 VMs in simultaneous use, how much memory (RAM) and how many CPUs must I give to each virtual machine for maximum performance?

The answer to your question depends on a lot of things. The general rule of thumb says to give each VM only 1 vCPU unless it demands more (meaning it is running at 100% utilization).

As for RAM… you will be overcommitting RAM, so make sure to install VMware Tools so that VMware can balloon when needed.

http://vmware-sizing.vnshell.net/ sizing tool

Thanks for the link. Next time you should include a little description so that people know where you are sending them.